What Is Semantic Search? Definition, How It Works, and Use Cases

Learn what semantic search is, how it uses AI to understand meaning behind queries, how it compares to keyword search, and where it is applied across industries.

What Is Semantic Search?

Semantic search is an information retrieval method that uses artificial intelligence and natural language processing to interpret the meaning and intent behind a query rather than relying on exact keyword matches. It returns results based on what the user actually means, not just what they literally type.

Traditional search engines operate on lexical matching. They scan for documents containing the exact terms in the query and rank them using signals like term frequency, document popularity, and metadata. Semantic search goes further by analyzing concepts, relationships between words, and the broader context of a question.

When a user searches for "best ways to keep remote employees motivated," a semantic search system understands this relates to employee engagement, remote work strategies, and retention. It surfaces relevant content even if those documents never contain the phrase "keep remote employees motivated."

The foundation of semantic search lies in representing language as mathematical structures. Words, sentences, and documents are converted into vector embeddings, which are numerical representations in high-dimensional space. Queries and content that share similar meaning end up close together in that space, enabling the system to match based on conceptual similarity rather than string overlap.

Semantic search has become a core component of modern search engines, enterprise knowledge platforms, e-commerce recommendation systems, and cognitive search tools. Its ability to bridge the gap between human language and stored information makes it one of the most practical applications of machine learning in everyday technology.

How Semantic Search Works

Semantic search systems combine multiple AI techniques into a pipeline that processes both queries and content to determine relevance by meaning. The process involves several interconnected stages.

Query Understanding

The first step is interpreting the user's input. The system applies natural language understanding to parse the query, identify entities, detect intent, and resolve ambiguity. If a user types "apple nutrition facts," the system determines whether "apple" refers to the fruit or the technology company based on surrounding context and the domain of the search.

Query understanding also includes expansion and reformulation. The system may recognize that "cardiovascular exercise benefits" and "heart health workout advantages" express the same concept. It enriches the query internally to cover synonyms, related phrases, and conceptual equivalents without requiring the user to think of every possible term.
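As a rough illustration of query expansion, the sketch below enriches a query with synonyms from a small lookup table. The `SYNONYMS` table and the `expand_query` function are hypothetical names invented for this example; production systems usually derive such equivalences from embeddings or curated thesauri rather than a hand-written dictionary.

```python
# Hypothetical synonym table for illustration only; a real system would
# learn or curate these relationships rather than hard-code them.
SYNONYMS = {
    "cardiovascular": ["heart", "cardio"],
    "exercise": ["workout", "training"],
    "benefits": ["advantages"],
}


def expand_query(query: str) -> list[str]:
    """Return the original terms followed by known synonyms, without duplicates."""
    terms = query.lower().split()
    expanded = list(terms)
    for term in terms:
        for synonym in SYNONYMS.get(term, []):
            if synonym not in expanded:
                expanded.append(synonym)
    return expanded


print(expand_query("cardiovascular exercise benefits"))
```

The original terms stay first, so a downstream ranker could still weight them more heavily than the added synonyms.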

Text Encoding with Transformer Models

Modern semantic search relies on transformer models to convert text into dense vector representations. Models like BERT and its successors read entire sentences at once, capturing the relationships between words in context.

Unlike older approaches that processed words individually, transformers understand that "bank" in "river bank" and "bank account" refers to entirely different concepts.

The transformer encodes both the query and each document (or document passage) into vectors of several hundred dimensions. These vectors capture not just the topic of the text but its tone, specificity, and relational meaning. The encoding process is the computational core of semantic search and is typically the most resource-intensive step.

Vector Similarity and Retrieval

Once both the query and the content library are encoded as vectors, the system scores how similar the query vector is to the document vectors. The most common measure is cosine similarity, which is the cosine of the angle between two vectors. Vectors pointing in similar directions score close to 1, indicating conceptual alignment, while unrelated (orthogonal) vectors score close to 0.
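A minimal sketch of cosine similarity follows, computed as the dot product of two vectors divided by the product of their lengths. The three-dimensional vectors are made-up toy values, not real embeddings, which typically have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between vectors a and b (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy vectors invented for this example.
query = [0.9, 0.1, 0.0]
docs = {
    "remote work engagement": [0.8, 0.2, 0.1],
    "python habitat": [0.0, 0.1, 0.9],
}

# Rank documents from most to least similar to the query.
ranked = sorted(docs, key=lambda name: cosine_similarity(query, docs[name]), reverse=True)
print(ranked)
```

Because the score depends only on direction, scaling a vector by a positive constant does not change its similarity to the query.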

To make this retrieval fast at scale, semantic search systems use approximate nearest neighbor (ANN) algorithms and specialized vector databases. These tools index millions or billions of vectors and return the closest matches in milliseconds. Without this optimization, searching through large content libraries would be impractically slow.
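To give a feel for how approximate nearest neighbor search avoids comparing the query with every vector, here is a small sketch of one classic idea, random-hyperplane hashing. This technique is chosen for illustration and is not named in the text above; vector databases generally use more sophisticated index structures. All function names and vectors are hypothetical.

```python
import random


def make_hyperplanes(num_planes, dim, seed=0):
    """Draw random hyperplane normals; the seed keeps the index reproducible."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_planes)]


def signature(vector, hyperplanes):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple(
        sum(v * h for v, h in zip(vector, plane)) >= 0 for plane in hyperplanes
    )


def build_index(vectors, hyperplanes):
    """Group document ids into buckets keyed by their bit signature."""
    buckets = {}
    for doc_id, vector in vectors.items():
        buckets.setdefault(signature(vector, hyperplanes), []).append(doc_id)
    return buckets


def candidate_ids(query_vector, buckets, hyperplanes):
    """Return only the documents that share the query's bucket."""
    return buckets.get(signature(query_vector, hyperplanes), [])


# Made-up 4-dimensional vectors for illustration.
vectors = {
    "doc-a": [0.9, 0.1, 0.0, 0.2],
    "doc-b": [-0.8, 0.3, 0.1, -0.4],
    "doc-c": [0.1, -0.9, 0.7, 0.0],
}
planes = make_hyperplanes(num_planes=4, dim=4)
index = build_index(vectors, planes)
print(candidate_ids(vectors["doc-a"], index, planes))
```

Vectors separated by a small angle tend to land in the same bucket, so only that bucket needs exact scoring. The trade-off is that a true neighbor can occasionally fall into a different bucket and be missed, which is what makes the search approximate.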

Re-Ranking and Hybrid Approaches

Many production semantic search systems use a two-stage approach. The first stage retrieves a broad set of candidate results using vector similarity. The second stage re-ranks those candidates using a more computationally expensive cross-encoder model that evaluates the query and each document together for fine-grained relevance scoring.
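The control flow of the two-stage approach can be sketched as follows. The scoring functions are passed in as parameters and are stand-ins: in a real system the first would be a vector similarity lookup and the second a cross-encoder model. The function name, the parameter names, and the toy scores are all assumptions made for this example.

```python
def retrieve_then_rerank(query, doc_ids, first_stage_score, second_stage_score,
                         k_candidates=3, k_final=2):
    """Keep the top k_candidates by the cheap score, then reorder them by the costly one."""
    candidates = sorted(
        doc_ids, key=lambda d: first_stage_score(query, d), reverse=True
    )[:k_candidates]
    return sorted(
        candidates, key=lambda d: second_stage_score(query, d), reverse=True
    )[:k_final]


# Toy scores invented for illustration (higher is better).
cheap = {"a": 0.9, "b": 0.8, "c": 0.7, "d": 0.1}
costly = {"a": 0.2, "b": 0.9, "c": 0.5, "d": 1.0}

result = retrieve_then_rerank(
    "any query",
    list(cheap),
    first_stage_score=lambda q, d: cheap[d],
    second_stage_score=lambda q, d: costly[d],
)
print(result)  # ['b', 'c']
```

Document `d` has the highest second-stage score but never reaches the re-ranker because the first stage filtered it out. This is the main design consideration when choosing how many candidates to retrieve.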

Hybrid search combines semantic retrieval with traditional keyword matching. This approach ensures that exact-match queries still return precise results while benefiting from semantic understanding for broader or more ambiguous queries. Most commercial search platforms now use some form of hybrid architecture because neither approach alone handles every query type optimally.
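One common way to merge a keyword ranking with a semantic ranking is reciprocal rank fusion (RRF). The text above does not say which fusion method any particular platform uses, so treat this as one possible sketch. The document ids are made up, and `k=60` is a commonly used default rather than a required value.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists: each document earns 1 / (k + rank) from every list it appears in."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical result lists from the two retrievers.
keyword_results = ["sku-123", "doc-a", "doc-b"]
semantic_results = ["doc-a", "doc-c", "sku-123"]

fused = reciprocal_rank_fusion([keyword_results, semantic_results])
print(fused)  # ['doc-a', 'sku-123', 'doc-c', 'doc-b']
```

RRF uses only rank positions, so it sidesteps the problem that keyword scores and cosine similarities live on different scales.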

Knowledge Graph Integration

Some semantic search systems incorporate knowledge graphs to enrich understanding. A knowledge graph stores structured relationships between entities, such as the fact that Paris is the capital of France, or that a specific drug treats a particular condition. When the search system encounters an entity in the query, it can traverse the graph to find related concepts and improve result quality.

Knowledge graph integration is particularly valuable in specialized domains like healthcare, legal research, and scientific literature, where precise entity relationships carry significant weight in determining relevance.
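Graph traversal for query enrichment can be sketched with a breadth-first search over a tiny in-memory graph. The graph contents (including the placeholder `DrugX` and `ConditionY` entities), the relation names, and the `related_entities` function are hypothetical; real knowledge graphs are far larger and are usually queried through a dedicated graph store.

```python
from collections import deque

# Toy knowledge graph: entity -> list of (relation, target entity).
GRAPH = {
    "Paris": [("capital_of", "France")],
    "France": [("located_in", "Europe")],
    "DrugX": [("treats", "ConditionY")],  # placeholder names, not real entities
}


def related_entities(start, max_hops=2):
    """Breadth-first traversal returning entities within max_hops of start."""
    seen = {start}
    queue = deque([(start, 0)])
    related = []
    while queue:
        entity, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for _relation, neighbor in GRAPH.get(entity, []):
            if neighbor not in seen:
                seen.add(neighbor)
                related.append(neighbor)
                queue.append((neighbor, hops + 1))
    return related


print(related_entities("Paris"))  # ['France', 'Europe']
```

The hop limit matters in practice: following too many edges pulls in loosely related entities and can dilute the query.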

| Component | Function | Key Detail |
|---|---|---|
| Query Understanding | Parses the query, identifies entities, detects intent, and resolves ambiguity. | Decides whether "apple" in "apple nutrition facts" means the fruit or the technology company. |
| Text Encoding with Transformer Models | Converts text into dense vector representations. | Models like BERT and its successors read entire sentences at once, capturing words in context. |
| Vector Similarity and Retrieval | Scores the query vector against document vectors, most commonly with cosine similarity. | ANN algorithms and vector databases keep retrieval fast at scale. |
| Re-Ranking and Hybrid Approaches | Retrieves candidates by vector similarity, then re-ranks them with a cross-encoder. | Hybrid search adds keyword matching so exact-match queries still return precise results. |
| Knowledge Graph Integration | Enriches understanding with structured relationships between entities. | Example relationship: Paris is the capital of France. |

Semantic Search vs Keyword Search

The differences between semantic search and keyword search are fundamental, not incremental. They represent two distinct philosophies about how to connect users with information.

Matching Logic

Keyword search operates on lexical matching. It looks for documents that contain the exact words in the query. If you search for "machine learning applications in healthcare," keyword search finds documents containing those terms and ranks them by factors like term frequency and document authority.
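A deliberately simple sketch of lexical matching is shown below: it counts how often each query term occurs in a document. Real keyword engines use more refined scoring, so this is only meant to show why matching on exact terms misses paraphrases. The function name and the sample documents are invented for the example.

```python
from collections import Counter


def keyword_score(query: str, document: str) -> int:
    """Sum of how many times each query term appears in the document."""
    counts = Counter(document.lower().split())
    return sum(counts[term] for term in query.lower().split())


query = "machine learning applications in healthcare"
doc_exact = "machine learning applications in healthcare are growing"
doc_paraphrase = "AI-driven diagnostics improve patient outcomes"

print(keyword_score(query, doc_exact))       # 5
print(keyword_score(query, doc_paraphrase))  # 0
```

The second document is on topic but shares no terms with the query, so a purely lexical scorer gives it nothing.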

Semantic search interprets the meaning of that query and can return documents about "AI-driven diagnostics," "predictive modeling for patient outcomes," or "neural network applications in clinical settings," even if none of those documents contain the phrase "machine learning applications in healthcare."

Handling Synonyms and Paraphrasing

Keyword search struggles with synonyms. A search for "automobile maintenance schedule" may miss documents that use "car," "vehicle," or "fleet servicing." Semantic search treats these as conceptually equivalent because their vector representations are similar. This capability alone accounts for a large share of the relevance improvement that semantic search delivers over keyword-based systems.

Context Sensitivity

Keyword search is context-blind. It treats each query term independently and cannot distinguish between different meanings of the same word. Semantic search uses the full context of the query to resolve ambiguity. The word "Python" in "Python data analysis libraries" points the system toward programming, while "Python habitat and behavior" directs it toward biology.

Query Length and Complexity

Keyword search performs best with short, specific queries composed of precise terms. Longer, conversational queries often produce poor results because the system tries to match every word. Semantic search handles natural language questions effectively. A query like "what are the best practices for onboarding new software engineers in a remote environment" works well in a semantic system because it processes the question as a unified concept rather than a bag of individual words.

When Keyword Search Still Wins

Keyword search remains superior for exact-match needs. When a user searches for a specific product SKU, an error code, or a unique identifier, keyword search returns the correct result immediately. Semantic search may introduce noise by trying to find conceptually similar results when an exact match is what the user needs. This is why most modern systems use a hybrid approach that combines both methods.

Semantic Search Use Cases

Semantic search has moved from a research topic to a production technology deployed across industries and applications.

Web Search Engines

Major search engines have incorporated semantic search as a core ranking signal. Google's introduction of BERT-based query understanding in 2019 marked a turning point where search results began reflecting what users meant rather than what they typed. This shift improved results for conversational queries, voice searches, and long-tail questions that previously returned irrelevant pages.

E-Commerce and Product Discovery

Online retailers use semantic search to connect shoppers with products. A search for "lightweight jacket for spring hiking" returns relevant products even when their listings describe them as "breathable trail outerwear" or "packable windbreaker." Semantic product search reduces zero-result queries and improves conversion rates by surfacing items that match the shopper's actual need rather than their exact phrasing.

Enterprise Knowledge Management

Organizations use semantic search to make internal knowledge accessible. Employees searching across wikis, document repositories, and communication archives benefit from a system that understands their questions in context.

A new hire searching "how do I request time off" gets directed to the correct HR policy document regardless of whether it is titled "PTO Request Procedure" or "Leave of Absence Guidelines." This application overlaps significantly with cognitive search, which layers additional AI capabilities on top of semantic retrieval.

Customer Support and Chatbots

Support systems use semantic search to match customer questions against knowledge base articles. When a customer describes a problem in their own words, the system identifies the relevant troubleshooting guide or FAQ entry. This powers both self-service portals and agent-assist tools where support representatives receive suggested answers in real time.

Retrieval-Augmented Generation

Semantic search is a critical component of retrieval-augmented generation (RAG), an architecture where large language models ground their responses in retrieved documents. The semantic search layer finds the most relevant documents from a knowledge base, and the language model uses those documents to generate accurate, contextual answers.

Without semantic search, RAG systems would rely on keyword matching to select source documents, producing less relevant and less reliable outputs.
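The hand-off from retrieval to generation can be sketched as prompt assembly: the retrieved passages are numbered and placed ahead of the question. The prompt wording, the `build_rag_prompt` name, and the sample passages are assumptions for illustration, and the call to a language model is intentionally left out.

```python
def build_rag_prompt(question, passages, max_passages=3):
    """Format the top retrieved passages and the question into one prompt string."""
    context = "\n\n".join(
        f"[{i}] {passage}"
        for i, passage in enumerate(passages[:max_passages], start=1)
    )
    return (
        "Answer the question using only the sources below.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Hypothetical passages, assumed to be ordered by the semantic search layer.
retrieved = [
    "Employees request time off through the HR portal.",
    "PTO requests need manager approval.",
    "Leave balances reset each January.",
    "The cafeteria opens at 8 am.",
]
prompt = build_rag_prompt("How do I request time off?", retrieved)
print(prompt)
```

Capping the number of passages keeps the prompt within the model's context limits and keeps weakly related text away from the model.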

Academic and Scientific Research

Researchers use semantic search to discover relevant papers across disciplines. A biologist studying protein folding can find relevant work published in computational chemistry or materials science journals even when those papers use different terminology. Semantic search accelerates literature review by surfacing conceptually related work that traditional keyword searches would miss.

Education and Learning Platforms

Learning platforms apply semantic search to help learners find relevant courses, modules, and resources. When a learner searches for "how to analyze data with spreadsheets," the system can return courses on Excel data analysis, Google Sheets formulas, or introductory data science, matching the learner's intent to the most appropriate content regardless of how each course is titled or described.

Challenges and Limitations

Semantic search offers significant advantages over keyword matching, but it introduces its own set of technical and operational challenges.

Computational Cost

Encoding text into vector representations requires running deep learning models, which demand substantial compute resources. For large content libraries, generating and storing millions of vector embeddings requires specialized infrastructure including vector databases and GPU-accelerated encoding pipelines. The ongoing cost of re-encoding new content and retraining models adds to the operational expense.

Handling Highly Specific Queries

Semantic search excels at understanding meaning but can struggle with very precise queries where exact matching matters. Searches for specific codes, identifiers, part numbers, or proper nouns may return conceptually related but incorrect results. Production systems address this by combining semantic and keyword retrieval, but tuning the balance between the two requires ongoing experimentation.

Language and Domain Dependency

Pre-trained transformer models perform best in the languages and domains they were trained on. A model trained primarily on English web text may produce poor embeddings for specialized medical terminology, legal jargon, or low-resource languages. Fine-tuning models on domain-specific data improves performance but requires labeled training data and machine learning expertise.

The quality of a neural-network-based embedding model depends directly on the relevance and diversity of its training corpus.

Index Freshness

Semantic search indexes must be kept current. When content changes, its vector representation must be regenerated and updated in the index. In dynamic environments where content is created or modified frequently, maintaining index freshness introduces latency and engineering complexity. Stale embeddings can cause the system to return outdated or incorrect results.
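One simple way to detect stale embeddings is to store a hash of the text that was embedded and compare it with the current content. The sketch below assumes the index keeps such a hash per document; the function names and sample data are hypothetical.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def find_stale(documents, indexed_hashes):
    """Return ids whose text changed since embedding, or that were never embedded."""
    return [
        doc_id
        for doc_id, text in documents.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    ]


# Hashes recorded when the embeddings were last generated (toy data).
indexed_hashes = {
    "a": content_hash("original text"),
    "b": content_hash("old version"),
}
documents = {"a": "original text", "b": "new version", "c": "brand new document"}

print(find_stale(documents, indexed_hashes))  # ['b', 'c']
```

Only the returned ids need to be re-encoded, which avoids recomputing embeddings for content that has not changed.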

Evaluation Difficulty

Measuring semantic search quality is harder than measuring keyword search quality. With keyword search, relevance is relatively objective: does the document contain the search terms? Semantic relevance is subjective and context-dependent. Evaluating whether a result is "conceptually similar enough" requires human judgment, making automated quality assurance more complex. Organizations need to invest in relevance evaluation frameworks and user feedback loops to maintain search quality over time.

Bias in Embeddings

Vector embeddings inherit the biases present in their training data. If the training corpus over-represents certain perspectives, demographics, or viewpoints, the resulting embeddings will reflect those skews. In search applications, this can surface as certain types of content being systematically favored or suppressed. Addressing embedding bias requires careful dataset curation, evaluation, and sometimes explicit debiasing techniques.

How to Implement Semantic Search

Building a semantic search system involves selecting models, preparing data, deploying infrastructure, and iterating on relevance. The following steps outline the core process.

Define the Search Scope and Requirements

Start by identifying what content the system will search over and what types of queries users will issue. A customer support system searching a knowledge base of 5,000 articles has very different requirements from a research platform searching 10 million academic papers. Content type, volume, update frequency, and query patterns all shape the architecture.

Choose an Embedding Model

The embedding model converts text into vectors and is the most critical component. Options range from open-source models like Sentence-BERT and E5 to commercial embedding APIs. Key selection criteria include:

- Supported languages and domain coverage

- Vector dimensionality and storage requirements

- Encoding speed and throughput

- Quality on benchmark datasets relevant to your domain

- Licensing and cost structure

For specialized domains, fine-tuning a general-purpose model on domain-specific data typically produces better results than using a generic model out of the box.

Prepare and Encode Content

Content preparation involves cleaning, chunking, and encoding documents. Long documents are typically split into passages of 200 to 500 tokens because embedding models accept a limited input length, and a single vector represents a focused passage more faithfully than a long, multi-topic document. Each passage is encoded into a vector and stored alongside its metadata (source document, title, section, timestamp).
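A minimal chunking sketch is shown below. It approximates tokens with whitespace-separated words, whereas a real pipeline would count tokens with the embedding model's own tokenizer. The overlap between consecutive chunks is a common practice added here as an assumption; it is not something the text above prescribes.

```python
def chunk_text(text, max_tokens=300, overlap=50):
    """Split text into windows of at most max_tokens words, sharing `overlap` words."""
    if overlap >= max_tokens:
        raise ValueError("overlap must be smaller than max_tokens")
    tokens = text.split()  # crude stand-in for a model tokenizer
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + max_tokens]))
        if start + max_tokens >= len(tokens):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
print(chunk_text(sample, max_tokens=4, overlap=1))
# ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Overlap lets a sentence that straddles a boundary appear whole in at least one chunk, at the cost of storing some words twice.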

Data quality directly affects search quality. Duplicate content, outdated documents, and poorly structured text all degrade the embedding space. Invest in content cleanup before encoding.

Select a Vector Database

Vector databases store embeddings and perform fast similarity search. Popular options include Pinecone, Weaviate, Milvus, Qdrant, and pgvector (for PostgreSQL). Selection criteria include:

- Scale (number of vectors and query throughput)

- Filtering capabilities (metadata-based pre-filtering)

- Integration with existing infrastructure

- Managed versus self-hosted deployment

- Cost at projected scale
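To make the filtering criterion concrete, here is a tiny in-memory stand-in for a vector database that applies a metadata filter before scoring. `TinyVectorStore` is a hypothetical class written for this example and does not mirror the API of any product listed above. It scores with a plain dot product and scans every row, which real vector databases avoid.

```python
class TinyVectorStore:
    """In-memory stand-in for a vector database (illustrative only)."""

    def __init__(self):
        self._rows = []  # each row is (doc_id, vector, metadata)

    def add(self, doc_id, vector, metadata):
        self._rows.append((doc_id, vector, metadata))

    def search(self, query_vector, top_k=3, where=None):
        where = where or {}
        # Pre-filter: drop rows whose metadata does not match before scoring.
        allowed = [
            row for row in self._rows
            if all(row[2].get(key) == value for key, value in where.items())
        ]
        allowed.sort(
            key=lambda row: sum(q * x for q, x in zip(query_vector, row[1])),
            reverse=True,
        )
        return [row[0] for row in allowed[:top_k]]


store = TinyVectorStore()
store.add("policy-en", [0.9, 0.1], {"lang": "en", "type": "policy"})
store.add("policy-es", [0.95, 0.05], {"lang": "es", "type": "policy"})
store.add("faq-en", [0.2, 0.8], {"lang": "en", "type": "faq"})

print(store.search([1.0, 0.0], top_k=2, where={"lang": "en"}))
# ['policy-en', 'faq-en']
```

Without the filter, the Spanish policy would rank first for this query. Pre-filtering guarantees that results respect constraints such as language, access rights, or document type.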

Build the Query Pipeline

The query pipeline processes user input, generates a query embedding, retrieves candidate results, and optionally re-ranks them. A typical pipeline includes:

- Query preprocessing (spelling correction, expansion)

- Query encoding using the same embedding model

- Vector similarity search against the database

- Optional re-ranking with a cross-encoder model

- Result formatting and presentation

Hybrid pipelines that combine vector search with keyword search (using BM25 or similar algorithms) often outperform either approach alone, particularly on workloads that mix exact-match and natural-language queries.
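The pipeline stages listed above can be wired together as a single function that takes each stage as a parameter. Everything in this sketch is a stand-in: `toy_encode` is a word-count vector over a four-word vocabulary, not a semantic embedding model, and `toy_search` scans a three-document dictionary instead of querying a vector database.

```python
def run_query_pipeline(raw_query, preprocess, encode, search, rerank=None, top_k=3):
    """Preprocess, encode, retrieve, optionally re-rank, then format results."""
    query = preprocess(raw_query)
    query_vector = encode(query)
    candidates = search(query_vector, top_k)
    if rerank is not None:
        candidates = rerank(query, candidates)
    return [{"rank": i, "doc_id": doc_id} for i, doc_id in enumerate(candidates, start=1)]


# Toy stand-ins for the real components (assumptions for illustration).
VOCAB = ["refund", "password", "reset", "shipping"]


def toy_encode(text):
    """Count vocabulary words; the same encoder is used for queries and documents."""
    words = text.split()
    return [words.count(term) for term in VOCAB]


DOCS = {"kb-1": "password reset steps", "kb-2": "refund policy", "kb-3": "shipping times"}
DOC_VECTORS = {doc_id: toy_encode(text) for doc_id, text in DOCS.items()}


def toy_search(query_vector, top_k):
    """Rank documents by dot product with the query vector."""
    ranked = sorted(
        DOC_VECTORS,
        key=lambda d: sum(q * x for q, x in zip(query_vector, DOC_VECTORS[d])),
        reverse=True,
    )
    return ranked[:top_k]


results = run_query_pipeline(
    "  Reset my PASSWORD ",
    preprocess=lambda q: q.strip().lower(),
    encode=toy_encode,
    search=toy_search,
    top_k=1,
)
print(results)  # [{'rank': 1, 'doc_id': 'kb-1'}]
```

Passing the stages in as functions makes it straightforward to swap the toy encoder for a real embedding model, or to add a re-ranker, without changing the pipeline itself.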

Evaluate and Iterate

Launch with a test dataset of representative queries and expected results. Measure relevance using metrics like Mean Reciprocal Rank (MRR), Normalized Discounted Cumulative Gain (NDCG), and precision at K. Collect user feedback through click tracking, explicit rating mechanisms, and search session analysis.
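The three metrics named above can be computed with a few lines each. The sketch assumes binary relevance (a set of relevant ids) for precision and reciprocal rank, and graded relevance (a dictionary of gains) for NDCG. The function names and sample data are illustrative.

```python
import math


def precision_at_k(ranked, relevant, k):
    """Fraction of the top k results that are relevant."""
    return sum(1 for doc_id in ranked[:k] if doc_id in relevant) / k


def reciprocal_rank(ranked, relevant):
    """1 / position of the first relevant result, or 0.0 if none is found."""
    for rank, doc_id in enumerate(ranked, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs):
    """Average reciprocal rank over (ranked, relevant) pairs, one per query."""
    return sum(reciprocal_rank(ranked, relevant) for ranked, relevant in runs) / len(runs)


def ndcg_at_k(ranked, gains, k):
    """Discounted cumulative gain of the ranking divided by that of the ideal ordering."""
    dcg = sum(
        gains.get(doc_id, 0) / math.log2(i + 1)
        for i, doc_id in enumerate(ranked[:k], start=1)
    )
    ideal = sorted(gains.values(), reverse=True)[:k]
    idcg = sum(gain / math.log2(i + 1) for i, gain in enumerate(ideal, start=1))
    return dcg / idcg if idcg > 0 else 0.0


print(precision_at_k(["a", "b", "c"], {"a", "c"}, k=2))                   # 0.5
print(mean_reciprocal_rank([(["a"], {"a"}), (["x", "a"], {"a"})]))       # 0.75
print(ndcg_at_k(["b", "a"], {"a": 3, "b": 1}, k=2))
```

Precision at K ignores ordering within the top K, MRR rewards placing the first relevant result early, and NDCG accounts for both position and graded relevance. Tracking more than one of them gives a fuller picture.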

Iteration is continuous. Model updates, content changes, and shifts in user behavior all require ongoing tuning. Organizations that treat semantic search as a static deployment rather than a living system see relevance degrade over time.

FAQ

How is semantic search different from keyword search?

Keyword search matches documents containing the exact terms in a query. Semantic search interprets the meaning behind the query and returns results that are conceptually relevant, even when they use different words. A keyword search for "automobile repair shops" misses results about "car mechanics" or "vehicle service centers." Semantic search recognizes these as equivalent concepts and includes them.

What AI technologies power semantic search?

Semantic search relies on natural language processing, transformer models like BERT, vector embeddings, and similarity search algorithms.

Some implementations also incorporate knowledge graphs for entity relationship understanding and deep learning models for re-ranking results.

Can semantic search handle multiple languages?

Yes, multilingual transformer models can encode text from multiple languages into the same vector space. This means a query in English can match relevant documents written in Spanish, French, or Mandarin. However, performance varies by language and depends on how well-represented each language is in the model's training data.

Is semantic search the same as AI search?

AI search is a broader term that includes any search technology enhanced by artificial intelligence. Semantic search is one specific approach within AI search, focused on understanding meaning through vector representations. Other AI search capabilities include entity extraction, intent classification, personalized ranking, and retrieval-augmented generation. Semantic search is often the foundational layer that makes these other capabilities possible.

What is the role of vector embeddings in semantic search?

Vector embeddings are the core data structure. They convert text into numerical arrays that capture meaning in a format computers can compare mathematically. When two pieces of text have similar meanings, their vectors point in similar directions in high-dimensional space. This mathematical property is what enables the system to find relevant content based on meaning rather than keyword overlap.

How accurate is semantic search compared to traditional search?

Accuracy depends on the quality of the embedding model, the domain, and the query type. For conversational, ambiguous, or synonym-heavy queries, semantic search typically outperforms keyword search by a wide margin. For exact-match queries (product codes, specific names, technical identifiers), keyword search can be more precise. The most accurate production systems use a hybrid approach that combines both methods to cover all query types effectively.

Further reading

AI Readiness: Assessment Checklist for Teams

Evaluate your team's AI readiness with a practical checklist covering data, infrastructure, skills, governance, and culture. Actionable criteria for every dimension.

Predictive Modeling: Definition, How It Works, and Key Use Cases

Predictive modeling uses statistical and machine learning techniques to forecast future outcomes from historical data. Learn how it works, common model types, and real-world applications.

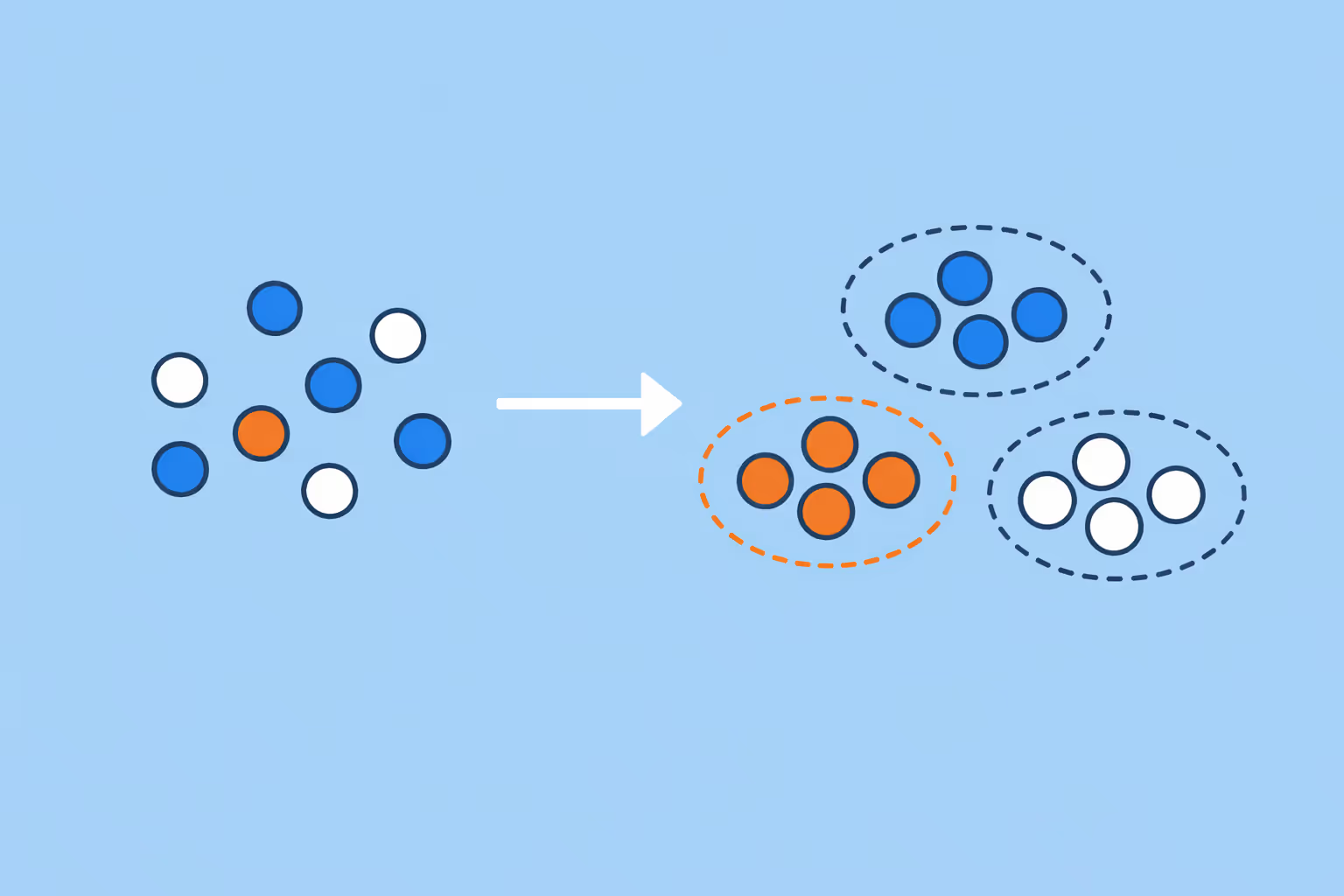

Clustering in Machine Learning: Methods, Use Cases, and Practical Guide

Clustering in machine learning groups unlabeled data by similarity. Learn the key methods, real-world use cases, and how to choose the right approach.

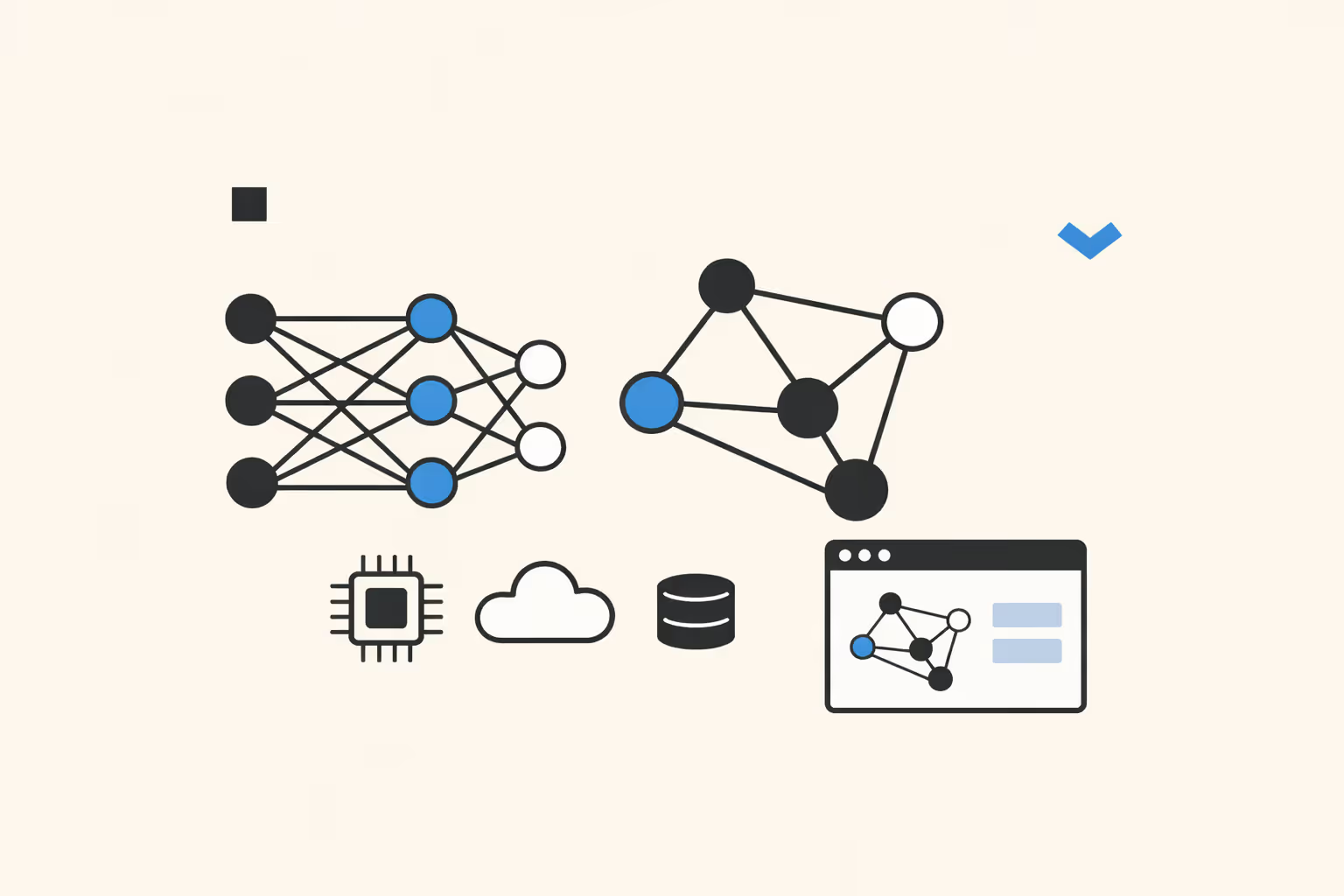

Graph Neural Networks (GNNs): How They Work, Types, and Practical Applications

Learn what graph neural networks are, how GNNs process graph-structured data through message passing, their main types, real-world use cases, and how to get started.

What is an AI Agent in eLearning? How It Works, Types, and Benefits

Learn what AI agents in eLearning are, how they differ from automation, their capabilities, limitations, and best practices for implementation in learning programs.

What Is Neuromorphic Computing? Definition, Architecture, and Applications

Learn what neuromorphic computing is, how brain-inspired chips process information using spiking neural networks, and why this architecture matters for energy-efficient AI at the edge.