What Is PyTorch? How It Works, Key Features, and Use Cases

PyTorch is an open-source deep learning framework built on Python. Learn how it works, its core features, real-world use cases, and how to get started.

What Is PyTorch?

PyTorch is an open-source machine learning framework designed for building, training, and deploying deep learning models. Originally developed by Meta AI (formerly Facebook AI Research) and now governed by the PyTorch Foundation under the Linux Foundation, it provides a flexible Python-first interface that has made it the dominant framework in academic research and an increasingly popular choice for production systems.

At its core, PyTorch operates on tensors, multi-dimensional arrays similar to NumPy arrays but with full GPU acceleration support. These tensors flow through computational graphs that represent the mathematical operations of a neural network.

What distinguishes PyTorch from earlier frameworks is its use of dynamic computation graphs, which are constructed on the fly during execution rather than being defined and compiled ahead of time.

PyTorch has become the most widely used framework in artificial intelligence research, powering breakthroughs in computer vision, natural language processing, generative modeling, and scientific computing. Major organizations including Tesla, OpenAI, Microsoft, and Hugging Face rely on PyTorch for both research experimentation and large-scale model deployment.

Its ecosystem includes specialized libraries for vision, text, audio, and distributed training, making it a comprehensive platform for the full model development lifecycle.

How PyTorch Works

Tensors and GPU Acceleration

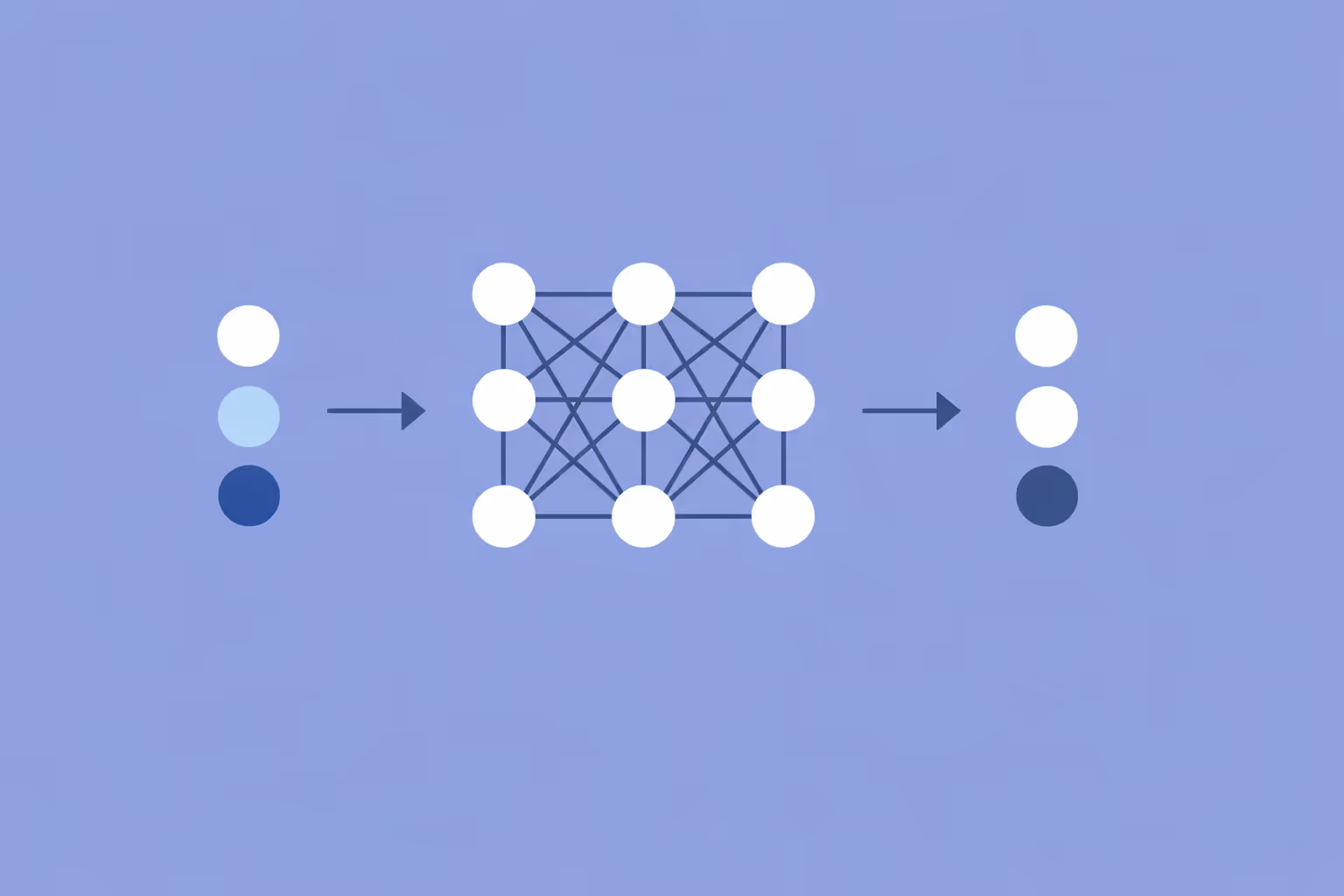

The fundamental data structure in PyTorch is the tensor. A tensor is a generalized matrix that can hold data in any number of dimensions: a scalar is a zero-dimensional tensor, a vector is one-dimensional, a matrix is two-dimensional, and higher-dimensional tensors represent the batches and channels common in deep learning workloads.

PyTorch tensors support the same slicing, indexing, and broadcasting operations familiar to NumPy users, but with one critical addition: they can be moved to GPUs for massively parallel computation.

Moving a tensor to a CUDA-enabled GPU is a single method call. Once on the GPU, operations on that tensor (with all other operands on the same device) execute on the graphics processor, which can perform thousands of floating-point calculations simultaneously. This hardware acceleration is what makes training large neural networks feasible. Without it, the matrix multiplications that dominate every forward and backward pass would take orders of magnitude longer.
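A minimal sketch of the ideas above: tensors of different ranks, NumPy-style slicing and broadcasting, and moving data to a GPU when one is available. The variable names and shapes are illustrative assumptions; the code falls back to the CPU if no CUDA device is visible.

```python
import torch

# Tensors of increasing rank: scalar (0-D), vector (1-D), matrix (2-D),
# and a batch of RGB images (4-D: batch, channels, height, width).
scalar = torch.tensor(3.0)
vector = torch.tensor([1.0, 2.0, 3.0])
matrix = torch.arange(6, dtype=torch.float32).reshape(2, 3)  # [[0, 1, 2], [3, 4, 5]]
images = torch.zeros(8, 3, 32, 32)

# Broadcasting: the (3,) vector is added to each row of the (2, 3) matrix.
row_sums = (matrix + vector).sum(dim=1)
first_column = matrix[:, 0]  # slicing works as in NumPy

# Use a GPU if one is visible, otherwise stay on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
matrix_on_device = matrix.to(device)               # the single call that moves data
product = matrix_on_device @ matrix_on_device.T    # computed on `device`
```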

Dynamic Computation Graphs

PyTorch builds its computation graph dynamically during the forward pass. Every operation on a tensor that requires gradient tracking is recorded in a directed acyclic graph. When you call the backward method on a loss value, PyTorch traverses this graph in reverse to compute gradients for every parameter, implementing the backpropagation algorithm automatically.

The dynamic nature of this graph is what makes PyTorch particularly intuitive. Because the graph is rebuilt at every forward pass, you can use standard Python control flow (if statements, for loops, recursion) inside your model definitions. The network architecture can change from one input to the next, which is valuable for tasks involving variable-length sequences, tree-structured data, or conditional computation paths.

This approach, sometimes called "define-by-run," contrasts with the "define-and-run" paradigm of earlier frameworks where the entire computation graph had to be specified before any data flowed through it. The practical benefit is faster prototyping, easier debugging with standard Python tools, and models that read like straightforward Python programs rather than abstract graph definitions.
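To make "define-by-run" concrete, here is a sketch of a hypothetical model (`AdaptiveDepthNet` is an invented name) whose depth is decided by an ordinary Python `while` loop at run time. Because the graph is recorded as the code executes, different inputs produce graphs of different lengths, and `backward()` still works.

```python
import torch
import torch.nn as nn


class AdaptiveDepthNet(nn.Module):
    """Hypothetical model: keeps applying one layer until the activation is small."""

    def __init__(self, width: int = 16, max_steps: int = 5):
        super().__init__()
        self.layer = nn.Linear(width, width)
        self.max_steps = max_steps

    def forward(self, x: torch.Tensor) -> tuple[torch.Tensor, int]:
        steps = 0
        # Plain Python control flow; autograd records whichever path actually runs.
        while steps < self.max_steps and x.norm() > 1.0:
            x = torch.tanh(self.layer(x)) * 0.5
            steps += 1
        return x, steps


model = AdaptiveDepthNet()
_, steps_small = model(torch.zeros(16))         # norm 0: loop body never runs
out_big, steps_big = model(torch.full((16,), 10.0))
out_big.sum().backward()                        # gradients flow through every executed step
```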

Autograd: Automatic Differentiation

The autograd engine is the system that powers PyTorch's ability to compute gradients automatically. When you create a tensor with the requires_grad=True flag, PyTorch begins tracking every operation performed on it. Each operation records the function that created it and a reference to its input tensors, forming the computational graph.

During the backward pass, autograd applies the chain rule of calculus to propagate gradients from the loss function back through every intermediate operation to the model parameters. This eliminates the need for manual gradient derivation, which would be error-prone and impractical for networks with millions or billions of parameters.
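A small worked example of autograd, with values chosen so the gradients can be checked by hand. For y = w·x² + 4x at x = 2 and w = 3, the chain rule gives dy/dx = 2wx + 4 = 16 and dy/dw = x² = 4.

```python
import torch

x = torch.tensor(2.0, requires_grad=True)
w = torch.tensor(3.0, requires_grad=True)

# Forward pass: each result records the function that created it (its grad_fn).
y = w * x ** 2 + 4 * x      # 3 * 4 + 8 = 20
print(y.grad_fn)            # an AddBackward0 node linking back to the inputs

# Backward pass: autograd applies the chain rule from y back to the leaf tensors.
y.backward()
print(x.grad, w.grad)       # dy/dx = 2*w*x + 4, dy/dw = x**2
```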

Autograd supports higher-order gradients, in-place operations (with some restrictions), and custom gradient functions. Researchers working on novel optimization methods or architectures can define their own forward and backward computations for custom layers, giving them full control over the gradient computation process.
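The two extension points mentioned above can be sketched with `torch.autograd.Function` (custom forward and backward) and `torch.autograd.grad(..., create_graph=True)` (higher-order gradients). `ClippedReLU` is a hypothetical layer invented for illustration: a ReLU whose backward pass clips the incoming gradient to [-1, 1].

```python
import torch


class ClippedReLU(torch.autograd.Function):
    """Hypothetical custom op: ReLU forward, gradient clipped to [-1, 1] in backward."""

    @staticmethod
    def forward(ctx, x):
        ctx.save_for_backward(x)        # keep the input for use in backward
        return x.clamp(min=0)

    @staticmethod
    def backward(ctx, grad_output):
        (x,) = ctx.saved_tensors
        grad = grad_output.clamp(-1.0, 1.0)           # custom rule: clip the gradient
        return grad * (x > 0).to(grad.dtype)          # standard ReLU mask


def second_derivative_of_cube(value: float) -> float:
    """d^2/dx^2 of x**3 is 6x; create_graph=True makes the first derivative differentiable."""
    x = torch.tensor(value, requires_grad=True)
    (dy_dx,) = torch.autograd.grad(x ** 3, x, create_graph=True)  # 3x^2
    (d2y_dx2,) = torch.autograd.grad(dy_dx, x)                     # 6x
    return d2y_dx2.item()


inp = torch.tensor([-1.0, 2.0], requires_grad=True)
out = ClippedReLU.apply(inp)
(out * 5).sum().backward()   # incoming gradient of 5 is clipped to 1, then masked
```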

The nn Module and Model Building

PyTorch organizes neural network components in its torch.nn module. This module provides pre-built layers (linear, convolutional, recurrent, attention), activation functions, loss functions, and container classes for composing them into complete architectures. Users define models by subclassing nn.Module and implementing a forward method that specifies how data flows through the network.

This object-oriented design makes models modular and reusable. A convolutional neural network block can be defined once and used multiple times within a larger architecture. A transformer model can compose encoder and decoder modules that are each built from attention and feed-forward sub-modules. The nesting is natural and maps directly to how researchers think about network design.
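A sketch of the composition pattern described above: a reusable block is defined once as an `nn.Module` and nested several times inside a larger model. `ConvBlock` and `SmallCNN` are illustrative names, and the layer sizes are arbitrary assumptions.

```python
import torch
import torch.nn as nn


class ConvBlock(nn.Module):
    """Reusable conv -> batch norm -> ReLU block."""

    def __init__(self, in_channels: int, out_channels: int):
        super().__init__()
        self.conv = nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1)
        self.bn = nn.BatchNorm2d(out_channels)
        self.act = nn.ReLU()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.act(self.bn(self.conv(x)))


class SmallCNN(nn.Module):
    """Composes ConvBlock twice, then a linear classifier head."""

    def __init__(self, num_classes: int = 10):
        super().__init__()
        self.features = nn.Sequential(
            ConvBlock(3, 16),
            nn.MaxPool2d(2),
            ConvBlock(16, 32),
            nn.AdaptiveAvgPool2d(1),   # -> (batch, 32, 1, 1) for any input size
        )
        self.classifier = nn.Linear(32, num_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        x = self.features(x)
        return self.classifier(torch.flatten(x, 1))


model = SmallCNN()
logits = model(torch.randn(4, 3, 32, 32))
```

Because submodules are registered as attributes, `model.parameters()` automatically collects the weights of every nested block.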

The torch.optim module complements nn by providing optimization algorithms. Stochastic gradient descent, Adam, AdamW, RMSprop, and other optimizers are available out of the box. A typical training loop computes the forward pass, calculates the loss, clears stale gradients with optimizer.zero_grad() (PyTorch accumulates gradients by default), calls loss.backward() to compute new gradients, and then calls optimizer.step() to update the weights.
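The loop described above, sketched on a toy regression problem. The synthetic data (y = 2x + 1 plus noise), the learning rate, and the step count are assumptions chosen so the example converges quickly; any torch.optim optimizer could replace SGD here.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical toy data: y = 2x + 1 with a little Gaussian noise.
x = torch.linspace(-1, 1, 64).unsqueeze(1)
y = 2 * x + 1 + 0.05 * torch.randn_like(x)

model = nn.Linear(1, 1)
loss_fn = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

for step in range(300):
    prediction = model(x)            # forward pass
    loss = loss_fn(prediction, y)    # loss
    optimizer.zero_grad()            # drop gradients from the previous step
    loss.backward()                  # backward pass: populate .grad
    optimizer.step()                 # update weights
```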

| Component | Function | Key Detail |
|---|---|---|
| Tensors and GPU Acceleration | Multi-dimensional arrays, the fundamental data structure in PyTorch | Moved to a CUDA-enabled GPU with a single method call |
| Dynamic Computation Graphs | Graph built on the fly during each forward pass | Allows Python control flow; suits variable-length sequences and tree-structured data |
| Autograd: Automatic Differentiation | Computes gradients automatically via the chain rule | Tracking starts when a tensor is created with requires_grad=True |
| The nn Module and Model Building | Pre-built layers, activations, losses, and containers in torch.nn | Models subclass nn.Module and implement a forward method |

Key PyTorch Features

Pythonic and Intuitive API

PyTorch was designed to feel native to Python developers. Model definitions are standard Python classes. Training loops are explicit for-loops rather than hidden behind abstract fit methods. Debugging is done with pdb, print statements, or any Python debugger. This transparency reduces the learning curve for developers who already know Python and makes it straightforward to inspect intermediate values, set breakpoints, and trace errors.

The imperative programming style also means that what you write is what executes. In the default eager mode, there is no separate compilation step that transforms your code into an optimized graph before running. This directness accelerates experimentation because changes to the model or training procedure take effect immediately.

TorchScript and Production Deployment

While dynamic graphs are ideal for research, production environments often need static, optimized models. TorchScript was PyTorch's original answer to this: it serializes a model so it can run independently of the Python runtime. Models are converted either by tracing (recording the operations executed during a sample forward pass) or by scripting (compiling the Python source, including its control flow, into a static graph).

TorchScript models can be loaded and run in C++ environments, deployed on mobile devices through PyTorch Mobile, or served through inference engines. This answered the long-standing criticism that PyTorch was a research-only framework and made it a viable end-to-end platform from experimentation through production deployment. In recent releases TorchScript is in maintenance mode: torch.export is the recommended path for capturing new models, and ExecuTorch succeeds PyTorch Mobile for on-device deployment.
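A sketch contrasting tracing and scripting with `torch.jit.trace` and `torch.jit.script`, plus a save/load round trip through an in-memory buffer. `Gate` is a hypothetical module with a data-dependent branch; it shows the main pitfall of tracing, which freezes whichever branch the example input took. Recent PyTorch releases may emit warnings for these APIs and point to torch.export.

```python
import io

import torch
import torch.nn as nn


class Gate(nn.Module):
    """Hypothetical module with data-dependent control flow."""

    def __init__(self):
        super().__init__()
        self.linear = nn.Linear(4, 4)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        if x.sum() > 0:
            return self.linear(x)
        return -x


model = Gate().eval()
example = torch.ones(1, 4)

traced = torch.jit.trace(model, example)   # records only the branch taken for `example`
scripted = torch.jit.script(model)         # compiles the source, keeping the `if`

# Serialize the scripted model and load it back without the Python class.
buffer = io.BytesIO()
torch.jit.save(scripted, buffer)
buffer.seek(0)
restored = torch.jit.load(buffer)

neg = -torch.ones(1, 4)   # an input that should take the `return -x` branch
```

With `neg`, the scripted and restored models return `-neg`, while the traced model still applies `self.linear`, which is why scripting is preferred for models with input-dependent control flow.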

Distributed Training

Training modern deep learning models often exceeds the memory and compute capacity of a single GPU. PyTorch provides multiple strategies for distributing training across hardware. DataParallel splits each batch across the GPUs of a single machine from one process, but the PyTorch documentation recommends DistributedDataParallel (DDP) even in that case. DDP runs one process per GPU, scales from a single machine to many, and synchronizes gradients with all-reduce operations that overlap with the backward pass.

Fully Sharded Data Parallel (FSDP) partitions model parameters, gradients, and optimizer states across devices, enabling the training of models that are too large to fit on a single GPU.

These distributed training utilities integrate with the existing training loop pattern rather than requiring a fundamentally different programming model. A machine learning engineer can scale a single-GPU training script to a multi-node cluster with relatively few code changes.
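A minimal sketch of how little the training step changes under DistributedDataParallel. To stay runnable on one machine without GPUs, it assumes a single process (`world_size=1`), the CPU `gloo` backend, and a file-based rendezvous in a temporary directory; a real multi-GPU job would typically be launched with `torchrun`, use the `nccl` backend, and pass `device_ids` to DDP. `train_step_ddp` is a hypothetical helper name.

```python
import os
import tempfile

import torch
import torch.distributed as dist
import torch.nn as nn
from torch.nn.parallel import DistributedDataParallel as DDP


def train_step_ddp(rank: int = 0, world_size: int = 1) -> float:
    # Assumed setup: CPU-only gloo backend with a file-based rendezvous.
    store_path = os.path.join(tempfile.mkdtemp(), "ddp_store")
    dist.init_process_group(
        backend="gloo",
        init_method=f"file://{store_path}",
        rank=rank,
        world_size=world_size,
    )
    try:
        torch.manual_seed(0)
        model = DDP(nn.Linear(10, 1))   # wraps an ordinary module
        optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
        x, y = torch.randn(8, 10), torch.randn(8, 1)

        # The usual loop, unchanged: DDP averages gradients across ranks in backward().
        loss = nn.functional.mse_loss(model(x), y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        return loss.item()
    finally:
        dist.destroy_process_group()
```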

Rich Ecosystem of Libraries

PyTorch's ecosystem extends well beyond the core framework. TorchVision provides datasets, model architectures, and image transforms for computer vision tasks. TorchText handled text preprocessing, vocabulary management, and dataset loading for NLP, although its development has stopped and its 0.18 release (2024) is the last. TorchAudio supports audio loading, transformations, and pre-trained models for speech and audio processing.

Hugging Face Transformers, the most popular library for working with pre-trained language models, is built primarily on PyTorch. PyTorch Lightning and the Hugging Face Trainer abstract away boilerplate training code. The torch.onnx exporter converts models to the cross-platform ONNX format, which runtimes such as ONNX Runtime can then execute. This ecosystem means that practitioners rarely need to build common components from scratch.

torch.compile and Performance Optimization

PyTorch 2.0 introduced torch.compile, a function that applies just-in-time compilation to model code. It captures the model's operations as graphs (using TorchDynamo) and compiles them (by default with the TorchInductor backend), applying optimizations like operator fusion, memory planning, and automatic kernel selection. In many cases, wrapping a model in torch.compile produces significant speedups with no changes to the model code itself, though gains vary by model and hardware, and the first call pays a one-time compilation cost.
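A sketch of the one-line opt-in. `build` is a hypothetical helper; its `backend` parameter defaults to `"inductor"` (PyTorch's default compiler, which needs a working C++ toolchain on CPU or Triton on GPU), while `"eager"` only exercises graph capture and is handy for checking that a model compiles at all.

```python
import torch
import torch.nn as nn


def build(backend: str = "inductor") -> tuple[nn.Module, nn.Module]:
    torch.manual_seed(0)
    model = nn.Sequential(nn.Linear(64, 128), nn.GELU(), nn.Linear(128, 10))
    # torch.compile returns a wrapper that shares the original module's parameters;
    # the model code itself is unchanged.
    compiled = torch.compile(model, backend=backend)
    return model, compiled
```

Usage: `model, fast = build()` followed by `fast(torch.randn(32, 64))`. The first call triggers compilation; later calls with compatible input shapes reuse the compiled code, so timing comparisons should skip the first iteration.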

This feature represents PyTorch's approach to closing the performance gap with ahead-of-time compiled frameworks while preserving the dynamic, Pythonic development experience. Combined with mixed-precision training (using float16 or bfloat16 where full precision is unnecessary), these optimizations make PyTorch competitive for both training throughput and inference latency.
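A minimal mixed-precision sketch using `torch.autocast` on the CPU with bfloat16, chosen so it runs without a GPU. Inside the context, eligible ops such as linear layers run in bfloat16, while the parameters and their gradients stay in float32. On CUDA with float16, training code typically also uses a gradient scaler (`torch.amp.GradScaler`) to avoid gradient underflow; that part is not shown.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
model = nn.Linear(256, 256)
x = torch.randn(16, 256)

# Eligible ops run in reduced precision inside the autocast region.
with torch.autocast(device_type="cpu", dtype=torch.bfloat16):
    out = model(x)                      # linear runs in bfloat16
    loss = out.float().pow(2).mean()    # reduce in float32 for a stable loss value

loss.backward()   # gradients accumulate on the float32 parameters
```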

PyTorch Use Cases

Computer Vision

PyTorch is the dominant framework for computer vision research and applications. Image classification, object detection, semantic segmentation, and image generation models are commonly developed and trained in PyTorch. The architectures that underpin these tasks, from convolutional networks such as ResNet and EfficientNet to Vision Transformers, all have reference implementations in the PyTorch ecosystem.

Medical imaging systems, autonomous vehicle perception pipelines, satellite image analysis tools, and industrial quality inspection systems are built on PyTorch-trained models. The framework's flexibility makes it straightforward to experiment with novel architectures and loss functions that these specialized domains often require.

Natural Language Processing

The modern NLP landscape runs largely on PyTorch. Meta's LLaMA models were developed with it, OpenAI standardized on it in 2020, and models originally released in TensorFlow, such as BERT and T5, are now widely used through their PyTorch implementations. The Hugging Face ecosystem, which provides pre-trained models, tokenizers, and fine-tuning utilities, is built primarily on PyTorch and has become the standard toolkit for NLP practitioners.

Tasks like text classification, named entity recognition, machine translation, question answering, and summarization all leverage PyTorch-based transformer model implementations. Organizations fine-tune pre-trained language models on domain-specific data to build chatbots, content generators, document classifiers, and search systems.

Generative AI and GANs

PyTorch is the primary framework behind generative AI development. Diffusion models like Stable Diffusion, which generate images from text descriptions, are implemented in PyTorch. Generative adversarial networks are also widely built with it: NVIDIA's StyleGAN line, whose first versions were written in TensorFlow, moved to PyTorch with StyleGAN2-ADA and StyleGAN3.

The framework's ability to handle unconventional training procedures, such as the alternating generator-discriminator training in GANs or the iterative denoising process in diffusion models, makes it the natural choice for generative modeling research. Audio synthesis, video generation, and 3D content creation systems also rely heavily on PyTorch.
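The alternating GAN procedure mentioned above, sketched on a hypothetical 1-D toy problem (the generator learns to mimic samples from a normal distribution with mean 3 and standard deviation 0.5). Layer sizes, learning rates, and the target distribution are assumptions. The key detail is `fake.detach()` in the discriminator step, which keeps that update from touching the generator.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
latent_dim = 8

generator = nn.Sequential(nn.Linear(latent_dim, 32), nn.ReLU(), nn.Linear(32, 1))
discriminator = nn.Sequential(nn.Linear(1, 32), nn.LeakyReLU(0.2), nn.Linear(32, 1))
bce = nn.BCEWithLogitsLoss()
opt_g = torch.optim.Adam(generator.parameters(), lr=1e-3)
opt_d = torch.optim.Adam(discriminator.parameters(), lr=1e-3)


def train_step(batch_size: int = 64) -> tuple[float, float]:
    real = 3.0 + 0.5 * torch.randn(batch_size, 1)   # hypothetical "real" data
    fake = generator(torch.randn(batch_size, latent_dim))
    ones = torch.ones(batch_size, 1)
    zeros = torch.zeros(batch_size, 1)

    # 1) Discriminator update: real -> 1, fake -> 0; detach() blocks generator gradients.
    d_loss = bce(discriminator(real), ones) + bce(discriminator(fake.detach()), zeros)
    opt_d.zero_grad()
    d_loss.backward()
    opt_d.step()

    # 2) Generator update: push the updated discriminator to label fakes as real.
    g_loss = bce(discriminator(fake), ones)
    opt_g.zero_grad()
    g_loss.backward()
    opt_g.step()
    return d_loss.item(), g_loss.item()
```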

Reinforcement Learning

Reinforcement learning research depends on the ability to define flexible agent architectures that interact with dynamic environments. PyTorch's dynamic computation graphs are well suited to this because RL algorithms often involve variable-length episodes, conditional logic based on agent state, and architectures that adapt during training.
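A sketch of why dynamic graphs suit RL: a REINFORCE (vanilla policy gradient) update where each episode has a different, data-dependent length, so each backward pass runs over a graph of a different size. The environment here is a hypothetical stand-in (random states, reward 1 for action 0, random early termination after action 1), not a real benchmark.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical policy: 4-dim state in, logits over 2 actions out.
policy = nn.Sequential(nn.Linear(4, 32), nn.Tanh(), nn.Linear(32, 2))
optimizer = torch.optim.Adam(policy.parameters(), lr=1e-2)


def run_episode(max_len: int = 50) -> tuple[list[torch.Tensor], list[float]]:
    """Toy environment stand-in with variable episode length."""
    log_probs, rewards = [], []
    for _ in range(max_len):
        state = torch.randn(4)
        action_dist = torch.distributions.Categorical(logits=policy(state))
        action = action_dist.sample()
        log_probs.append(action_dist.log_prob(action))
        rewards.append(1.0 if action.item() == 0 else 0.0)
        if action.item() == 1 and torch.rand(()).item() < 0.3:  # data-dependent stop
            break
    return log_probs, rewards


def reinforce_update(gamma: float = 0.99) -> int:
    log_probs, rewards = run_episode()
    # Discounted returns G_t = r_t + gamma * G_{t+1}, accumulated from the end.
    returns, running = [], 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        returns.append(running)
    returns = torch.tensor(returns[::-1])
    if len(returns) > 1:
        returns = (returns - returns.mean()) / (returns.std() + 1e-8)
    # The graph spans exactly as many steps as this episode had.
    loss = -(torch.stack(log_probs) * returns).sum()
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    return len(rewards)
```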

Libraries like Stable Baselines3, RLlib, and CleanRL build on PyTorch to provide implementations of policy gradient methods, Q-learning variants, and actor-critic algorithms. Applications range from game-playing agents and robotic control systems to recommendation engines and resource optimization.

Scientific Computing and Research

PyTorch has found adoption in scientific domains beyond traditional AI applications. Physics simulations, protein structure prediction (for example OpenFold, a PyTorch reimplementation of the JAX-based AlphaFold 2), climate modeling, and computational chemistry all leverage PyTorch's automatic differentiation and GPU acceleration capabilities.

The framework's autograd engine is useful for any problem that requires computing gradients, not just neural network training. Researchers in optimization, computational physics, and differential equations use PyTorch as a general-purpose differentiable programming platform. This breadth of application reflects the framework's flexibility and the strength of its core tensor and autograd abstractions.
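As an illustration of autograd outside neural networks, this sketch computes physical forces as the negative gradient of a potential energy, F = -dU/dx, for the Lennard-Jones pair potential U(r) = 4ε[(σ/r)^12 - (σ/r)^6]. The choice of potential and the unit values ε = σ = 1 are assumptions for the example; the point is that no force formula is derived by hand.

```python
import torch


def lennard_jones_energy(positions: torch.Tensor, epsilon: float = 1.0,
                         sigma: float = 1.0) -> torch.Tensor:
    """Total pairwise Lennard-Jones energy for an (N, 3) tensor of positions."""
    n = positions.shape[0]
    i, j = torch.triu_indices(n, n, offset=1)          # each unordered pair once
    r = (positions[j] - positions[i]).norm(dim=-1)
    sr6 = (sigma / r) ** 6
    return (4 * epsilon * (sr6 ** 2 - sr6)).sum()


def forces(positions: torch.Tensor) -> torch.Tensor:
    """Force on each particle: negative gradient of the energy w.r.t. its position."""
    positions = positions.detach().requires_grad_(True)
    energy = lennard_jones_energy(positions)
    (grad,) = torch.autograd.grad(energy, positions)
    return -grad
```

Two sanity checks follow from the formula: at the equilibrium distance r = 2^(1/6)σ the force vanishes, and at r = σ the pair repels with magnitude 24ε/σ.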

Challenges and Limitations

Memory Management

PyTorch's autograd graph keeps the intermediate results (activations) that each operation needs for the backward pass, for every tensor that requires gradients. For very large models or long sequences, these saved tensors can consume substantial GPU memory. Out-of-memory errors are among the most common issues PyTorch users encounter, and diagnosing them requires understanding how the framework allocates and retains memory.

Techniques like gradient checkpointing (recomputing intermediate activations during the backward pass instead of storing them), mixed-precision training, and gradient accumulation help manage memory constraints. However, they add complexity to the training pipeline and require the practitioner to make informed trade-offs between memory usage and computation time.
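A sketch of gradient checkpointing with `torch.utils.checkpoint.checkpoint`. `CheckpointedMLP` is a hypothetical model: when checkpointing is on, activations inside each block are discarded after the forward pass and recomputed during backward, trading extra compute for lower memory. The gradients are the same as without checkpointing.

```python
import torch
import torch.nn as nn
from torch.utils.checkpoint import checkpoint


class CheckpointedMLP(nn.Module):
    def __init__(self, width: int = 64, depth: int = 4, use_checkpoint: bool = True):
        super().__init__()
        self.blocks = nn.ModuleList(
            nn.Sequential(nn.Linear(width, width), nn.ReLU()) for _ in range(depth)
        )
        self.use_checkpoint = use_checkpoint

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        for block in self.blocks:
            if self.use_checkpoint and self.training:
                # Don't store this block's activations; recompute them in backward.
                x = checkpoint(block, x, use_reentrant=False)
            else:
                x = block(x)
        return x
```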

Learning Curve for Production Deployment

While PyTorch excels in research and prototyping, moving models to production still requires additional tooling and expertise. Exporting models through TorchScript or ONNX, optimizing inference latency, setting up model serving infrastructure, and managing model versioning are all steps that go beyond writing training code.

The gap between a working research prototype and a production-ready system remains significant. Frameworks like TorchServe, Triton Inference Server, and various MLOps platforms help bridge this gap, but they introduce their own learning curves and operational requirements.

Debugging Distributed Training

Scaling from a single GPU to a distributed training setup introduces new failure modes. Deadlocks from mismatched collective operations, gradient synchronization bugs, and uneven data distribution across workers can be difficult to diagnose. While PyTorch's distributed training APIs are well designed, debugging issues that only appear at scale requires specialized knowledge and tooling.

Ecosystem Fragmentation

The richness of the PyTorch ecosystem is a strength, but it also creates fragmentation. Multiple libraries may solve the same problem with different APIs and conventions. PyTorch Lightning, Hugging Face Trainer, and custom training loops are all valid approaches to model training, but teams must choose among them and maintain consistency. Keeping up with rapid changes in the ecosystem requires continuous attention to library updates and migration paths.

How to Get Started with PyTorch

Learning PyTorch effectively requires foundational knowledge in Python programming and the mathematical concepts that underpin deep learning. Here is a practical path from first installation to building real models.

- Install PyTorch. Visit the official PyTorch website and use the configuration selector to generate the installation command for your operating system, package manager (pip or conda), and hardware (CPU-only or specific CUDA version). A CPU-only installation is sufficient for learning the basics.

- Learn tensor operations. Start by creating tensors, performing arithmetic, reshaping, indexing, and moving data between CPU and GPU. These operations are the building blocks of everything else in the framework. The official PyTorch tutorials cover tensor fundamentals thoroughly.

- Build a simple neural network. Define a model by subclassing nn.Module, write an explicit training loop, and train on a standard dataset like MNIST or CIFAR-10. Focus on understanding the forward pass, loss computation, backward pass, and optimizer step. This four-step cycle is the foundation of all PyTorch training.

- Study existing architectures. Read the source code of established models in TorchVision or Hugging Face Transformers. Understanding how professional implementations use nn.Module composition, weight initialization, and data loading patterns teaches best practices faster than building everything from scratch.

- Experiment with pre-trained models. Use Hugging Face or TorchVision to load a pre-trained model and fine-tune it on your own data. Transfer learning is the most practical entry point for real-world applications because it achieves strong results without massive datasets or compute budgets.

- Explore the ecosystem. Once comfortable with core PyTorch, investigate PyTorch Lightning for reducing boilerplate, Weights & Biases for experiment tracking, and distributed training utilities for scaling beyond a single GPU. Each tool addresses a specific pain point in the model development workflow.

- Join the community. The PyTorch community on GitHub, the PyTorch Discussion Forums, and the annual PyTorch Conference are valuable resources. Reading papers that include PyTorch implementations helps practitioners stay current with new techniques in neural network design and training methodology.
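For the fine-tuning step above, the usual pattern is to freeze the pre-trained weights, attach a new task-specific head, and optimize only the trainable parameters. In practice the backbone might come from something like `torchvision.models.resnet18(weights="DEFAULT")`; to keep this sketch self-contained, a small randomly initialized network stands in for it, and the feature and class counts are hypothetical.

```python
import torch
import torch.nn as nn

# Stand-in for a pre-trained backbone (assumption: real weights would be downloaded).
backbone = nn.Sequential(
    nn.Conv2d(3, 16, kernel_size=3, padding=1), nn.ReLU(),
    nn.AdaptiveAvgPool2d(1), nn.Flatten(),
)
num_features, num_classes = 16, 5   # hypothetical sizes

# 1. Freeze the pre-trained weights.
for param in backbone.parameters():
    param.requires_grad_(False)

# 2. Attach a new, trainable head for the target task.
model = nn.Sequential(backbone, nn.Linear(num_features, num_classes))

# 3. Give the optimizer only the parameters that still require gradients.
optimizer = torch.optim.Adam(
    (p for p in model.parameters() if p.requires_grad), lr=1e-3
)

images = torch.randn(2, 3, 32, 32)
labels = torch.tensor([0, 3])
loss = nn.functional.cross_entropy(model(images), labels)
optimizer.zero_grad()
loss.backward()
optimizer.step()
```

Once the head has converged, some practitioners unfreeze the last backbone layers and continue with a smaller learning rate.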

Practitioners who approach PyTorch as a tool for solving specific problems, rather than an abstract framework to master in isolation, tend to learn faster. Start with a concrete task, build incrementally, and let the needs of the project guide what you learn next.

FAQ

What is the difference between PyTorch and TensorFlow?

PyTorch and TensorFlow are both deep learning frameworks, but they differ in philosophy and developer experience. PyTorch uses dynamic computation graphs and an imperative programming style that feels natural to Python developers. TensorFlow 1.x required static graphs to be defined before execution; TensorFlow 2.x runs eagerly by default and compiles graphs on request with tf.function, so its day-to-day experience is now closer to PyTorch's. PyTorch dominates in research due to its flexibility and debuggability.

TensorFlow maintains a strong presence in production deployment, especially in mobile and edge environments through TensorFlow Lite (rebranded as LiteRT in 2024).

Is PyTorch good for beginners?

PyTorch is widely considered the most accessible deep learning framework for beginners who already know Python. Its API maps closely to the underlying mathematical concepts, and models are written as standard Python classes. The explicit training loop, while requiring more code than a single model.fit() call, makes the training process transparent and easier to understand. Many university deep learning courses now use PyTorch as their teaching framework.

Can PyTorch run on CPUs only?

Yes. PyTorch runs on CPUs without any GPU or CUDA installation. CPU execution is sufficient for learning, small-scale experimentation, and inference with smaller models. However, training large neural network models on CPUs is impractically slow because neural network training involves massive matrix multiplications that GPUs handle orders of magnitude faster.

For serious training workloads, GPU access through local hardware or cloud platforms is effectively required.

What programming language does PyTorch use?

PyTorch's user-facing API is Python, which is what most practitioners interact with. Under the hood, performance-critical operations are implemented in C++ and CUDA for GPU acceleration. The framework also supports a C++ frontend for deployment scenarios where Python is not available or not desired. For the vast majority of use cases, including research, prototyping, and training, Python is the only language you need.

How does PyTorch handle large models that do not fit on a single GPU?

PyTorch provides several strategies for large-model training. Fully Sharded Data Parallel (FSDP) partitions model parameters, gradients, and optimizer states across multiple GPUs so that no single device needs to hold the entire model. Pipeline parallelism splits the model across devices by layer. Tensor parallelism distributes individual layers across GPUs.

These techniques, combined with gradient checkpointing and mixed-precision training, enable training of models with billions of parameters across GPU clusters.

Is PyTorch used in production or only for research?

PyTorch is used extensively in both research and production. Companies like Meta, Microsoft, Tesla, and Uber run PyTorch models in production at massive scale. Tools like TorchServe, TorchScript, and ONNX export enable deployment in serving infrastructure, mobile applications, and embedded devices. The perception that PyTorch is research-only is outdated and no longer reflects the state of the framework or its ecosystem.
