Prompt Chaining: What It Is, How It Works, and Practical Use Cases

Prompt chaining explained: learn what prompt chaining is, how it connects sequential LLM calls, and how to use it for complex AI workflows in practice.

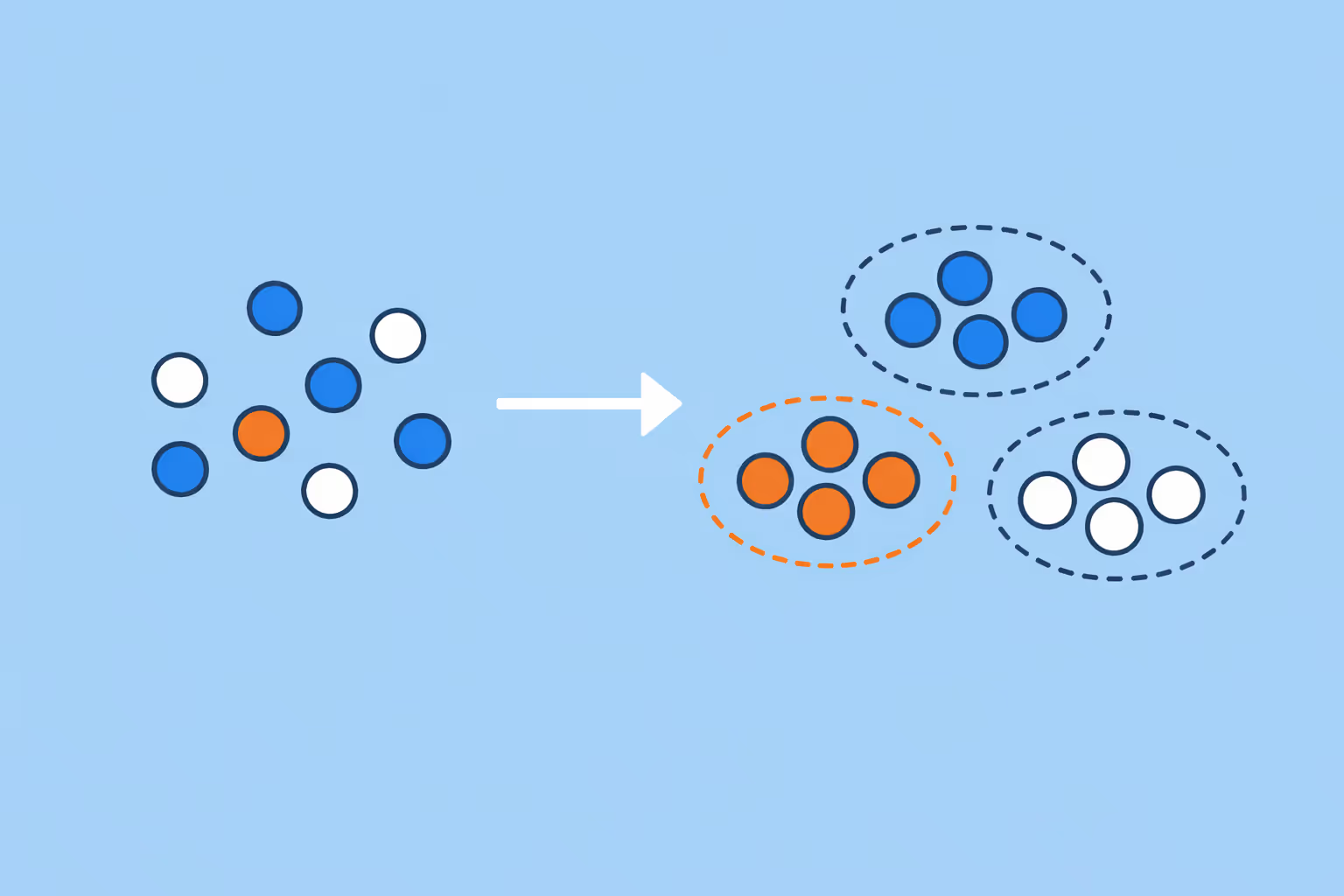

What Is Prompt Chaining?

Prompt chaining is a technique in which the output of one AI prompt is used as the input for the next, creating a sequential pipeline of large language model (LLM) calls that collectively accomplish a complex task. Rather than relying on a single prompt to handle everything at once, prompt chaining breaks a workflow into discrete steps where each step performs a focused subtask and passes its result forward.

The concept draws on a simple principle: most tasks worth automating are too complex for a single prompt to handle reliably. Summarizing a document, translating the summary, then formatting it for a specific audience involves three distinct operations. Prompt chaining structures these operations into a pipeline where each stage receives clean, targeted input and produces a well-defined output.

The result is a system that is more accurate, more controllable, and easier to debug than a monolithic prompt.

Prompt chaining sits at the intersection of prompt engineering and software architecture. It borrows the idea of composability from traditional programming, where small, reliable functions are combined to build complex behavior, and applies it to LLM interactions.

This makes prompt chaining a foundational pattern for anyone building applications powered by generative AI.

The technique is distinct from simply writing a longer prompt. A long prompt asks the model to juggle multiple objectives simultaneously. Prompt chaining separates those objectives into individual calls, each optimized for a single purpose. This separation of concerns is what gives prompt chaining its reliability advantage.

How Prompt Chaining Works

Prompt chaining operates through a structured sequence of LLM calls connected by data flow. Each call in the chain has a defined role, receives specific input, and produces output that feeds into the next step. Understanding the mechanics clarifies why this pattern outperforms single-prompt approaches for complex tasks.

The Basic Pipeline

A prompt chain starts with an initial input, such as a user query, a document, or a data set. The first prompt processes this input and generates an intermediate result. That result becomes part of the input for the second prompt, which refines, transforms, or extends it. The process continues until the final prompt produces the desired output.

Consider a practical example: generating a course outline from a set of learning objectives. The first prompt takes raw learning objectives and organizes them into logical modules. The second prompt takes those modules and writes detailed lesson descriptions for each. The third prompt reviews the entire outline for coherence and suggests revisions. Each step is a standalone AI prompt that does one thing well.
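The course outline example can be sketched as three small functions wired together. This is a minimal illustration, not a reference implementation: `fake_llm` is a hypothetical stand-in that only echoes its prompt so the code runs offline, and the function names and prompt wording are assumptions made for the example.

```python
from typing import Callable

LLM = Callable[[str], str]

# Hypothetical stand-in for a real LLM client. It only echoes part of the
# prompt so the example runs offline; swap in your provider's API call.
def fake_llm(prompt: str) -> str:
    return f"[model output for: {prompt[:40]}...]"

def organize_modules(objectives: str, llm: LLM) -> str:
    # Step 1: raw learning objectives -> logical modules.
    return llm(f"Group these learning objectives into logical modules:\n{objectives}")

def write_lessons(modules: str, llm: LLM) -> str:
    # Step 2: modules -> detailed lesson descriptions.
    return llm(f"Write a detailed lesson description for each module:\n{modules}")

def review_outline(outline: str, llm: LLM) -> str:
    # Step 3: full outline -> coherence review with suggested revisions.
    return llm(f"Review this outline for coherence and suggest revisions:\n{outline}")

def build_course_outline(objectives: str, llm: LLM = fake_llm) -> dict:
    modules = organize_modules(objectives, llm)
    lessons = write_lessons(modules, llm)   # consumes step 1's output
    review = review_outline(lessons, llm)   # consumes step 2's output
    return {"modules": modules, "lessons": lessons, "review": review}
```

Returning every intermediate result, rather than only the final one, keeps each stage inspectable when something goes wrong.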

How Data Flows Between Steps

The connection between prompts is managed by an orchestration layer, which can be as simple as a Python script or as sophisticated as a framework like LangChain. This layer extracts the output from one prompt, optionally processes or validates it, and injects it into the template for the next prompt.

Data flow between steps can follow several patterns. In a linear chain, each step feeds directly into the next. In a branching chain, one step's output may trigger different subsequent prompts depending on the content. In a loop, a step's output is evaluated against criteria, and if it fails, the step is repeated with adjusted instructions. These patterns mirror control flow in traditional software, adapted for LLM interactions.
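The branching and loop patterns map onto ordinary `if` statements and `for` loops. The sketch below assumes the model is passed in as a plain callable; the ticket categories, word limit, and prompt wording are invented for illustration.

```python
from typing import Callable

LLM = Callable[[str], str]

def route(ticket: str, llm: LLM) -> str:
    """Branching: the classifier's output decides which prompt runs next."""
    label = llm(f"Classify this message as 'billing' or 'technical':\n{ticket}")
    if label.strip().lower() == "billing":
        return llm(f"Draft a reply about billing policy for:\n{ticket}")
    return llm(f"Draft troubleshooting steps for:\n{ticket}")

def summarize_with_retry(text: str, llm: LLM, max_words: int = 50,
                         max_attempts: int = 3) -> str:
    """Loop: repeat the step with adjusted instructions until it passes."""
    prompt = f"Summarize in at most {max_words} words:\n{text}"
    for _ in range(max_attempts):
        summary = llm(prompt)
        if len(summary.split()) <= max_words:
            return summary
        # The output failed the check, so tighten the instructions and retry.
        prompt = f"Your summary was too long. Use at most {max_words} words:\n{text}"
    raise ValueError("summary still too long after retries")
```

Capping the number of attempts matters: without it, a step that never satisfies its criteria would loop indefinitely and keep consuming tokens.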

The Role of Prompt Templates

Each step in a chain uses a prompt template, a pre-written structure with placeholders for dynamic content. The orchestration layer fills these placeholders with the output from the previous step. Well-designed templates are critical because they constrain the model's behavior at each stage and ensure consistent formatting across the chain.

A template for step two of a content generation chain might read: "Given the following topic outline: {outline}. Write a 200-word introduction for each section. Use a professional tone. Do not include conclusions." The {outline} placeholder receives the output from step one, and the explicit constraints prevent the model from drifting off task.
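In Python, a template like this can be as simple as a string with a named placeholder. The following sketch uses the built-in `str.format`; the `render` helper and the sample outline are assumptions added for illustration.

```python
STEP_TWO_TEMPLATE = (
    "Given the following topic outline: {outline}. "
    "Write a 200-word introduction for each section. "
    "Use a professional tone. Do not include conclusions."
)

def render(template: str, **values: str) -> str:
    """Fill a template's placeholders; fail loudly if one has no value."""
    try:
        return template.format(**values)
    except KeyError as missing:
        raise ValueError(f"missing value for placeholder {missing}") from None

# Illustrative output from step one, injected into step two's prompt.
step_one_output = "1. What chaining is\n2. How data flows"
prompt = render(STEP_TWO_TEMPLATE, outline=step_one_output)
```

One caveat with `str.format`: literal braces in the template itself (for example an embedded JSON sample) must be doubled as `{{` and `}}`, or a different templating approach should be used.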

Validation and Error Handling

Robust prompt chains include validation between steps. After each LLM call, the orchestration layer checks whether the output meets expected criteria before passing it forward. This might involve verifying that the output is valid JSON, that it falls within a word count range, or that it contains required fields.

When validation fails, the chain can retry the step with a modified prompt, escalate to a human reviewer, or terminate gracefully with an error message. This error handling is what distinguishes a production-grade prompt chain from a quick prototype. It is also the mechanism that makes prompt chaining relevant to LLMOps practices, where monitoring and reliability are essential.
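A validation gate with a retry might look like the sketch below. The required fields, the retry wording, and the function names are hypothetical; the model is again passed in as a plain callable so the logic can be tested without an API.

```python
import json
from typing import Callable

REQUIRED_FIELDS = {"title", "summary"}  # illustrative schema, an assumption

def validate(raw: str) -> dict:
    """Return the parsed output, or raise ValueError describing the problem."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc.msg}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return data

def run_step(prompt: str, llm: Callable[[str], str], max_attempts: int = 2) -> dict:
    """Call the model, validate, and retry with the rejection reason appended."""
    attempt_prompt = prompt
    last_problem = None
    for _ in range(max_attempts):
        try:
            return validate(llm(attempt_prompt))
        except ValueError as problem:
            last_problem = problem
            attempt_prompt = (
                f"{prompt}\n\nYour previous answer was rejected: {problem}. "
                "Return only a JSON object."
            )
    # Terminate gracefully: the caller can log this or escalate to a human.
    raise RuntimeError(f"step failed after {max_attempts} attempts: {last_problem}")
```

Feeding the rejection reason back into the retry prompt is one design choice among several; a chain could equally switch to a stricter template or a different model on the second attempt.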

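| Component | Function | Key Detail |
|---|---|---|
| The Basic Pipeline | Passes an initial input through a sequence of prompts, each building on the previous result. | The initial input can be a user query, a document, or a data set. |
| How Data Flows Between Steps | An orchestration layer extracts each prompt's output and injects it into the next template. | Patterns include linear chains, branching chains, and loops. |
| The Role of Prompt Templates | Pre-written structures with placeholders that are filled with the previous step's output. | Explicit constraints keep the model on task and the formatting consistent. |
| Validation and Error Handling | Checks each output against expected criteria before passing it forward. | On failure, the chain can retry, escalate to a human reviewer, or terminate with an error. |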

Why Prompt Chaining Matters

Prompt chaining addresses fundamental limitations in how large language models handle complex tasks. Its importance grows as organizations move from experimental AI usage to production systems that require consistency, transparency, and control.

Improved Accuracy on Complex Tasks

Single prompts that attempt to perform multiple operations at once often produce degraded results. The model must simultaneously interpret instructions, manage multiple constraints, and maintain coherence across different subtasks. This cognitive juggling leads to errors, omissions, and inconsistencies.

Prompt chaining reduces this problem by giving the model one clear objective per step. A model that only needs to summarize text will generally do so more accurately than one simultaneously asked to summarize, translate, and format. Research in chain-of-thought prompting has shown that breaking tasks into steps improves reasoning quality.

Prompt chaining extends this principle from a single prompt's internal reasoning to the architecture of entire workflows.

Transparency and Debuggability

When a monolithic prompt produces an incorrect output, diagnosing the cause is difficult. You cannot see which part of the instruction the model mishandled. Prompt chaining exposes every intermediate result, making it straightforward to identify where the chain broke down.

If step three of a five-step chain produces poor output, you can inspect the output from step two to determine whether the issue is a bad intermediate result or a bad prompt at step three. This visibility is especially valuable for teams building AI agents that must operate reliably without constant human supervision.

Modularity and Reusability

Each step in a prompt chain is an independent module. A summarization step can be reused across different chains. A translation step can be swapped for a different language pair without modifying the rest of the pipeline. This modularity accelerates development and reduces the cost of maintaining AI workflows.

Modular chains also simplify testing. You can evaluate each step in isolation, measure its accuracy against a test set, and optimize it independently. This is far more manageable than testing a monolithic prompt where changing one instruction might affect the output of every other instruction.

Scalability for Production Systems

Production AI systems rarely solve problems with a single prompt. Customer support automation, content generation pipelines, data analysis workflows, and educational content creation all involve multi-step processes. Prompt chaining provides a principled way to structure these systems, making them easier to build, maintain, and scale.

As the complexity of artificial intelligence applications grows, prompt chaining becomes a necessary architectural pattern rather than an optional optimization. It is the bridge between one-off prompt experiments and sustainable AI-powered products.

Prompt Chaining Use Cases

Prompt chaining applies across industries and task types wherever a workflow requires multiple distinct processing steps. The following use cases illustrate the breadth of its applicability.

Content Creation Pipelines

Content teams use prompt chaining to produce structured, multi-step written content. A typical chain for blog post creation might include: research and outline generation, section drafting, editorial review and tone adjustment, SEO optimization, and metadata generation. Each step receives the accumulated output from previous steps and contributes its specific transformation.

This approach produces more consistent content than asking a model to write a complete article in one pass. It also allows content teams to inject human review at specific checkpoints, combining AI efficiency with editorial judgment.

Data Extraction and Analysis

Prompt chaining excels at processing unstructured data into structured formats. A chain might first extract key entities from a raw document, then classify those entities into categories, then generate a summary report. Each step applies a different analytical lens to the data, producing a final output that no single prompt could achieve reliably.

Organizations that work with large volumes of text, such as research institutions, legal firms, and educational publishers, use prompt chains to convert raw material into usable, structured information. The sequential processing ensures that each analytical step builds on validated intermediate results.

Automated Assessment and Feedback

In education and corporate training, prompt chaining powers automated assessment workflows. A chain might first analyze a student submission against a rubric, then generate detailed feedback for each criterion, then synthesize an overall assessment with recommendations for improvement. This mirrors how experienced instructors evaluate work: criterion by criterion, building toward a holistic judgment.

This use case benefits from prompt chaining's transparency. Instructors can review the intermediate analysis to verify that the AI's reasoning aligns with their standards. The approach also scales well, allowing consistent feedback across hundreds of submissions while maintaining quality. It connects directly to the growing role of agentic AI in educational settings.

Code Generation and Review

Software development teams use prompt chaining for multi-step code tasks. A chain might first parse a feature request into technical requirements, then generate code that satisfies those requirements, then write unit tests for the generated code, then review the code for security vulnerabilities. Each step focuses on one aspect of the development process, producing higher-quality results than a single "write code for this feature" prompt.

Chatbot and Conversational Systems

Production chatbots frequently use prompt chaining behind the scenes. When a user sends a message, a first prompt classifies the intent. A second prompt retrieves relevant context based on that classification. A third prompt generates the response using both the classification and context.

This chain ensures that responses are contextually appropriate and grounded in relevant information, a pattern closely related to retrieval-augmented generation.
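A stripped-down version of this three-step flow is sketched below. It makes several assumptions for illustration: the knowledge base is a hypothetical in-memory dictionary with made-up entries, and the retrieval step is a plain lookup rather than a second prompt, which is a common simplification.

```python
from typing import Callable

# Hypothetical in-memory knowledge base with made-up entries. A real system
# might query a search index or vector store instead.
KNOWLEDGE = {
    "refund": "Refunds are processed within 5 business days.",
    "shipping": "Standard shipping takes 3 to 7 days.",
}

def answer(message: str, llm: Callable[[str], str]) -> str:
    # Step 1: classify the intent.
    intent = llm(
        f"Classify the intent as one of {sorted(KNOWLEDGE)}:\n{message}"
    ).strip().lower()
    # Step 2: retrieve context keyed by the classification.
    context = KNOWLEDGE.get(intent, "")
    # Step 3: generate the reply from both the classification and the context.
    return llm(
        f"Intent: {intent}\nContext: {context or 'none found'}\n"
        f"User message: {message}\n"
        "Write a helpful reply using only the context."
    )
```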

Translation and Localization Workflows

Prompt chaining handles multi-step translation tasks that go beyond word-for-word conversion. A chain might first translate the content, then adapt cultural references for the target locale, then verify terminology consistency with a glossary, then format the output for the target platform.

This produces localized content that reads naturally rather than sounding machine-translated, leveraging capabilities rooted in natural language processing.

Challenges and Limitations

Prompt chaining introduces significant advantages, but it also comes with trade-offs that practitioners need to understand before adopting it in production.

Error Propagation

The sequential nature of prompt chaining means that an error in an early step propagates through the entire chain. If step one produces an inaccurate summary, every subsequent step builds on flawed input. Unlike parallel systems where errors are isolated, a chain amplifies early mistakes.

Mitigation requires validation gates between steps. These gates check output quality before allowing the chain to proceed. Designing effective validation is itself a challenge, since it often requires domain expertise to define what constitutes acceptable output at each stage.

Increased Latency

Each step in a prompt chain involves a separate API call to an LLM, and each call incurs latency. When steps run sequentially, total latency is roughly the sum of the individual calls, so a five-step chain can take about five times as long as a single comparable prompt. For applications that require real-time responses, such as live chat or interactive tools, this latency can be prohibitive.

Some chains can be partially parallelized when steps do not depend on each other. Identifying and exploiting these parallelization opportunities requires careful analysis of data dependencies within the chain.
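As a rough illustration, two steps that both depend only on the source document can run concurrently, with a final step waiting on both. The sketch uses a thread pool from the standard library, which suits I/O-bound API calls; the step names and prompts are assumptions, and the model is a plain callable.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

LLM = Callable[[str], str]

def run_parallel(prompts: dict[str, str], llm: LLM, max_workers: int = 4) -> dict[str, str]:
    """Run independent prompts concurrently and return results keyed by name."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(llm, prompt) for name, prompt in prompts.items()}
        # result() blocks until that call finishes and re-raises any exception.
        return {name: future.result() for name, future in futures.items()}

def summarize_and_tag(document: str, llm: LLM) -> str:
    # These two steps depend only on the document, so they can overlap.
    partial = run_parallel(
        {
            "summary": f"Summarize:\n{document}",
            "keywords": f"List five keywords:\n{document}",
        },
        llm,
    )
    # The final step depends on both results, so it runs afterwards.
    return llm(
        f"Write a teaser using this summary:\n{partial['summary']}\n"
        f"and these keywords:\n{partial['keywords']}"
    )
```

With this layout the chain's latency is closer to the slower of the two parallel calls plus the final call, rather than the sum of all three.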

Token Cost Accumulation

Prompt chaining typically consumes more tokens than single-prompt approaches. Each step includes its own system prompt, instructions, and the output from the previous step as context. For chains with many steps or large intermediate outputs, the cumulative token cost can be substantial. Teams using hosted models from OpenAI or other providers need to factor this cost into their planning.

Managing cost requires optimizing each step's prompt length, trimming unnecessary context before passing it forward, and choosing the right model size for each step. Not every step requires the most capable model. Simpler steps like formatting or classification can often use smaller, cheaper models without sacrificing quality.
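A back-of-the-envelope estimate makes the trade-off concrete. In the sketch below, the model tiers, token counts, and prices are placeholders chosen purely for illustration; real prices vary by provider and change over time.

```python
from dataclasses import dataclass

@dataclass
class Step:
    name: str
    model: str          # key into PRICE_PER_1K below
    input_tokens: int
    output_tokens: int

# Placeholder prices in USD per 1,000 tokens. These are NOT real prices.
PRICE_PER_1K = {
    "small": {"input": 0.001, "output": 0.002},
    "large": {"input": 0.01, "output": 0.03},
}

def step_cost(step: Step) -> float:
    price = PRICE_PER_1K[step.model]
    return (step.input_tokens * price["input"]
            + step.output_tokens * price["output"]) / 1000

def chain_cost(steps: list[Step]) -> float:
    return sum(step_cost(step) for step in steps)

# A cheap model classifies; the capable model is reserved for drafting.
steps = [
    Step("classify", "small", input_tokens=500, output_tokens=10),
    Step("draft", "large", input_tokens=1500, output_tokens=800),
]
total = chain_cost(steps)
```

Running the same estimate with every step assigned to the larger model shows how much a per-step model choice saves for a given workload.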

Complexity of Orchestration

Building and maintaining prompt chains requires software engineering skills that go beyond writing good prompts. The orchestration layer must handle data flow, error recovery, logging, and monitoring. As chains grow in complexity, the engineering overhead can rival that of traditional software systems.

Frameworks like LangChain reduce this overhead by providing pre-built components for common orchestration patterns. However, teams still need to understand the underlying architecture to debug issues and optimize performance. This is a practical consideration for organizations evaluating their machine learning infrastructure investments.

Context Window Constraints

Each step in a chain operates within the model's context window limit. As chains grow longer and intermediate outputs accumulate, the combined context may exceed the window size. When this happens, earlier context must be truncated or summarized, potentially losing important information.

Effective chain design accounts for context window limits by keeping intermediate outputs concise and summarizing accumulated context at strategic points. This is especially relevant when working with transformer model architectures, which have fixed context window sizes that directly constrain how much information can flow through the chain.
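One way to keep accumulated context within budget is to keep the most recent outputs verbatim and compress the older ones. The sketch below is an assumption-laden illustration: it approximates tokens by counting whitespace-separated words, whereas a real chain would use the tokenizer that matches its model, and `summarize` stands in for a separate summarization call.

```python
from typing import Callable

def approx_tokens(text: str) -> int:
    """Crude estimate: one token per whitespace-separated word (an assumption)."""
    return len(text.split())

def fit_context(parts: list[str], budget: int,
                summarize: Callable[[str], str]) -> list[str]:
    """Keep recent outputs verbatim; replace older ones with a single digest."""
    if sum(approx_tokens(part) for part in parts) <= budget:
        return parts
    kept: list[str] = []
    used = 0
    # Walk backwards so the most recent outputs are preserved first.
    for part in reversed(parts):
        cost = approx_tokens(part)
        if used + cost > budget:
            break
        kept.append(part)
        used += cost
    older = parts[: len(parts) - len(kept)]
    # The digest's own length is not counted against the budget here, so the
    # summarization prompt should ask for a short result.
    digest = summarize("\n".join(older))
    return [digest] + kept[::-1]
```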

How to Get Started with Prompt Chaining

Implementing prompt chaining does not require advanced infrastructure. You can start with simple tools and progressively increase sophistication as your needs grow.

Step 1: Identify a Multi-Step Task

Start by selecting a task you currently accomplish with a single, long prompt that produces inconsistent results. Look for tasks that naturally decompose into subtasks, where each subtask has a clear input and output. Content creation, data processing, and evaluation workflows are common starting points.

Write down each subtask in order. If the task has three or more distinct operations, it is a candidate for prompt chaining. If it only has one or two, a single well-crafted prompt with chain-of-thought prompting techniques may be sufficient.

Step 2: Design Individual Prompts

Write a separate prompt for each subtask. Each prompt should have a single, clear objective. Include explicit instructions about the expected output format, since the next step in the chain depends on receiving consistently structured input.

Test each prompt in isolation before connecting them. Feed it representative inputs and verify that the outputs are usable by the next step. This isolated testing catches issues early and prevents cascading failures when the chain runs end to end. Strong prompt engineering practices at each step are the foundation of a reliable chain.

Step 3: Build the Orchestration Layer

For simple chains, a Python script that calls an LLM API in sequence is sufficient. Store each prompt as a template string with placeholders. After each API call, extract the response, optionally validate it, and insert it into the next template.

For more complex chains, use a framework. LangChain provides built-in support for sequential chains, conditional routing, and integration with external tools. Other options include custom orchestration with libraries that manage API calls, retries, and logging.
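For the simple case, the whole orchestration layer can fit in one function. The sketch below assumes each template contains a single `{input}` placeholder and that the model is supplied as a callable; both conventions are choices made for this example rather than requirements.

```python
from typing import Callable, Optional

def run_chain(
    templates: list[str],
    initial_input: str,
    llm: Callable[[str], str],
    validate: Optional[Callable[[str], bool]] = None,
) -> list[str]:
    """Run templates in order, feeding each response into the next template.

    Returns every step's output so intermediate results can be inspected.
    """
    outputs: list[str] = []
    current = initial_input
    for index, template in enumerate(templates, start=1):
        response = llm(template.format(input=current)).strip()
        if validate is not None and not validate(response):
            raise ValueError(f"step {index} produced unusable output")
        outputs.append(response)
        current = response
    return outputs
```

A call such as `run_chain(["Summarize: {input}", "Translate to French: {input}"], document, llm, validate=bool)` would run two steps and reject any empty response, where `document` and `llm` are supplied by the caller.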

Step 4: Add Validation Between Steps

Define acceptance criteria for each step's output. At minimum, check that the output is non-empty and in the expected format. For higher-stakes applications, add content-level validation: does the summary actually cover the key points? Does the classification fall into one of the expected categories?

Validation can be rule-based (checking for required JSON fields) or model-based (using a separate LLM call to evaluate quality). Model-based validation adds cost but provides more nuanced quality control for steps where rule-based checks are insufficient.
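The two styles of check can sit side by side. In this sketch the category labels and the judge prompt are illustrative assumptions, and `judge` is any callable that wraps a second model call.

```python
from typing import Callable

ALLOWED_CATEGORIES = {"billing", "technical", "other"}  # illustrative labels

def rule_based_check(label: str) -> bool:
    """Cheap, deterministic check: is the label one of the expected categories?"""
    return label.strip().lower() in ALLOWED_CATEGORIES

def model_based_check(source: str, summary: str, judge: Callable[[str], str]) -> bool:
    """Costlier check: ask a separate model call to grade the summary."""
    verdict = judge(
        "Does the summary cover the key points of the source? Answer YES or NO.\n"
        f"Source:\n{source}\n\nSummary:\n{summary}"
    )
    return verdict.strip().upper().startswith("YES")
```

Constraining the judge to a YES or NO answer keeps its output easy to parse; a free-form critique would need its own validation.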

Step 5: Monitor and Iterate

Log every step's input and output. When the chain produces poor final results, use these logs to trace the problem back to a specific step. Refine the prompt for that step, retest, and redeploy. Over time, each step stabilizes and the chain's overall reliability improves.

Track metrics that matter for your use case: end-to-end latency, token cost per run, accuracy of final output, and failure rate at each step. These metrics guide optimization decisions, such as where to invest in better prompts, where to add caching, and where to substitute a cheaper model. This iterative approach aligns with LLMOps best practices for managing AI systems in production.
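A small wrapper is enough to capture per-step inputs, outputs, timings, and failures. The class and field names below are hypothetical, and a production system would more likely write these records to a logging or tracing backend than keep them in memory.

```python
import time
from collections import Counter
from typing import Any, Callable, Optional

class StepLog:
    """Records input, output, duration, and errors for every step that runs."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def run(self, name: str, fn: Callable[[Any], Any], step_input: Any) -> Any:
        start = time.perf_counter()
        try:
            output = fn(step_input)
        except Exception as exc:
            self._record(name, step_input, None, start, error=str(exc))
            raise  # let the caller decide whether to retry or abort
        self._record(name, step_input, output, start, error=None)
        return output

    def _record(self, name: str, step_input: Any, output: Any,
                start: float, error: Optional[str]) -> None:
        self.records.append({
            "step": name,
            "input": step_input,
            "output": output,
            "seconds": time.perf_counter() - start,
            "error": error,
        })

    def failure_rates(self) -> dict[str, float]:
        runs = Counter(r["step"] for r in self.records)
        failures = Counter(r["step"] for r in self.records if r["error"] is not None)
        return {step: failures[step] / runs[step] for step in runs}
```

Wrapping each step as `log.run("summarize", summarize_fn, text)` leaves the step functions themselves unchanged, so monitoring can be added or removed without touching the prompts.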

Step 6: Scale Gradually

Start with a linear chain of two or three steps. Once that works reliably, add branching logic, parallel execution, or feedback loops as needed. Resist the temptation to build a complex chain from the outset. Simplicity at each stage makes the system easier to understand, debug, and maintain.

As your chains mature, consider how they integrate with broader systems. Prompt chains often become components within larger AI agent architectures, where the chain handles a specific capability and the agent decides when to invoke it. Solutions powered by platforms like ChatGPT Enterprise increasingly support these orchestrated workflows out of the box.

FAQ

What is the difference between prompt chaining and chain-of-thought prompting?

Prompt chaining connects multiple separate LLM calls in a sequence, where each call performs a distinct subtask. Chain-of-thought prompting is a technique within a single prompt that encourages the model to reason step by step before answering. Prompt chaining operates at the workflow level, structuring how multiple prompts interact.

Chain-of-thought prompting operates at the prompt level, structuring how a single prompt generates its response. The two techniques are complementary and are often used together.

Do I need a framework like LangChain to use prompt chaining?

No. You can implement prompt chaining with basic scripting in any programming language that supports API calls. A simple loop that calls an LLM API, captures the response, and feeds it into the next prompt template is a valid prompt chain. Frameworks like LangChain provide convenience features such as built-in error handling, memory management, and tool integration, but they are not required.

Start simple and adopt a framework when your chains become complex enough to justify the additional dependency.

How many steps should a prompt chain have?

There is no fixed rule, but practical chains typically range from two to seven steps. Each step should correspond to a meaningful subtask. If a step does not transform the data in a significant way, it can usually be merged with the adjacent step. Chains with more than ten steps tend to accumulate latency and token costs that may outweigh the accuracy benefits.

Optimize by starting with the minimum number of steps that produce acceptable quality, then add steps only where they demonstrably improve the output.

Can prompt chaining work with different models at different steps?

Yes. One of the advantages of prompt chaining is that you can assign different models to different steps based on their requirements. A complex reasoning step might use a large model such as GPT-4 or its successors, while a simpler formatting step can use a smaller, faster, and cheaper model. This mixed-model approach optimizes both cost and performance across the chain.

How does prompt chaining relate to AI agents?

AI agents use prompt chaining as one of their core mechanisms. An agent typically decides which action to take, executes that action (often via a prompt chain), observes the result, and decides the next action. Prompt chaining provides the execution layer where each action is broken into reliable subtasks.

In agentic AI systems, chains are invoked dynamically based on the agent's planning decisions, making prompt chaining a building block of autonomous AI behavior.

Is prompt chaining suitable for real-time applications?

Prompt chaining introduces latency because each step requires a separate LLM call. For real-time applications that need responses within milliseconds, this latency can be a limitation. However, many near-real-time applications (response within a few seconds) can accommodate short chains of two or three steps.

Strategies like parallel execution of independent steps, caching of common intermediate results, and using faster models for simpler steps can reduce latency to acceptable levels for most interactive use cases.
