Home What Is a Neural Network? How It Works, Types, and Use Cases

What Is a Neural Network? How It Works, Types, and Use Cases

A neural network is a computing system modeled on the human brain. Learn how neural networks work, explore key types and architectures, and discover real-world applications.

What Is a Neural Network?

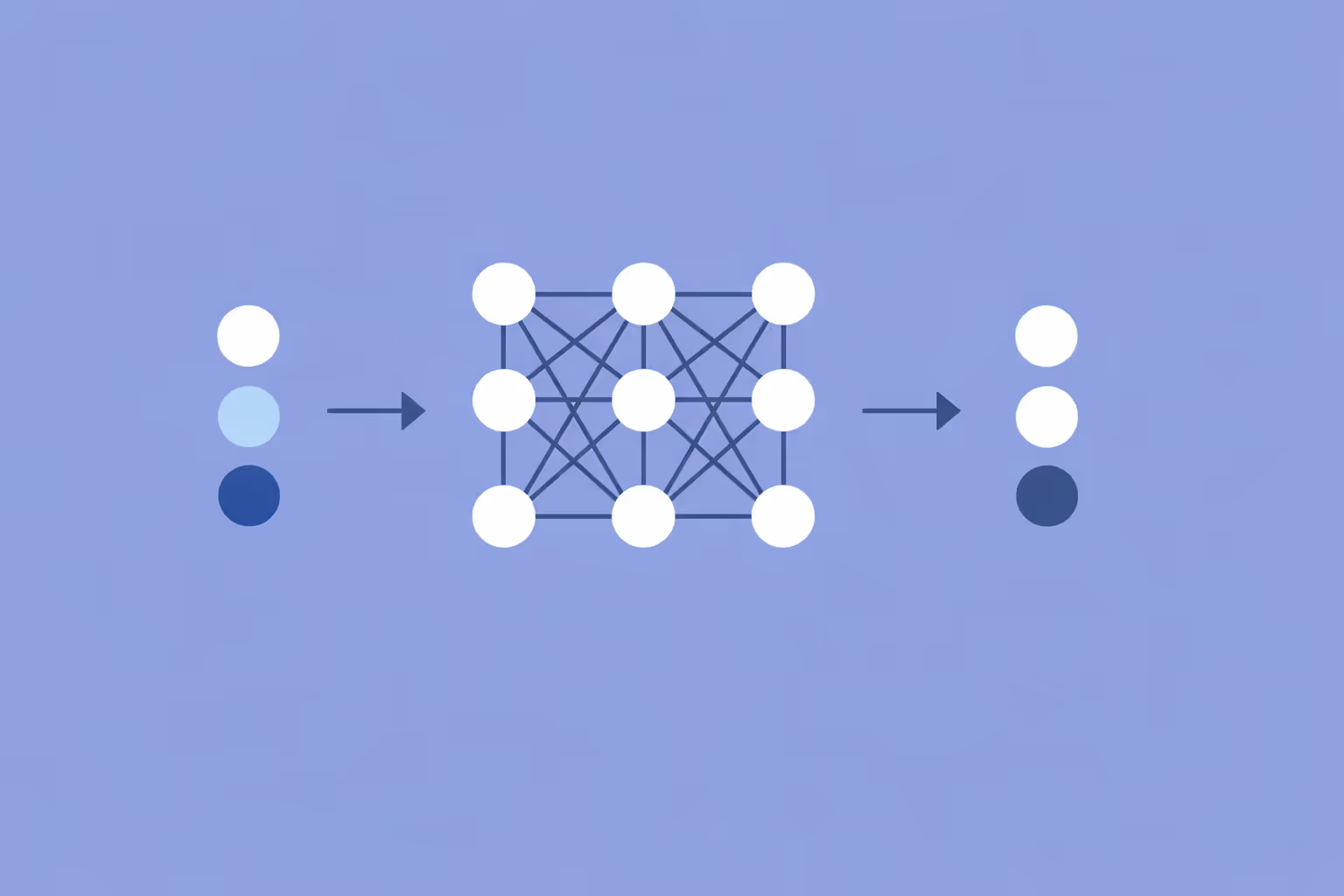

A neural network is a computational model inspired by the structure of the human brain. It consists of interconnected nodes, called neurons, organized into layers that process data and learn patterns through experience. Neural networks form the foundation of most modern artificial intelligence systems, from image classifiers to large language models.

Each neuron receives one or more inputs, applies a mathematical function to those inputs, and produces an output that feeds into the next layer. During training, the network adjusts the strength of connections between neurons to minimize the difference between its predictions and the correct answers. This process of iterative adjustment is what allows a neural network to learn from data rather than following explicitly programmed rules.

The concept dates back to the 1940s with the McCulloch-Pitts neuron model, but practical neural networks only became viable with the availability of large datasets and powerful hardware. The basic unit of computation, the artificial neuron, loosely mirrors biological neurons in that it aggregates signals, applies a threshold, and transmits an output. However, the analogy is limited.

Artificial neural networks are mathematical constructs optimized for pattern recognition, not biological simulations.

Neural networks are the core technology behind deep learning, machine learning, and a wide range of applied AI systems. Understanding how they work is essential for anyone working in data science, engineering, or AI strategy.

How Neural Networks Work

Layers and Neurons

A neural network is organized into three types of layers. The input layer receives raw data, such as pixel values from an image or numerical features from a dataset. One or more hidden layers perform mathematical transformations on that data. The output layer produces the final result, whether that is a classification label, a probability score, or a continuous value.

Each neuron in a hidden layer computes a weighted sum of its inputs, adds a bias term, and passes the result through an activation function. The weights determine how much each input contributes to the neuron's output. The bias allows the neuron to shift its activation threshold. Together, weights and biases are the learnable parameters that the network adjusts during training.

Activation Functions

Activation functions introduce non-linearity into the network. Without them, a neural network of any depth would behave identically to a single-layer linear regression model, regardless of how many layers it contains. Non-linearity is what allows neural networks to approximate complex, non-linear relationships in data.

The most common activation function is ReLU (Rectified Linear Unit), which outputs the input directly if it is positive and zero otherwise. Sigmoid functions compress outputs to a range between 0 and 1, making them useful for binary classification. Softmax extends this to multi-class problems by producing a probability distribution across all possible output classes.

Forward Propagation

During forward propagation, data flows from the input layer through each hidden layer to the output. At every layer, the network multiplies the input vector by a weight matrix, adds the bias vector, and applies the activation function. The output layer then produces a prediction.

The prediction is compared to the known target using a loss function. Common loss functions include cross-entropy for classification tasks and mean squared error for regression. The loss quantifies how wrong the prediction is. The objective of training is to make this loss as small as possible across the full training dataset.

Backpropagation and Training

Backpropagation is the algorithm that enables neural networks to learn. After computing the loss, backpropagation calculates the gradient of the loss with respect to every weight in the network. It does this by applying the chain rule of calculus, propagating the error signal backward from the output layer through each hidden layer to the input.

Once the gradients are computed, gradient descent updates each weight by subtracting a fraction of its gradient, scaled by a value called the learning rate. A learning rate that is too large causes the network to overshoot optimal weight values. A learning rate that is too small makes training prohibitively slow.

Modern neural networks use optimized variants of gradient descent, such as Adam, RMSprop, and SGD with momentum. These optimizers adapt the learning rate for each parameter individually, which leads to faster and more stable convergence. Training proceeds over multiple epochs, with each epoch representing one complete pass through the training data.

Regularization

Overfitting occurs when a neural network memorizes the training data instead of learning generalizable patterns. Several techniques address this problem. Dropout regularization randomly deactivates a fraction of neurons during each training step, forcing the network to distribute learned features across multiple pathways. L2 regularization penalizes large weight values, encouraging the network to find simpler solutions. Early stopping halts training when performance on a validation set stops improving.

Types of Neural Networks

Neural networks come in many architectures, each designed for specific data types and problem domains.

Feedforward Neural Networks

Feedforward networks are the simplest architecture. Data flows in one direction, from input to output, with no cycles or loops. They are effective for tabular data problems like classification and regression, where the input features are structured and independent.

A feedforward network with one or two hidden layers can approximate any continuous function given enough neurons, a property known as the universal approximation theorem. However, this theoretical capability does not guarantee practical performance. Architecture design, training data quality, and optimization choices all affect real-world results.

Convolutional Neural Networks (CNNs)

Convolutional neural networks are built for grid-structured data, primarily images and video. They use convolutional layers that slide small filters across the input to detect local patterns such as edges, textures, and shapes. Pooling layers reduce spatial dimensions while preserving the most salient features.

The key innovation of CNNs is parameter sharing. A single filter learns to detect a specific pattern and applies that detection across the entire input. This makes CNNs far more parameter-efficient than fully connected networks for image tasks and gives them translational invariance, meaning they can recognize an object regardless of its position in the image.

CNNs are the backbone of medical imaging diagnostics, autonomous vehicle perception, facial recognition, and satellite imagery analysis.

Recurrent Neural Networks (RNNs)

Recurrent neural networks are designed for sequential data. Unlike feedforward networks, RNNs maintain a hidden state that carries information from one time step to the next. This makes them suitable for time series forecasting, speech recognition, and language modeling.

Standard RNNs suffer from the vanishing gradient problem, where gradients shrink exponentially as they propagate through long sequences. Long Short-Term Memory (LSTM) networks address this with gating mechanisms that control what information to retain, discard, or output at each step. Gated Recurrent Units (GRUs) provide a simpler alternative with comparable results in many settings.

Transformer Networks

Transformer models replaced recurrence with self-attention, a mechanism that allows every element in a sequence to attend to every other element simultaneously. This eliminates the sequential processing bottleneck of RNNs and enables massive parallelization during training.

Transformers are the architecture behind large language models like GPT and BERT, as well as vision transformers (ViT) for image classification. Their ability to capture long-range dependencies and scale to billions of parameters has made them the dominant architecture in natural language processing and an increasingly important one in computer vision, audio processing, and multimodal AI.

Graph Neural Networks (GNNs)

Graph neural networks operate on graph-structured data, where entities are represented as nodes and their relationships as edges. GNNs propagate information along edges, allowing each node to aggregate features from its neighbors.

This architecture is used in social network analysis, molecular property prediction, recommendation systems, and fraud detection. Any problem where the data is naturally relational, rather than tabular or sequential, is a candidate for graph neural networks.

Kolmogorov-Arnold Networks (KANs)

Kolmogorov-Arnold networks represent a newer approach that places learnable activation functions on the edges of the network rather than on the nodes. This architectural shift is grounded in the Kolmogorov-Arnold representation theorem and offers improved interpretability compared to traditional multilayer perceptrons.

KANs are an active area of research, with early results suggesting they can achieve competitive accuracy with fewer parameters in certain scientific computing and function approximation tasks.

| Type | Description | Best For |

|---|---|---|

| Feedforward Neural Networks | Feedforward networks are the simplest architecture. | Tabular data problems like classification and regression |

| Convolutional Neural Networks (CNNs) | Convolutional neural networks are built for grid-structured data. | Edges, textures, and shapes |

| Recurrent Neural Networks (RNNs) | Recurrent neural networks are designed for sequential data. | Sequential data |

| Transformer Networks | Transformer models replaced recurrence with self-attention. | — |

| Graph Neural Networks (GNNs) | Graph neural networks operate on graph-structured data. | Social network analysis, molecular property prediction |

| Kolmogorov-Arnold Networks (KANs) | Kolmogorov-Arnold networks represent a newer approach that places learnable activation. | KANs are an active area of research |

Neural Network Use Cases

Computer Vision

Neural networks power nearly all modern computer vision systems. CNNs classify images, detect and localize objects within scenes, segment images at the pixel level, and generate realistic synthetic images. Applications range from quality inspection in manufacturing to real-time video analysis in security systems.

Medical imaging represents one of the highest-impact applications. Neural networks trained on labeled scans detect conditions like diabetic retinopathy, lung cancer, and cardiovascular disease with accuracy that matches or exceeds specialist physicians. These systems augment clinical decision-making rather than replace it.

Natural Language Processing

Transformer-based neural networks have redefined what is possible in natural language processing. They perform machine translation, text summarization, question answering, and sentiment analysis at scales and accuracy levels that earlier approaches could not achieve.

Pre-trained language models serve as the foundation for enterprise applications including contract analysis, customer support automation, and content generation. Organizations building supervised learning pipelines for text classification rely on neural network architectures to extract meaningful features from unstructured text.

Speech and Audio

Neural networks convert speech to text, synthesize speech from text, and identify speakers from voice samples. Modern speech recognition systems use a combination of convolutional, recurrent, and transformer layers to process raw audio waveforms.

Voice assistants, real-time transcription services, and accessibility tools for hearing-impaired users all depend on neural network models. Music generation and audio enhancement systems use similar architectures to analyze and produce sound.

Autonomous Systems and Robotics

Self-driving vehicles use neural networks to perceive their environment, combining object detection from camera feeds with point cloud processing from lidar. Reinforcement learning trains agents to make sequential decisions by maximizing long-term rewards, enabling robots to learn manipulation tasks, locomotion, and navigation through simulated or real-world practice.

Healthcare and Drug Discovery

Beyond diagnostic imaging, neural networks accelerate pharmaceutical research. They predict molecular properties, simulate protein folding, identify promising drug candidates, and forecast clinical trial outcomes. Graph neural networks are particularly well suited for molecular modeling, where atoms are nodes and chemical bonds are edges.

Education and Adaptive Learning

Neural networks enable adaptive learning platforms that personalize instruction based on real-time learner performance. NLP models evaluate written responses and provide formative feedback. Recommendation systems suggest learning pathways tailored to individual strengths and weaknesses. Speech recognition supports language learning tools that assess pronunciation accuracy.

Finance

Financial institutions apply neural networks to fraud detection, credit scoring, algorithmic trading, and risk modeling. Unsupervised learning techniques like autoencoders learn the distribution of legitimate transactions and flag anomalies in real time, processing millions of events with low false-positive rates.

Challenges and Limitations

Data Requirements

Neural networks are data-intensive. Training a competitive model often requires thousands to millions of labeled examples. In specialized domains like radiology or rare-event detection, acquiring sufficient labeled data is expensive and time-consuming. Biased or unrepresentative training data leads to models that generalize poorly or encode societal disparities.

Transfer learning mitigates this by fine-tuning a model pre-trained on a large general dataset for a smaller domain-specific task. This approach reduces data requirements but does not eliminate the need for high-quality, representative samples.

Computational Cost

Training large neural networks demands substantial computational resources. State-of-the-art language models can require thousands of GPUs running for weeks, with significant energy consumption and associated costs.

Specialized hardware such as neural net processors and neuromorphic computing chips aim to make neural network training and inference more efficient.

Inference costs matter in production deployments. Serving a large model to millions of users requires infrastructure that scales dynamically. Techniques like quantization, pruning, and knowledge distillation reduce model size and latency, but they trade some accuracy for efficiency.

Interpretability

Neural networks are often described as black boxes. A trained network distributes its learned logic across millions of parameters, making it difficult to explain why a specific input produced a specific output. This opacity is a serious concern in regulated industries such as healthcare, finance, and criminal justice, where decisions must be explainable and auditable.

Research into explainability has produced methods like SHAP values, LIME, and attention visualization. These tools provide useful approximations but fall short of complete, formal explanations. Improving interpretability without sacrificing performance remains an open research challenge.

Overfitting and Generalization

A neural network that achieves near-perfect accuracy on training data but performs poorly on new, unseen data has overfit. This is one of the most common failure modes, particularly when the training set is small relative to the number of parameters. Regularization techniques help, but they require careful tuning and do not guarantee generalization.

Adversarial Vulnerability

Neural networks are susceptible to adversarial examples, where imperceptible modifications to input data cause confident but incorrect predictions. An image classifier might misidentify a stop sign after tiny pixel-level perturbations that are invisible to the human eye. Defending against adversarial attacks is an active research area with no complete solution.

How to Build a Neural Network

Building a neural network involves a systematic process that spans problem definition, data preparation, model design, training, and evaluation.

Step 1: Define the Problem

Determine whether the task is classification, regression, generation, or something else. The problem type determines the output layer configuration, the loss function, and the evaluation metrics.

Step 2: Collect and Prepare Data

Gather a representative dataset and split it into training, validation, and test sets. Preprocess the data by normalizing numerical features, encoding categorical variables, and handling missing values. For image data, apply augmentations like rotation, cropping, and flipping to increase effective dataset size.

Step 3: Choose an Architecture

Select a network architecture that matches the data type and problem structure. Use feedforward networks for tabular data, CNNs for images, RNNs or transformers for sequences, and GNNs for graph data. Starting with a proven baseline architecture is more effective than designing from scratch.

Step 4: Select a Framework

PyTorch and TensorFlow are the two dominant deep learning frameworks. PyTorch is widely preferred in research for its dynamic computation graph and intuitive debugging. TensorFlow, with its Keras API, is commonly used in production. Both provide pre-built layers, loss functions, optimizers, and data loading utilities.

Step 5: Train the Model

Configure the optimizer, learning rate, and batch size. Train the network by iterating over the training data for multiple epochs, monitoring the loss on both the training and validation sets. Use early stopping to prevent overfitting and learning rate schedulers to adjust the learning rate as training progresses.

Step 6: Evaluate and Iterate

Assess the model on the held-out test set using appropriate metrics: accuracy, precision, recall, F1 score for classification; mean absolute error or root mean squared error for regression. Analyze errors to identify systematic failure patterns. Iterate by adjusting the architecture, hyperparameters, or data pipeline based on what the evaluation reveals.

Step 7: Deploy

Move the trained model into a production environment. This typically involves exporting the model, setting up an inference server, and integrating it with the application. Monitor performance in production to detect data drift, latency issues, or accuracy degradation over time.

FAQ

What is the difference between a neural network and deep learning?

A neural network is the computational architecture consisting of layers of interconnected neurons. Deep learning is a subset of machine learning that uses neural networks with many hidden layers. A neural network with one or two hidden layers is a shallow network. When the number of hidden layers increases significantly, the model qualifies as a deep learning system. All deep learning models are neural networks, but not all neural networks are deep.

How many layers does a neural network need?

There is no universal answer. A simple classification task on tabular data might only need one or two hidden layers. Complex tasks like image recognition or natural language understanding typically require dozens to hundreds of layers. The right number of layers depends on the complexity of the problem, the amount of available data, and the computational budget.

Can neural networks learn without labeled data?

Yes. Unsupervised learning methods like autoencoders learn to represent data without labels. Self-supervised learning, used in pre-training models like BERT and GPT, creates training signals from the data itself. Reinforcement learning trains networks through rewards and penalties rather than labeled examples. The choice of learning paradigm depends on the task and the data available.

What hardware is best for training neural networks?

GPUs are the standard for neural network training because they handle the parallel matrix operations that neural networks require. NVIDIA GPUs with CUDA support are the most widely used. For very large models, specialized hardware like Google TPUs or custom neural net processors provide additional efficiency. Cloud platforms offer on-demand access to this hardware without requiring capital investment.

Are neural networks the same as the human brain?

No. Neural networks are inspired by biological neural systems, but the resemblance is superficial. Biological neurons communicate through electrochemical signals with complex temporal dynamics. Artificial neurons perform simple mathematical operations. The brain contains roughly 86 billion neurons with trillions of synapses operating in parallel. Even the largest artificial neural networks are orders of magnitude simpler. The "neural" in neural network is a metaphor, not a description.

Further reading

Cognitive Search: Definition and Enterprise Examples

Learn what cognitive search is, how it differs from keyword search, its core components, and real enterprise examples across industries.

Artificial General Intelligence (AGI): What It Is and Why It Matters

Artificial general intelligence (AGI) refers to AI that matches human-level reasoning across any domain. Learn what AGI is, how it differs from narrow AI, and why it matters.

Generative Model: How It Works, Types, and Use Cases

Learn what a generative model is, how it learns to produce new data, and where it is applied. Explore types like GANs, VAEs, diffusion models, and transformers.

Graph Neural Networks (GNNs): How They Work, Types, and Practical Applications

Learn what graph neural networks are, how GNNs process graph-structured data through message passing, their main types, real-world use cases, and how to get started.

AI Winter: What It Was and Why It Happened

Learn what the AI winter was, why AI funding collapsed twice, the structural causes behind each period, and what today's AI landscape can learn from the pattern.

Machine Teaching: How Humans Guide AI to Learn Faster

Machine teaching is the practice of designing optimal training data and curricula so AI models learn faster and more accurately. Explore how it works, key use cases, and how it compares to machine learning.