What Is a Neural Net Processor (NPU)? Definition, Architecture, and Use Cases

Learn what a neural net processor is, how NPUs accelerate AI workloads through dedicated hardware, how they compare to GPUs and CPUs, and where they are deployed across industries.

What Is a Neural Net Processor?

A neural net processor, commonly referred to as a neural processing unit (NPU), is a specialized hardware chip designed to accelerate the mathematical operations that underpin neural networks. Unlike general-purpose processors, an NPU is purpose-built to handle the matrix multiplications, convolutions, and activation functions that deep learning models depend on.

The result is faster inference, lower power consumption, and higher throughput for AI workloads compared to running the same tasks on a standard CPU.

The term "neural net processor" encompasses a family of dedicated silicon architectures that share a common goal: making artificial intelligence computation more efficient at the hardware level.

This category includes NPUs embedded in mobile system-on-chips (SoCs), standalone AI accelerator cards for data centers, and neuromorphic processors that mimic the structure of biological neurons. What unites them is a design philosophy that prioritizes parallel numerical computation over the sequential instruction execution that traditional CPUs excel at.

NPUs have become a defining feature of modern computing. Apple, Qualcomm, Intel, AMD, Samsung, and Google all ship processors with integrated neural engines or dedicated AI cores. In smartphones, laptops, and IoT devices, these chips handle tasks like real-time language translation, photo enhancement, voice recognition, and on-device machine learning inference.

In data centers, larger NPU architectures power the training and serving of large-scale models. The proliferation of NPUs reflects a broader industry shift: as AI workloads grow in volume and complexity, general-purpose hardware alone cannot keep pace.

How Neural Net Processors Work

To understand how a neural net processor operates, it helps to consider what a neural network actually requires from hardware. A typical deep learning model consists of layers of interconnected nodes. Each layer performs a series of multiply-accumulate (MAC) operations, taking input values, multiplying them by learned weights, summing the results, and passing the output through a nonlinear activation function.

A single inference pass through a large transformer model can involve billions of these operations.
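To make the multiply-accumulate pattern concrete, here is a minimal sketch of one fully connected layer in plain Python. The function name `dense_layer` and the tiny example values are illustrative assumptions, not taken from any particular framework. A real NPU performs the same arithmetic in parallel hardware rather than in a loop.

```python
def dense_layer(inputs, weights, biases):
    """Compute one fully connected layer with ReLU, counting MAC operations."""
    outputs = []
    mac_count = 0
    for neuron_weights, bias in zip(weights, biases):
        acc = bias
        for x, w in zip(inputs, neuron_weights):
            acc += x * w  # one multiply-accumulate (MAC)
            mac_count += 1
        outputs.append(max(0.0, acc))  # ReLU activation
    return outputs, mac_count


# Hypothetical layer: 3 inputs feeding 2 neurons.
inputs = [1.0, 2.0, 3.0]
weights = [[0.5, -1.0, 0.25], [1.0, 1.0, -1.0]]
biases = [0.0, 0.5]

outputs, macs = dense_layer(inputs, weights, biases)
print(outputs, macs)  # [0.0, 0.5] 6
```

The MAC count is simply inputs times neurons (3 × 2 = 6 here), which is why layers with thousands of inputs and outputs quickly reach millions of operations each.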

A conventional CPU processes instructions sequentially across a small number of powerful cores. It can run neural network operations, but it does so inefficiently because its architecture was not designed for the massive parallelism that matrix math demands. A GPU improves on this by providing thousands of smaller cores that execute operations in parallel. NPUs take specialization further by building the logic for neural network operations directly into the hardware.

The core architectural features of a neural net processor include:

- Massive parallelism. NPUs contain arrays of processing elements (sometimes called MAC units or tensor cores) that execute thousands of multiply-accumulate operations simultaneously. This maps directly to the structure of matrix and tensor computations in neural networks.

- On-chip memory hierarchy. Moving data between a processor and external memory is slow and energy-intensive. NPUs include large on-chip SRAM buffers and scratchpad memory to keep weights and activations close to the compute units, minimizing data movement.

- Reduced precision arithmetic. Many neural network operations do not require the full 32-bit floating-point precision that CPUs and GPUs typically use. NPUs support lower precision formats such as INT8, INT4, FP16, and BF16. Reducing precision increases throughput and decreases power consumption, usually with only a small loss of model accuracy when quantization is applied carefully.

- Dataflow architecture. Rather than fetching instructions from memory one at a time, many NPUs use a dataflow execution model. Data moves through a pipeline of compute stages, with each stage performing a specific operation. This reduces instruction overhead and keeps the compute units continuously fed.

- Hardware support for common operations. NPUs include dedicated circuits for operations that appear frequently in neural networks, such as convolution (critical for convolutional neural networks), matrix multiplication, pooling, batch normalization, and activation functions like ReLU and sigmoid.
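The reduced-precision idea in the list above can be sketched with symmetric per-tensor INT8 quantization. This is one simple scheme among several and is not necessarily what any given NPU toolchain uses. The function names and sample weights are assumptions made for illustration.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to the range [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map INT8 codes back to approximate float values."""
    return [q * scale for q in quantized]


# Hypothetical FP32 weights.
weights = [0.12, -0.5, 0.33, 1.27, -1.0]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)          # [12, -50, 33, 127, -100]
print(max_error)  # bounded by roughly scale / 2
```

Each weight now fits in one byte instead of four, and the rounding error per weight is at most about half of `scale`. Whether that error is acceptable depends on the model, which is why accuracy should be re-validated after quantization.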

The result of this specialization is significant. An NPU can deliver tens to hundreds of tera-operations per second (TOPS) for neural network workloads while consuming a fraction of the power that a GPU would require for the same task. This efficiency is what makes it possible to run sophisticated AI models on battery-powered devices like smartphones and wearables.
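As a rough guide to where a TOPS figure comes from, peak throughput is commonly estimated as two operations (one multiply, one add) per MAC unit per clock cycle. The sketch below applies that convention. The MAC-unit count, clock rate, per-inference operation count, and 50% utilization are hypothetical values chosen for illustration, not specifications of a real chip.

```python
def peak_tops(mac_units, clock_hz):
    """Peak tera-operations per second, counting each MAC as two operations."""
    return 2 * mac_units * clock_hz / 1e12


def inference_latency_ms(ops_per_inference, tops, utilization):
    """Estimated latency when only a fraction of peak throughput is sustained."""
    sustained_ops_per_second = tops * 1e12 * utilization
    return ops_per_inference / sustained_ops_per_second * 1000.0


# Hypothetical NPU: 16,384 MAC units clocked at 1.25 GHz.
npu_tops = peak_tops(mac_units=16_384, clock_hz=1.25e9)

# Hypothetical model needing 8 billion operations per inference at 50% utilization.
latency_ms = inference_latency_ms(ops_per_inference=8e9, tops=npu_tops, utilization=0.5)

print(round(npu_tops, 2))    # 40.96
print(round(latency_ms, 3))  # 0.391
```

Peak TOPS is an upper bound. Sustained throughput depends on how well the compiler keeps the MAC array fed, so measured latency on real hardware is the number that matters.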

The software stack that accompanies an NPU is equally important. Compilers and runtime frameworks translate high-level model definitions written in tools like PyTorch or TensorFlow into optimized instruction sequences for the specific NPU hardware. Model optimization techniques such as quantization, layer fusion, and graph compilation are applied during this process to maximize hardware utilization.
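Layer fusion is easier to see with a small example. The sketch below folds a batch normalization step into the linear layer that precedes it, a standard inference-time transformation. It is written in plain Python with made-up parameter values. Real NPU compilers apply this kind of rewrite on the model graph, and their exact passes vary by vendor.

```python
import math


def linear(x, weights, biases):
    """y = W x + b for a single input vector."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]


def batch_norm(y, gamma, beta, mean, var, eps=1e-5):
    """Inference-time batch normalization using stored statistics."""
    return [g * (v - m) / math.sqrt(s + eps) + b
            for v, g, b, m, s in zip(y, gamma, beta, mean, var)]


def fuse_linear_bn(weights, biases, gamma, beta, mean, var, eps=1e-5):
    """Fold batch norm into the linear layer so one layer replaces two."""
    fused_w, fused_b = [], []
    for row, b, g, bt, m, s in zip(weights, biases, gamma, beta, mean, var):
        k = g / math.sqrt(s + eps)  # per-output scaling factor
        fused_w.append([w * k for w in row])
        fused_b.append((b - m) * k + bt)
    return fused_w, fused_b


# Hypothetical parameters for a 2-input, 2-output layer.
weights = [[1.0, 2.0], [0.5, -1.0]]
biases = [0.1, -0.2]
gamma, beta = [1.5, 0.5], [0.0, 1.0]
mean, var = [0.2, -0.1], [4.0, 0.25]
x = [3.0, -1.0]

unfused = batch_norm(linear(x, weights, biases), gamma, beta, mean, var)
fused_w, fused_b = fuse_linear_bn(weights, biases, gamma, beta, mean, var)
fused = linear(x, fused_w, fused_b)
print(unfused, fused)  # the two results agree up to floating-point rounding
```

The fused layer produces the same output with one pass over the data instead of two, which saves both compute and a round trip through memory.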

NPU vs GPU vs CPU

The relationship between NPUs, GPUs, and CPUs is not one of replacement but of complementary specialization. Each processor type has strengths that make it suitable for different parts of the AI pipeline and different deployment contexts.

CPUs are general-purpose processors optimized for sequential logic, branching, and diverse instruction sets. They handle operating system tasks, application logic, and data preprocessing effectively. For AI workloads, CPUs can run inference on smaller models, but their limited parallelism makes them inefficient for the large matrix operations that dominate neural network computation. A CPU's throughput on AI tasks varies widely with core count and vector extensions, but it is typically well below what a GPU or NPU delivers at a comparable power budget.

GPUs were originally designed for graphics rendering, which requires processing millions of pixels in parallel. This parallel architecture translates well to neural network computation, making GPUs the dominant hardware for model training. A modern data center GPU can deliver hundreds of TFLOPS and includes specialized tensor cores for accelerated matrix math.

However, GPUs consume substantial power (often 200 to 700 watts per card) and require active cooling, making them impractical for most edge and mobile deployments.

NPUs occupy the efficiency-optimized end of the spectrum. They sacrifice the general-purpose flexibility of CPUs and the raw peak throughput of high-end GPUs in exchange for maximum performance per watt on neural network workloads. A mobile NPU might deliver 10 to 45 TOPS while consuming only a few watts, enabling on-device AI features that would drain a battery in minutes if run on the CPU or GPU alone.

Key differences at a glance:

- Power efficiency. NPUs lead for inference workloads. They deliver more AI operations per watt than either CPUs or GPUs.

- Flexibility. CPUs are the most versatile, handling any computation. GPUs handle parallel workloads broadly. NPUs are narrow, optimized specifically for neural network operations.

- Training vs. inference. GPUs dominate model training due to their high memory bandwidth and massive parallelism. NPUs are primarily designed for inference, though some data center NPUs also support training.

- Deployment context. CPUs are everywhere. GPUs are common in workstations, servers, and gaming hardware. NPUs are increasingly embedded in mobile SoCs, laptops, automobiles, and edge AI devices.

In practice, modern devices use all three processor types together. A smartphone SoC, for example, contains CPU cores for general tasks, a GPU for graphics and display, and an NPU for AI inference. The operating system's AI framework routes each workload to the processor best suited for it. This heterogeneous computing model is now the standard approach for balancing performance, power, and capability.
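The routing decision described above can be sketched as a simple policy function. The `Workload` type, its fields, and the rules in `route` are hypothetical. Real operating system frameworks use richer information, such as current load, thermal state, and per-operator support.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    kind: str                        # "control", "graphics", or "neural"
    npu_supports_all_ops: bool = False


def route(workload: Workload) -> str:
    """Pick a processor for a workload using a deliberately simple policy."""
    if workload.kind == "neural" and workload.npu_supports_all_ops:
        return "NPU"  # most efficient choice for supported neural networks
    if workload.kind in ("graphics", "neural"):
        return "GPU"  # parallel work the NPU cannot take
    return "CPU"      # general-purpose and control-heavy tasks


jobs = [
    Workload("ui_event_loop", "control"),
    Workload("game_rendering", "graphics"),
    Workload("photo_denoise", "neural", npu_supports_all_ops=True),
    Workload("experimental_model", "neural", npu_supports_all_ops=False),
]

assignments = {job.name: route(job) for job in jobs}
print(assignments)
```

With these inputs, the event loop lands on the CPU, rendering and the unsupported model on the GPU, and the denoising model on the NPU.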

Neural Net Processor Use Cases

NPUs enable a range of applications that would be impractical or impossible without dedicated AI hardware. The following use cases illustrate how neural net processors are deployed across industries.

Smartphones and Consumer Devices

Every major smartphone platform now includes an NPU. These chips power features that users interact with daily: computational photography (scene detection, noise reduction, portrait mode), real-time language translation, voice assistants with on-device speech recognition, and intelligent text prediction. Running these features locally on the NPU rather than in the cloud delivers faster response times, preserves battery life, and keeps personal data on the device.

Laptop processors from Intel, AMD, and Apple now include integrated NPUs as well. These enable features like intelligent video conferencing (background blur, eye tracking, noise suppression), on-device document summarization, and AI-assisted creative tools in photo and video editing software.

Autonomous Vehicles

Self-driving cars generate enormous volumes of sensor data from cameras, lidar, radar, and ultrasonic arrays. The vehicle's perception system must process this data in real time to detect objects, classify road users, predict trajectories, and make driving decisions within milliseconds. Dedicated neural net processors, often multiple NPUs working in parallel, provide the computational backbone for these perception pipelines.

Automotive NPU platforms from companies like NVIDIA, Mobileye, and Tesla are designed for the thermal constraints, safety certification requirements, and reliability standards of the automotive industry. They deliver the high throughput and low latency that safety-critical driving decisions demand.

Machine Vision and Industrial Automation

Factory floors use machine vision systems for quality inspection, defect detection, robotic guidance, and process monitoring. These systems rely on convolutional neural networks to analyze visual data in real time.

NPUs embedded in industrial cameras and inspection stations handle this inference locally, ensuring that production lines operate at full speed without waiting for cloud-based processing.

The low power consumption of NPUs also matters in industrial settings where adding active cooling or high-wattage hardware to existing equipment is impractical.

Healthcare and Medical Imaging

Medical devices increasingly incorporate neural net processors for diagnostic assistance. Portable ultrasound units, retinal scanners, and pathology imaging systems use on-device AI to highlight areas of concern, measure anatomical structures, and flag potential abnormalities for clinician review. Running inference on an embedded NPU means the device works in settings without reliable internet connectivity, such as rural clinics and field hospitals.

Wearable health monitors use NPUs to continuously analyze biosignals (heart rate variability, blood oxygen, sleep patterns) and detect anomalies that may indicate a health event, all without transmitting sensitive patient data to external servers.

Natural Language Processing and Conversational AI

NPUs accelerate on-device natural language processing tasks including speech-to-text conversion, text generation, sentiment analysis, and language translation. By running these models locally on a neural net processor, devices can respond to voice commands, transcribe meetings, and translate conversations in real time without depending on cloud connectivity or exposing private conversations to external services.

As transformer models become more efficient and quantization techniques improve, the range of language tasks that can run entirely on-device continues to expand. NPUs are central to this shift toward private, low-latency language AI.

Neuromorphic and Research Applications

A specialized subset of neural net processors takes inspiration from biological neural systems. Neuromorphic computing chips, such as Intel's Loihi and IBM's neurosynaptic chips, use spiking neural network architectures that process information through discrete spikes rather than continuous numerical values.

These processors are still primarily in research, but they offer the potential for extreme energy efficiency and real-time learning at the hardware level.
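To illustrate what "discrete spikes" means, the following sketch simulates a single leaky integrate-and-fire neuron, a common textbook model for spiking networks. It is not a description of how Loihi or any other chip implements neurons. The threshold, leak factor, and input currents are assumed values.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Leaky integrate-and-fire neuron: returns one 0/1 spike per time step."""
    potential = 0.0
    spikes = []
    for current in input_current:
        potential = leak * potential + current  # decay, then integrate input
        if potential >= threshold:
            spikes.append(1)   # emit a spike
            potential = reset  # and reset the membrane potential
        else:
            spikes.append(0)
    return spikes


spikes = simulate_lif([0.5, 0.5, 0.5, 0.0, 0.0, 1.2])
print(spikes)  # [0, 0, 1, 0, 0, 1]
```

The neuron stays silent until enough input accumulates, so computation (and energy use) is driven by events rather than by a fixed stream of numerical values.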

Challenges and Limitations

Despite their advantages, neural net processors come with trade-offs that engineers, product teams, and organizations must weigh carefully.

Software Ecosystem Fragmentation

Each NPU vendor provides its own compiler, runtime, and optimization toolchain. A model optimized for Apple's Neural Engine may not run on Qualcomm's Hexagon NPU without significant rework. This fragmentation increases development effort and creates vendor lock-in. Standardization efforts exist (ONNX, TensorFlow Lite, Android NNAPI), but gaps remain, especially for advanced model architectures and custom operations.

Limited Flexibility

NPUs are designed for a specific set of operations. If a model uses novel layer types, custom activation functions, or unconventional architectures, portions of the computation may fall back to the CPU or GPU, negating the performance benefits of the NPU. The hardware's strength is its specialization, but that same specialization limits its ability to handle workloads outside its design parameters.
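The fallback behavior can be pictured as a graph partitioning step. The sketch below groups a model's operations into consecutive NPU and CPU segments based on a supported-operator set. The operator names and the supported set are hypothetical, and real compilers also weigh transfer costs before deciding where each segment runs.

```python
def partition_by_device(ops, npu_supported):
    """Group consecutive ops into (device, [ops]) segments."""
    segments = []
    for op in ops:
        device = "NPU" if op in npu_supported else "CPU"
        if segments and segments[-1][0] == device:
            segments[-1][1].append(op)      # extend the current segment
        else:
            segments.append((device, [op]))  # start a new segment
    return segments


# Hypothetical model with one operator the NPU does not support.
model_ops = ["conv", "relu", "custom_attention", "matmul", "softmax"]
supported = {"conv", "relu", "matmul", "softmax"}

segments = partition_by_device(model_ops, supported)
transfers = len(segments) - 1  # each boundary implies moving tensors between processors

print(segments)
print(transfers)  # 2
```

A single unsupported operator in the middle of the graph splits it into three segments and adds two device-to-device transfers, which is often where the performance loss comes from.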

Model Size Constraints

On-chip memory in mobile and edge NPUs is limited. Large models must be compressed, quantized, or pruned to fit within the memory budget. This optimization process can reduce model accuracy, and not every model architecture compresses well. Engineers must balance model capability against hardware constraints, sometimes making architectural choices driven by NPU limitations rather than optimal model performance.
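A quick footprint estimate shows why precision matters for fitting a model into a memory budget. The parameter count and the 32 MB budget below are hypothetical. The estimate covers weights only and ignores activations, quantization scales, and runtime overhead.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"FP32": 4.0, "FP16": 2.0, "BF16": 2.0, "INT8": 1.0, "INT4": 0.5}


def weight_footprint_mb(num_params, precision):
    """Approximate weight storage in megabytes (1 MB = 1e6 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e6


def precisions_that_fit(num_params, budget_mb):
    """List the precisions whose weight footprint fits within the budget."""
    return [p for p in BYTES_PER_PARAM
            if weight_footprint_mb(num_params, p) <= budget_mb]


# Hypothetical 25-million-parameter model and a 32 MB memory budget.
num_params = 25_000_000
for precision in BYTES_PER_PARAM:
    print(precision, weight_footprint_mb(num_params, precision), "MB")

fits = precisions_that_fit(num_params, budget_mb=32)
print(fits)  # ['INT8', 'INT4']
```

In this example the model only fits once its weights are quantized to 8 bits or fewer, which is the kind of hardware-driven decision the paragraph above describes.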

Rapid Hardware Obsolescence

The NPU landscape evolves quickly. A chip released this year may be significantly outperformed by next year's architecture. Organizations investing in NPU-dependent products must plan for hardware refresh cycles, ensure their software is portable across NPU generations, and avoid over-optimizing for a single hardware target.

Debugging and Profiling Complexity

Profiling and debugging model execution on an NPU is more difficult than on a CPU or GPU. Standard debugging tools may not have visibility into the NPU's internal execution. Performance bottlenecks can be caused by data movement, scheduling decisions in the NPU compiler, or unsupported operations that silently fall back to less efficient processors. Diagnosing these issues requires specialized tooling and deep knowledge of the target hardware.

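| Challenge | Impact | Mitigation |
|---|---|---|
| Software Ecosystem Fragmentation | Vendor-specific compilers, runtimes, and toolchains increase development effort and create lock-in. | Target portable formats and runtimes (ONNX, TensorFlow Lite, Android NNAPI) where they cover the model. |
| Limited Flexibility | Unsupported layers fall back to the CPU or GPU, negating the NPU's performance benefit. | Prefer operations the target NPU supports and profile to confirm that critical layers run on the NPU. |
| Model Size Constraints | Limited on-chip memory forces compression, which can reduce accuracy. | Quantize, prune, or compress the model, then validate accuracy against the original. |
| Rapid Hardware Obsolescence | A chip released this year may be significantly outperformed by next year's architecture. | Plan for hardware refresh cycles, keep software portable across NPU generations, and avoid over-optimizing for a single target. |
| Debugging and Profiling Complexity | Standard tools lack visibility into NPU execution, so bottlenecks and silent fallbacks are hard to diagnose. | Use specialized profiling tooling and build knowledge of the target hardware. |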

How to Get Started

Working with neural net processors does not require designing custom silicon. The hardware and software ecosystems are mature enough that developers and organizations can begin leveraging NPUs with existing tools and platforms.

Identify your inference requirements. Determine which AI workloads in your product or workflow would benefit from dedicated hardware acceleration. Common candidates include real-time image classification, speech recognition, on-device language models, and sensor data analysis. If your workloads involve high-throughput machine learning inference with latency or power constraints, an NPU is likely relevant.

Choose a hardware platform. For mobile and laptop applications, the NPU is typically integrated into the SoC (Apple M-series Neural Engine, Qualcomm Hexagon, Intel AI Boost, AMD XDNA). For edge AI deployments, dedicated accelerator modules such as Google Coral and Hailo, or GPU-based modules such as NVIDIA Jetson, provide AI acceleration. For data center workloads, options include Google TPUs, AWS Inferentia, and Intel Gaudi.

Learn the software toolchain. Each NPU platform has an associated SDK and compiler. Apple provides Core ML, Qualcomm offers the AI Engine Direct SDK, Google has the TensorFlow Lite delegate for Coral, and NVIDIA provides TensorRT for Jetson. Frameworks like PyTorch and TensorFlow include export paths that target these runtimes. Start by converting an existing trained model to the format your target NPU requires and running a benchmark.

Optimize your models. Apply quantization (converting FP32 weights to INT8 or INT4) to reduce model size and increase NPU throughput. Use pruning to remove redundant parameters. Profile execution on the target NPU to identify bottlenecks and ensure that all critical layers are accelerated by the NPU rather than falling back to the CPU.
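Pruning can be sketched with unstructured magnitude pruning, which zeroes the weights with the smallest absolute values. This is one simple variant. The function name, sample weights, and 50% sparsity target are assumptions for illustration, and whether a given NPU benefits from unstructured sparsity depends on its hardware.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights."""
    num_to_prune = int(len(weights) * sparsity)
    if num_to_prune == 0:
        return list(weights)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_indices = set(order[:num_to_prune])
    return [0.0 if i in pruned_indices else w for i, w in enumerate(weights)]


# Hypothetical layer weights.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]

pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
```

After pruning, accuracy should be measured again and, if needed, recovered with a short fine-tuning pass before the model is converted for the target NPU.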

Build iteratively. Start with a single model on a single NPU platform. Validate accuracy, measure latency and power consumption, and compare against CPU and GPU baselines. Once you have confidence in the workflow, expand to additional models and deployment targets. The learning curve is steepest at the beginning, particularly around model conversion and debugging, but the toolchains improve with each hardware generation.
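For the latency measurements in this step, a small timing harness is usually enough to begin with. The sketch below uses only the Python standard library. `fake_inference` is a stand-in workload, and in practice you would pass a function that calls your runtime's own inference entry point.

```python
import statistics
import time


def benchmark(run_inference, warmup=5, iterations=50):
    """Time repeated calls and report median and 95th-percentile latency in ms."""
    for _ in range(warmup):
        run_inference()  # warm caches and any lazy initialization
    latencies_ms = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_inference()
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    latencies_ms.sort()
    p95_index = min(len(latencies_ms) - 1, int(0.95 * len(latencies_ms)))
    return {
        "median_ms": statistics.median(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
        "runs": len(latencies_ms),
    }


def fake_inference():
    """Placeholder workload standing in for a real model call."""
    sum(i * i for i in range(10_000))


result = benchmark(fake_inference)
print(result)
```

Running the same harness against CPU, GPU, and NPU backends gives comparable numbers. Reporting a tail percentile alongside the median helps expose occasional slow runs caused by fallback or scheduling.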

For teams building AI-powered applications or evaluating hardware for deep learning deployment, understanding NPU capabilities is becoming a core competency. The performance, power, and privacy benefits that neural net processors deliver make them an increasingly central component of the modern AI stack.

FAQ

What is the difference between an NPU and a GPU?

A GPU is a massively parallel processor originally designed for graphics rendering that has been adapted for AI workloads. It offers high raw throughput and is the standard hardware for training large models. An NPU is a processor designed exclusively for neural network operations. It is more power-efficient than a GPU for inference tasks because its architecture eliminates the overhead of general-purpose graphics capabilities. GPUs excel at training and high-end inference in data centers. NPUs excel at efficient inference on edge devices, mobile hardware, and power-constrained environments.

Do all smartphones have neural net processors?

Most smartphones released since 2020 from major manufacturers include some form of neural processing unit or AI accelerator integrated into the main SoC. Apple's A-series chips include a Neural Engine. Qualcomm's Snapdragon processors include the Hexagon NPU. Samsung's Exynos and Google's Tensor chips include dedicated AI cores. Budget devices may have less capable or no dedicated NPU hardware, falling back to the CPU or GPU for AI tasks.

Can an NPU be used for training machine learning models?

Most mobile and edge NPUs are designed for inference, not training. Training requires large memory capacity, high memory bandwidth, and the ability to compute gradients across the entire model, capabilities that are better served by data center GPUs or specialized training accelerators like Google TPUs. However, some emerging NPU architectures support on-device fine-tuning and federated learning, where a pretrained model is updated with local data without sending it to the cloud.

What programming frameworks support NPUs?

Major machine learning frameworks including PyTorch and TensorFlow provide export and compilation paths for NPU deployment. Apple Core ML, Qualcomm AI Engine Direct, Google's LiteRT (formerly TensorFlow Lite), ONNX Runtime, and NVIDIA TensorRT are the primary runtimes for converting and executing models on specific accelerator hardware. The Android Neural Networks API (NNAPI) has provided a hardware abstraction layer for NPU access on Android devices, although Google deprecated it in Android 15.

How do NPUs affect battery life?

NPUs improve battery life for AI workloads compared to running the same tasks on a CPU or GPU. Because NPUs are architecturally optimized for neural network operations, they complete inference tasks using significantly less energy. A voice assistant running on an NPU, for example, can listen for wake words continuously while consuming minimal power. Without an NPU, the same task would draw more current from the CPU, reducing battery endurance noticeably.

Further reading

What Is Edge AI? Definition, Benefits, and Use Cases

Learn what edge AI is, how it processes data locally on devices, its core benefits for latency and privacy, and real-world use cases across industries.

What Is Retrieval-Augmented Generation (RAG)? How It Works and Why It Matters

Retrieval-augmented generation (RAG) combines information retrieval with language model generation to produce accurate, grounded responses. Learn how RAG works, its use cases, and implementation strategies.

Responsible AI: Principles, Frameworks, and Implementation

Responsible AI is the practice of designing, developing, and deploying artificial intelligence systems that are ethical, transparent, and accountable. Learn the core principles, leading frameworks, and practical steps for implementation.

ChatGPT Enterprise: Pricing, Features, and Use Cases for Organizations

Learn what ChatGPT Enterprise offers, how its pricing works, key features like data privacy and admin controls, and practical use cases across industries.

Create a Course Using ChatGPT - A Guide to AI Course Design

Learn how to create an online course, design curricula, and produce marketing copies using ChatGPT in simple steps with this guide.

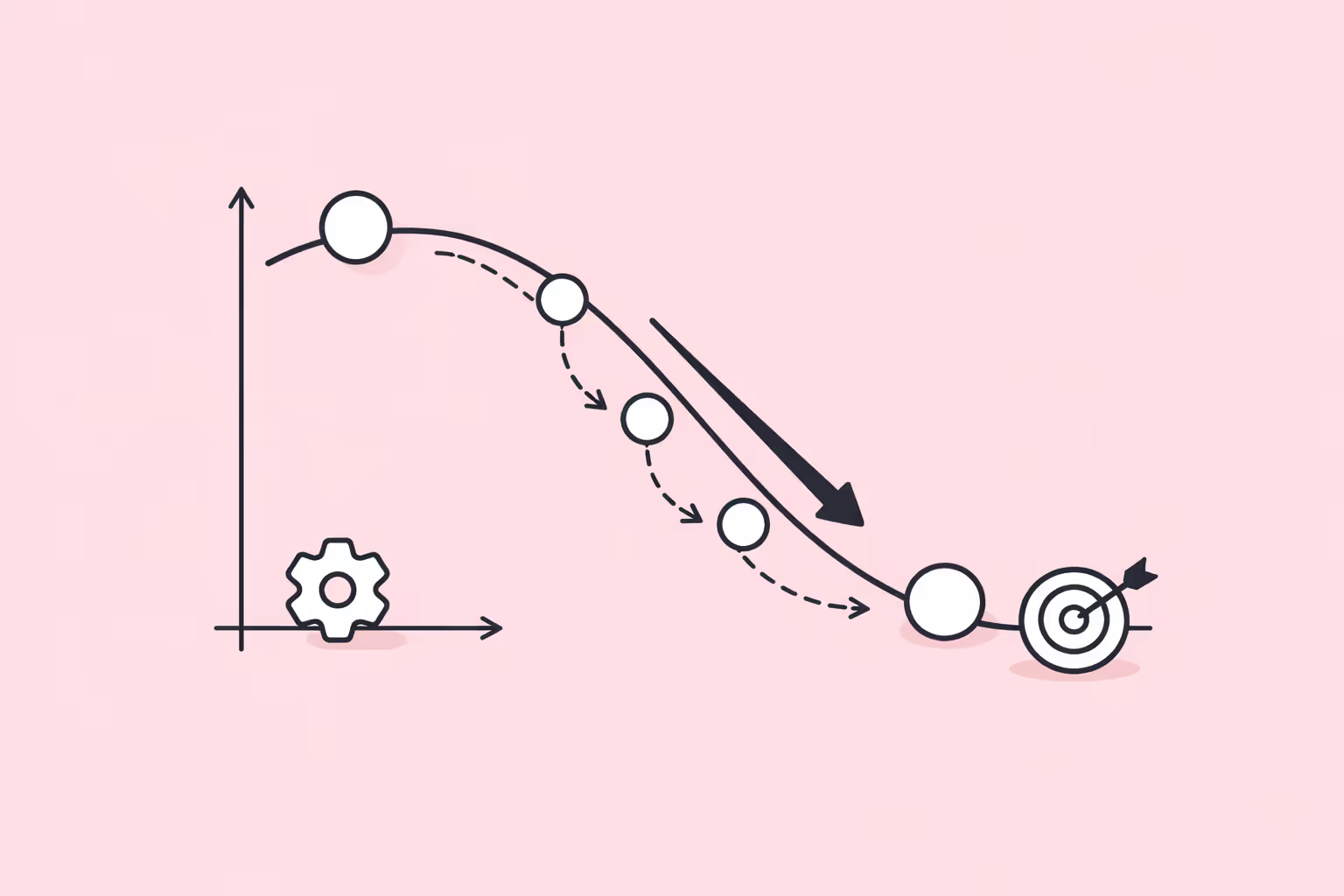

Gradient Descent: How It Works, Types, and Practical Implementation

Learn what gradient descent is, how it optimizes machine learning models, its main variants, and how to implement it in practice.