What Is Natural Language Understanding? Definition, How It Works, and Use Cases

Learn what natural language understanding (NLU) is, how it works, and where it applies. Explore the difference between NLU, NLP, and NLG, plus real use cases and how to get started.

What Is Natural Language Understanding?

Natural language understanding (NLU) is a subfield of natural language processing that focuses on enabling machines to comprehend the meaning behind human language. While NLP handles the broader mechanics of language interaction, NLU zeroes in on interpretation. It transforms unstructured text or speech into structured data that software systems can act on.

NLU goes beyond recognizing individual words. It identifies intent, extracts entities, resolves ambiguity, and maps language to actionable meaning. When a user types "cancel my subscription effective immediately," an NLU system determines that the intent is cancellation, the entity is the user's subscription, and the temporal modifier "effective immediately" means the change should take effect now. This structured understanding is what allows downstream systems to execute the right action.

The significance of NLU within artificial intelligence has grown as organizations increasingly rely on language-driven interfaces. Every virtual assistant, smart search engine, and automated support system depends on NLU to bridge the gap between how humans communicate and how machines process information. Without NLU, these systems would only match keywords rather than grasp what users actually mean.

How NLU Works

NLU operates through a series of layered processes that progressively extract deeper meaning from raw text. Each layer builds on the output of the previous one, moving from surface-level parsing to semantic interpretation.

Tokenization and Preprocessing

The first step breaks raw input into discrete units called tokens. Tokens are typically words or subwords, though they can also be characters depending on the model architecture. Preprocessing also handles normalization tasks such as lowercasing, removing punctuation, and expanding contractions. This stage prepares the text for deeper analysis by creating a clean, consistent representation.
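The normalization steps described above can be sketched in a few lines of Python. This is a minimal illustration, not how production tokenizers work: the `CONTRACTIONS` table and the `preprocess` function are hypothetical names introduced here, and modern models usually rely on learned subword tokenizers instead.

```python
import re

# Hypothetical, deliberately tiny contraction table; real systems use larger lists.
CONTRACTIONS = {
    "can't": "cannot",
    "won't": "will not",
    "don't": "do not",
    "i'm": "i am",
}

def preprocess(text: str) -> list:
    """Lowercase, expand contractions, strip punctuation, and split into word tokens."""
    text = text.lower().replace("\u2019", "'")  # normalize curly apostrophes
    for short, full in CONTRACTIONS.items():
        text = text.replace(short, full)
    text = re.sub(r"[^\w\s]", " ", text)  # replace punctuation with spaces
    return text.split()

tokens = preprocess("I can't log in, please cancel my subscription!")
print(tokens)
```

Whether to remove punctuation or lowercase at all depends on the downstream model; some tasks, such as named entity recognition, benefit from keeping capitalization.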

Syntactic Analysis

Syntactic analysis examines the grammatical structure of a sentence. Part-of-speech tagging identifies whether each token functions as a noun, verb, adjective, or other grammatical category. Dependency parsing maps the relationships between words, establishing which words modify or depend on others. This structural understanding is essential for disambiguating sentences where word order or phrasing creates multiple possible interpretations.

Semantic Analysis

Semantic analysis is where NLU begins to interpret meaning rather than just structure. This layer maps words and phrases to concepts, resolving issues like polysemy (words with multiple meanings) and synonymy (different words with the same meaning). Modern NLU systems use vector embeddings to represent words as numerical vectors in high-dimensional space, capturing semantic relationships that rule-based systems cannot.
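To make the idea of vector embeddings concrete, the sketch below compares words by cosine similarity. The three-dimensional vectors in `EMBEDDINGS` are invented for illustration; real embeddings are learned from data and typically have hundreds of dimensions.

```python
import math

# Made-up 3-dimensional vectors for illustration only; real embeddings are learned.
EMBEDDINGS = {
    "refund": [0.9, 0.1, 0.0],
    "reimbursement": [0.8, 0.2, 0.1],
    "jacket": [0.0, 0.1, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

similar = cosine_similarity(EMBEDDINGS["refund"], EMBEDDINGS["reimbursement"])
unrelated = cosine_similarity(EMBEDDINGS["refund"], EMBEDDINGS["jacket"])
print(f"refund vs reimbursement: {similar:.2f}")
print(f"refund vs jacket: {unrelated:.2f}")
```

With these toy vectors the synonyms score close to 1 and the unrelated pair scores close to 0, which is the property that lets a system treat different words with the same meaning alike.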

Intent Recognition

Intent recognition classifies the overall purpose of a user's input. In a customer service context, intents might include "check order status," "request refund," or "update address." Intent classifiers are typically built using machine learning models trained on labeled datasets where each example is mapped to a specific intent category. Deep learning architectures, particularly those based on the transformer model, have significantly improved intent recognition accuracy.
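As a rough illustration of mapping utterances to intent categories from labeled examples, the sketch below uses a nearest-neighbour match on word overlap (Jaccard similarity). This is a stand-in chosen for brevity, not a transformer or any trained model; the example utterances, intent names, and the `out_of_scope` fallback are assumptions made for this example.

```python
# Hypothetical labeled examples: (utterance, intent)
TRAINING_EXAMPLES = [
    ("where is my order", "check_order_status"),
    ("track my package", "check_order_status"),
    ("i want my money back", "request_refund"),
    ("please refund my purchase", "request_refund"),
    ("change my shipping address", "update_address"),
    ("i moved and need to update my address", "update_address"),
]

def jaccard(a: set, b: set) -> float:
    """Share of words two sets have in common, between 0.0 and 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def classify_intent(utterance: str):
    """Return the intent of the most similar labeled example and its score."""
    words = set(utterance.lower().split())
    best_intent, best_score = "out_of_scope", 0.0
    for text, intent in TRAINING_EXAMPLES:
        score = jaccard(words, set(text.split()))
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent, best_score

print(classify_intent("please refund my order"))
print(classify_intent("hello there"))
```

A trained classifier generalizes to phrasings that share no words with the training data, which this overlap heuristic cannot do.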

Entity Extraction

Entity extraction identifies specific pieces of information within the input. Named entities include people, places, dates, monetary amounts, product names, and other structured data points. For the input "Ship the blue jacket to 45 Oak Street by Thursday," entity extraction pulls out "blue jacket" (product), "45 Oak Street" (address), and "Thursday" (date). This structured data feeds directly into backend systems that fulfill the user's request.
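The shipping example above can be reproduced with a small rule-based extractor. This is only a sketch under simplifying assumptions: `PRODUCT_CATALOG` is a hypothetical product list, and the regular expressions cover just a few street suffixes and weekday names. Production systems usually use trained sequence-labeling models instead of hand-written patterns.

```python
import re

PRODUCT_CATALOG = ["blue jacket", "red scarf"]  # hypothetical catalog entries
ADDRESS_PATTERN = re.compile(r"\b\d+\s+(?:[A-Z][a-z]+\s)+(?:Street|Avenue|Road)\b")
WEEKDAY_PATTERN = re.compile(
    r"\b(?:Monday|Tuesday|Wednesday|Thursday|Friday|Saturday|Sunday)\b"
)

def extract_entities(text: str) -> dict:
    """Return the first product, address, and weekday found in the text."""
    entities = {}
    lowered = text.lower()
    for product in PRODUCT_CATALOG:
        if product in lowered:
            entities["product"] = product
            break
    address = ADDRESS_PATTERN.search(text)
    if address:
        entities["address"] = address.group()
    day = WEEKDAY_PATTERN.search(text)
    if day:
        entities["date"] = day.group()
    return entities

print(extract_entities("Ship the blue jacket to 45 Oak Street by Thursday"))
```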

Context and Coreference Resolution

Advanced NLU systems maintain context across multiple turns of conversation. Coreference resolution links pronouns and references to their antecedents. When a user says "I want to return it," the system must determine that "it" refers to an item mentioned earlier in the conversation, possibly several turns back.

Models like BERT and its successors excel at this type of contextual understanding because they process entire sequences of text bidirectionally, capturing relationships that earlier sequential models missed.
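The sketch below shows the bookkeeping side of multi-turn context with a simple recency heuristic: remember the last item mentioned and substitute it for "it." This is not how BERT-style models resolve coreference; `KNOWN_ITEMS` and `DialogueContext` are hypothetical names used only to illustrate the input and output of the task.

```python
import re

KNOWN_ITEMS = ["blue jacket", "running shoes"]  # hypothetical catalog

class DialogueContext:
    """Tracks the most recently mentioned item across conversation turns."""

    def __init__(self):
        self.last_item = None

    def resolve(self, utterance: str) -> str:
        lowered = utterance.lower()
        # Remember any known item mentioned in this turn.
        for item in KNOWN_ITEMS:
            if item in lowered:
                self.last_item = item
        # Replace the standalone pronoun "it" with the remembered item, if any.
        if self.last_item is not None:
            return re.sub(
                r"\bit\b", f"the {self.last_item}", utterance, flags=re.IGNORECASE
            )
        return utterance

context = DialogueContext()
context.resolve("I ordered the blue jacket last week")
print(context.resolve("I want to return it"))
```

A recency rule breaks as soon as two candidate items are in play or "it" refers to something abstract, which is where learned contextual models earn their keep.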

| Component | Function | Key Detail |
|---|---|---|
| Tokenization and Preprocessing | Breaks raw input into tokens and normalizes the text | Tokens are words, subwords, or characters; normalization includes lowercasing, removing punctuation, and expanding contractions |
| Syntactic Analysis | Examines the grammatical structure of a sentence | Part-of-speech tagging and dependency parsing |
| Semantic Analysis | Maps words and phrases to concepts | Resolves polysemy and synonymy; uses vector embeddings |
| Intent Recognition | Classifies the overall purpose of a user's input | Classifiers trained on labeled examples, with intents such as "check order status" or "request refund" |
| Entity Extraction | Identifies specific pieces of information within the input | People, places, dates, monetary amounts, product names |
| Context and Coreference Resolution | Maintains context across multiple turns of conversation | Links pronouns and references to their antecedents |

NLU vs NLP vs NLG

These three terms are frequently confused, but they describe distinct components within the language AI pipeline. Understanding the boundaries between them clarifies where NLU fits and why it matters.

Natural language processing is the umbrella discipline. It encompasses every computational method for working with human language, including reading, understanding, and generating text. NLP is the full stack. NLU and NLG are specialized layers within it.

Natural language understanding is the comprehension layer. It takes raw human input and converts it into structured, machine-readable representations. NLU answers the question: "What does this person mean?" It handles intent classification, entity extraction, sentiment analysis, and semantic parsing. NLU is an input-side technology. Its job is to listen and interpret.

Natural language generation is the production layer. It takes structured data, logical outputs, or model-generated content and converts it into human-readable text. NLG answers the question: "How should the system respond?" It handles response formulation, text summarization, report writing, and dialogue responses. NLG is an output-side technology. Its job is to speak and write.

In a conversational AI system, NLU processes the user's message, a dialogue manager determines the appropriate action, and NLG formulates the reply. All three stages operate under the NLP umbrella. Removing any one of them breaks the pipeline. A system with strong NLG but weak NLU will produce fluent responses to misunderstood requests. A system with strong NLU but weak NLG will understand perfectly but respond awkwardly.
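The three-stage flow can be sketched as three small functions chained together. Everything here is a toy under stated assumptions: keyword rules stand in for the NLU model, a lookup table stands in for the dialogue manager, and fixed templates stand in for NLG. The function names, intents, and reply texts are invented for illustration.

```python
def understand(message: str) -> dict:
    """NLU stage: map raw text to a structured frame (keyword rules as a stand-in)."""
    text = message.lower()
    if "refund" in text:
        return {"intent": "request_refund"}
    if "order" in text and ("where" in text or "status" in text):
        return {"intent": "check_order_status"}
    return {"intent": "unknown"}

def decide(frame: dict) -> dict:
    """Dialogue manager stage: choose an action for the recognized intent."""
    actions = {
        "request_refund": {"action": "open_refund_case"},
        "check_order_status": {"action": "lookup_order"},
    }
    return actions.get(frame["intent"], {"action": "ask_clarification"})

def generate(decision: dict) -> str:
    """NLG stage: turn the chosen action into a reply (templates as a stand-in)."""
    templates = {
        "open_refund_case": "I've opened a refund request for you.",
        "lookup_order": "Let me check the status of your order.",
        "ask_clarification": "Sorry, could you rephrase that?",
    }
    return templates[decision["action"]]

def respond(message: str) -> str:
    return generate(decide(understand(message)))

print(respond("Where is my order?"))
```

Keeping the stages separate makes the failure modes described above easy to see: a wrong frame from `understand` yields a fluent but irrelevant reply, no matter how good `generate` is.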

The distinction also matters for evaluation. NLU performance is typically measured by intent classification accuracy and by precision, recall, and F1 score for entity extraction. NLG performance is measured by fluency, relevance, and coherence. NLP performance, as the parent category, is assessed holistically across the full input-to-output cycle.

NLU Use Cases

Natural language understanding powers a wide range of applications across industries. The common thread is any scenario where machines must interpret human language to take action or deliver insight.

Virtual Assistants and Chatbots

Every major virtual assistant relies on NLU as its comprehension engine. When a user asks a voice assistant to "set an alarm for 6 AM tomorrow" or tells a customer service chatbot "I was charged twice for the same order," NLU is what transforms those utterances into structured commands. The accuracy of intent recognition and entity extraction directly determines whether the assistant performs the correct action or frustrates the user with irrelevant responses.

Sentiment Analysis

NLU enables organizations to gauge public opinion, customer satisfaction, and brand perception at scale. Sentiment analysis systems parse reviews, social media posts, survey responses, and support tickets to classify emotional tone as positive, negative, or neutral. More granular systems detect specific emotions such as frustration, satisfaction, or urgency. This capability feeds into product development, marketing strategy, and customer experience optimization.
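The simplest form of the positive, negative, or neutral classification described above is a lexicon count. The sketch below assumes two tiny hand-picked word lists (`POSITIVE_WORDS`, `NEGATIVE_WORDS`); it is meant to show the shape of the task, not a method that would hold up in production.

```python
import re

# Hypothetical mini-lexicons; real lexicons contain thousands of scored entries.
POSITIVE_WORDS = {"great", "love", "excellent", "helpful"}
NEGATIVE_WORDS = {"terrible", "broken", "frustrating", "slow"}

def classify_sentiment(text: str) -> str:
    """Count positive and negative words and return the dominant tone."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE_WORDS for w in words) - sum(
        w in NEGATIVE_WORDS for w in words
    )
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(classify_sentiment("The support team was great and helpful"))
print(classify_sentiment("The app is slow and the checkout is broken"))
```

Word counting has no notion of context: it labels the sarcastic "Great, another meeting" as positive, which is one reason learned models are preferred for this task.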

Semantic Search

Traditional keyword search matches documents based on literal word overlap. Semantic search uses NLU to understand the intent behind a query and return results based on meaning rather than exact phrasing.

A search for "how to fix a leaking faucet" should return results about plumbing repair even if those documents never use the word "fix." NLU-powered search systems use language modeling and vector representations to match queries with semantically relevant content.
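The faucet example can be imitated with a hand-written concept table that maps different surface words to a shared concept. The `CONCEPT_OF` table below is an assumption standing in for the learned vector representations that real semantic search systems use; it only demonstrates matching on meaning instead of literal word overlap.

```python
# Hypothetical word-to-concept table; real systems learn this from data.
CONCEPT_OF = {
    "fix": "repair", "repair": "repair", "mend": "repair",
    "leaking": "leak", "dripping": "leak",
    "faucet": "tap", "tap": "tap",
}

def concepts(text: str) -> set:
    """Map each known word in the text to its concept."""
    return {CONCEPT_OF[w] for w in text.lower().split() if w in CONCEPT_OF}

def semantic_search(query: str, documents: list) -> list:
    """Rank documents by the number of concepts shared with the query."""
    query_concepts = concepts(query)
    scored = [(len(query_concepts & concepts(doc)), doc) for doc in documents]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [doc for _, doc in ranked]

documents = [
    "How to repair a dripping tap",
    "Choosing a kitchen faucet finish",
    "Best hiking trails this summer",
]
print(semantic_search("how to fix a leaking faucet", documents))
```

The top result contains none of the words "fix," "leaking," or "faucet," yet it ranks first because all three of its concepts match the query.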

Machine Translation

Machine translation depends on NLU to preserve meaning across languages. Translating "the bank was steep" requires the system to understand that "bank" refers to a riverbank, not a financial institution. Without NLU's disambiguation capabilities, translation systems produce literal but nonsensical output.

Modern neural translation systems integrate NLU at every stage to handle idioms, context-dependent vocabulary, and grammatical restructuring.

Email and Document Classification

Enterprises process millions of emails, invoices, contracts, and support tickets. NLU automates the classification of these documents by intent, topic, urgency, and required action. An incoming email that says "We need to renegotiate payment terms before the contract renewal" is automatically routed to the appropriate department, tagged with relevant metadata, and prioritized based on urgency signals extracted by the NLU layer.

Healthcare and Clinical NLU

In healthcare, NLU processes clinical notes, patient intake forms, and medical literature. It extracts diagnoses, medication names, dosage information, and symptom descriptions from unstructured physician notes. This structured extraction feeds into electronic health records, clinical decision support systems, and population health analytics. The stakes are high in this domain, as misinterpretation can affect patient outcomes.

Education and Learning Platforms

NLU supports educational technology by powering automated essay scoring, adaptive tutoring systems, and intelligent content recommendations. When a learner asks a question in natural language, NLU interprets the query and retrieves the most relevant learning material. Platforms like Teachfloor leverage AI capabilities to help instructors deliver structured, cohort-based learning experiences where intelligent language understanding enhances learner support and engagement.

Challenges and Limitations

Natural language understanding has advanced substantially, but significant obstacles remain. These limitations are not peripheral issues. They define the boundaries of what NLU systems can reliably accomplish.

Ambiguity in Human Language

Human language is inherently ambiguous. Lexical ambiguity (words with multiple meanings), syntactic ambiguity (sentences with multiple valid parses), and referential ambiguity (unclear pronoun references) create constant challenges for NLU systems. The sentence "I saw her duck" has at least two valid interpretations. Humans resolve such ambiguities effortlessly using world knowledge and context. Machines require large amounts of training data and sophisticated architectures to approximate this ability, and they still fail in edge cases.

Sarcasm, Irony, and Figurative Language

Most NLU systems default to literal interpretation. Sarcasm inverts meaning entirely. "Great, another meeting" does not express enthusiasm. Irony, metaphor, hyperbole, and understatement all require the system to detect that the surface meaning diverges from the intended meaning. These phenomena are difficult to capture in training data because they depend on tone, context, cultural background, and shared knowledge between speaker and listener.

Domain Specificity and Transfer

An NLU model trained on customer service data will underperform when applied to legal documents or medical records. Each domain has its own vocabulary, conventions, and patterns of expression. Computational linguistics research has made progress on transfer learning, where knowledge from one domain is adapted to another, but fine-tuning on domain-specific data remains necessary for production-grade accuracy.

Low-Resource Languages

Most NLU research and tooling is concentrated on English and a handful of other widely spoken languages. Languages with smaller digital footprints lack the training data, pretrained models, and evaluation benchmarks needed to build accurate NLU systems. This creates an accessibility gap where speakers of underrepresented languages are excluded from the benefits of language AI.

Data Privacy and Bias

NLU systems are trained on text data that often contains personal information, cultural biases, and discriminatory patterns. A sentiment analysis model trained on biased review data may associate certain demographic markers with negative sentiment. An intent classifier trained on narrow demographic inputs may fail to understand dialectal variation. Addressing these issues requires careful dataset curation, bias auditing, and ongoing monitoring after deployment.

Computational Cost

State-of-the-art NLU models, particularly those built on large neural network architectures, require significant computational resources for both training and inference. This creates barriers for smaller organizations and raises environmental concerns about energy consumption.

Techniques like model distillation, quantization, and efficient architectures are active areas of research aimed at reducing these costs without sacrificing accuracy.

How to Get Started with NLU

Implementing natural language understanding does not require building models from scratch. The ecosystem of tools, pretrained models, and cloud services has matured to the point where organizations of varying technical capacity can adopt NLU effectively.

Define the Problem Clearly

Start with a specific, bounded use case. "We want to automatically classify incoming support tickets by intent and urgency" is actionable. "We want to understand language" is not. A well-defined problem constrains the scope of the NLU system, simplifies data requirements, and makes success measurable. Identify the intents you need to recognize, the entities you need to extract, and the downstream actions the system must trigger.

Choose Between Build and Buy

Cloud NLU services from major providers offer pretrained models for intent classification, entity extraction, sentiment analysis, and language detection through simple API calls. These are suitable for common use cases where customization requirements are moderate. For specialized domains or high-accuracy requirements, organizations may need to fine-tune open-source models such as BERT, RoBERTa, or domain-specific variants using their own labeled data.

Prepare Training Data

NLU models learn from examples. For intent classification, this means assembling a dataset of representative user inputs labeled with the correct intent. For entity extraction, it means annotating text spans with entity types. Data quality often matters more than quantity: a small, well-labeled dataset can outperform a large, noisy one. Include variations in phrasing, misspellings, and edge cases to build a robust model.
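One possible shape for a labeled example is sketched below: the text, an intent label, and entity spans stored as character offsets. The field names, the `ship_order` label, and the `annotate` and `validate` helpers are assumptions for illustration; annotation tools and frameworks each define their own formats.

```python
def annotate(text: str, phrase: str, label: str) -> dict:
    """Build an entity span from the first occurrence of phrase in text."""
    start = text.index(phrase)  # raises ValueError if the phrase is absent
    return {"start": start, "end": start + len(phrase), "label": label}

TEXT = "Ship the blue jacket to 45 Oak Street by Thursday"
example = {
    "text": TEXT,
    "intent": "ship_order",  # hypothetical intent label
    "entities": [
        annotate(TEXT, "blue jacket", "product"),
        annotate(TEXT, "45 Oak Street", "address"),
        annotate(TEXT, "Thursday", "date"),
    ],
}

def validate(item: dict) -> list:
    """Return a list of labeling problems; an empty list means the item looks sane."""
    problems = []
    text = item["text"]
    previous_end = 0
    for span in sorted(item["entities"], key=lambda s: s["start"]):
        if not (0 <= span["start"] < span["end"] <= len(text)):
            problems.append(f"span out of bounds: {span}")
        elif span["start"] < previous_end:
            problems.append(f"overlapping span: {span}")
        previous_end = max(previous_end, span["end"])
    if not item.get("intent"):
        problems.append("missing intent label")
    return problems

print(validate(example))
```

Running a check like `validate` over the whole dataset before training is a cheap way to catch the labeling noise that degrades model quality.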

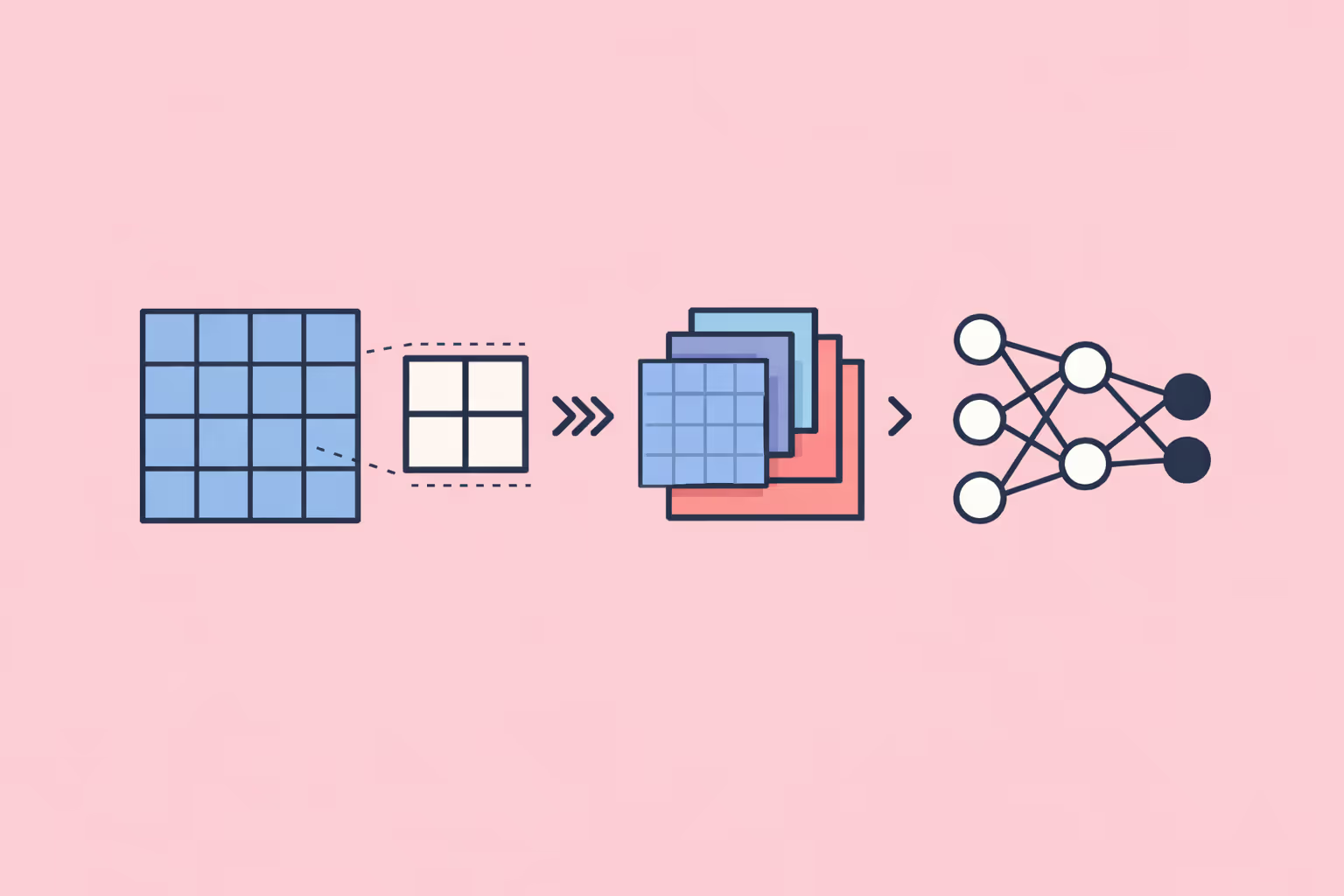

Select an Architecture

The transformer model architecture dominates modern NLU. Pretrained models like BERT provide strong baselines that can be fine-tuned with relatively small amounts of task-specific data. For simpler tasks with limited data, traditional machine learning classifiers such as support vector machines or gradient-boosted trees may suffice. The choice depends on accuracy requirements, latency constraints, and available infrastructure.

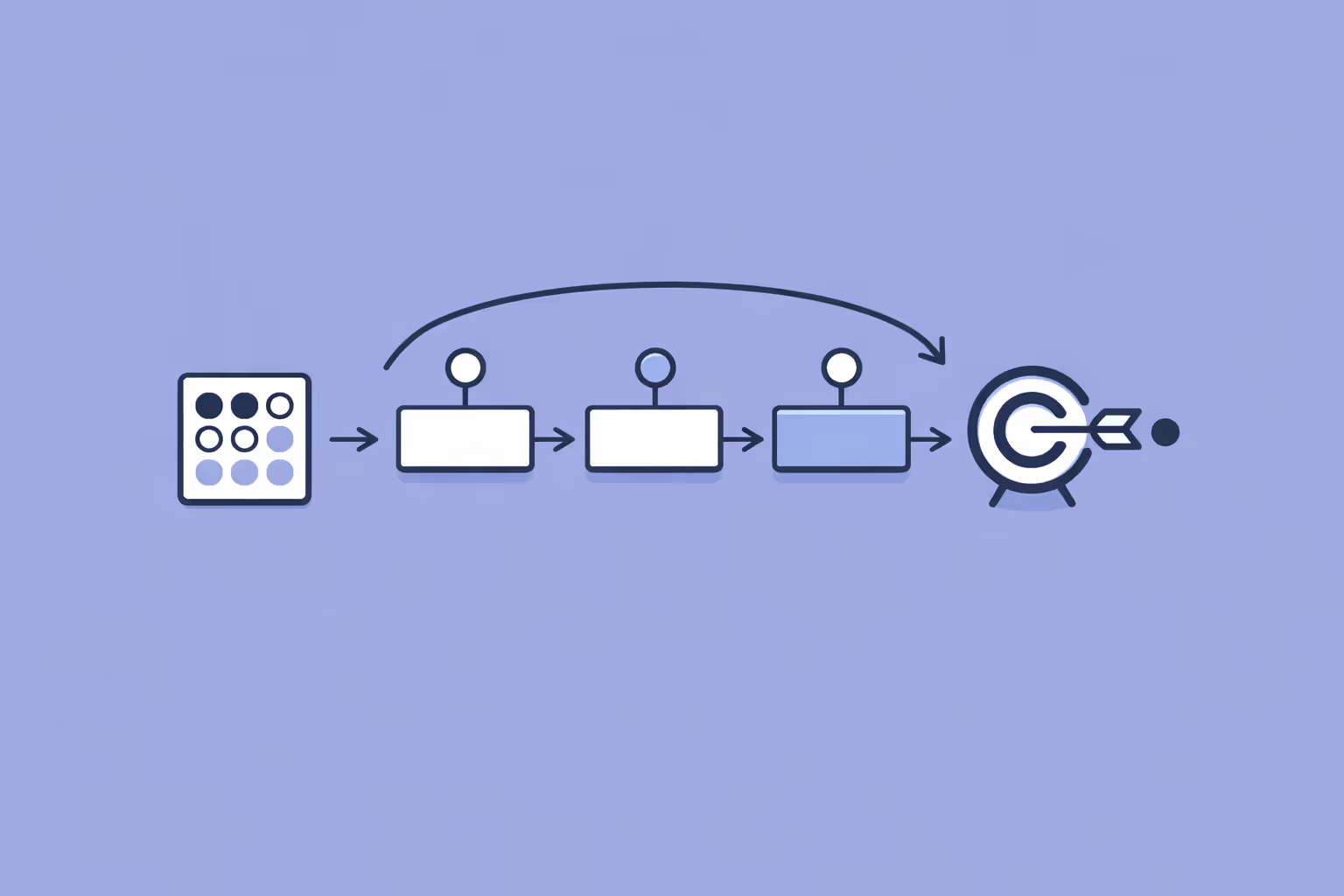

Evaluate and Iterate

Measure intent classification accuracy, entity extraction F1 scores, and end-to-end task completion rates. Test the system against adversarial inputs, out-of-scope queries, and ambiguous cases. Review failure cases manually to identify patterns. NLU is not a deploy-once technology. Continuous evaluation, retraining, and expansion of training data are essential for maintaining and improving performance over time.
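The two headline metrics can be computed directly from predictions and gold labels. The sketch below assumes exact-match scoring, where an entity counts as correct only if both its text and its type match; the sample predictions are invented for illustration.

```python
def accuracy(predicted_labels: list, gold_labels: list) -> float:
    """Fraction of examples whose predicted intent matches the gold intent."""
    correct = sum(p == g for p, g in zip(predicted_labels, gold_labels))
    return correct / len(gold_labels)

def precision_recall_f1(predicted: set, gold: set):
    """Exact-match precision, recall, and F1 over sets of (text, type) entities."""
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1

gold_entities = {
    ("blue jacket", "product"),
    ("45 Oak Street", "address"),
    ("Thursday", "date"),
}
predicted_entities = {
    ("blue jacket", "product"),
    ("Thursday", "date"),
    ("Oak", "person"),  # a wrong prediction
}

print(accuracy(["refund", "status", "refund"], ["refund", "status", "address"]))
print(precision_recall_f1(predicted_entities, gold_entities))
```

Here two of three predicted entities are correct and two of three gold entities are found, so precision, recall, and F1 all come out to two thirds.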

Integrate with Downstream Systems

An NLU model in isolation produces structured predictions. Value is realized when those predictions connect to business logic. Route classified intents to the right workflow. Populate CRM records with extracted entities. Trigger automated responses based on sentiment scores. The integration layer is where NLU transitions from a research exercise to an operational capability.
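A common way to connect predictions to business logic is a dispatch table keyed by intent. The sketch below is one hypothetical arrangement: the handler functions, the prediction dictionary layout, and the 0.7 confidence threshold for escalating to a human are all assumptions, not part of any specific product.

```python
def handle_refund(entities: dict) -> str:
    return f"refund case opened for order {entities.get('order_id', 'unknown')}"

def handle_address_update(entities: dict) -> str:
    return f"address updated to {entities.get('address', 'unknown')}"

# Hypothetical mapping from intent names to workflow handlers.
HANDLERS = {
    "request_refund": handle_refund,
    "update_address": handle_address_update,
}

def route(prediction: dict, min_confidence: float = 0.7) -> str:
    """Send confident, known intents to a handler; escalate everything else."""
    if prediction["confidence"] < min_confidence:
        return "escalated to human agent"
    handler = HANDLERS.get(prediction["intent"])
    if handler is None:
        return "escalated to human agent"
    return handler(prediction["entities"])

prediction = {
    "intent": "request_refund",
    "confidence": 0.92,
    "entities": {"order_id": "A123"},
}
print(route(prediction))
```

Routing low-confidence or unknown intents to a person keeps model errors from silently triggering the wrong workflow.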

FAQ

What is the difference between natural language understanding and natural language processing?

Natural language processing is the broad field that covers all computational interactions with human language, including understanding, generation, and translation. Natural language understanding is a specific subset of NLP focused on comprehension. NLU deals with interpreting meaning, intent, and context from text or speech.

NLP includes NLU but also encompasses natural language generation, text preprocessing, and other language tasks.

How is NLU used in virtual assistants?

Virtual assistants use NLU to convert spoken or typed commands into structured data that the system can act on. The NLU layer identifies what the user wants (intent) and extracts specific details (entities) such as names, dates, locations, and quantities. This structured output feeds into the assistant's dialogue manager, which determines the appropriate response or action.

Does NLU require deep learning?

Not necessarily. Traditional machine learning methods like logistic regression, support vector machines, and decision trees can handle simpler NLU tasks with small datasets. However, deep learning models, particularly transformer-based architectures, deliver significantly better results on complex tasks involving ambiguity, long-range dependencies, and large vocabularies. Most production NLU systems use deep learning for at least some components.

What industries benefit most from NLU?

Customer service, healthcare, financial services, e-commerce, legal, and education all benefit significantly from NLU. Any industry that processes large volumes of unstructured text or relies on language-based interactions with customers, patients, or users is a strong candidate. The specific application varies, but the core value proposition is consistent: converting human language into structured, actionable data.

How accurate are modern NLU systems?

Accuracy varies by task, domain, and model. On well-defined intent classification tasks with sufficient training data, modern NLU systems can often exceed 90% accuracy, though results on a benchmark do not guarantee the same performance on real user traffic. Entity extraction accuracy depends on entity type and domain complexity. Performance degrades on out-of-domain inputs, ambiguous language, and underrepresented languages. Regular evaluation and retraining are necessary to maintain accuracy as language patterns and user behavior evolve.

What is the role of transformers in NLU?

The transformer model architecture revolutionized NLU by enabling models to process entire sequences of text simultaneously rather than one token at a time. Self-attention mechanisms allow transformers to capture relationships between distant words in a sentence, dramatically improving performance on tasks like coreference resolution, semantic parsing, and intent classification. Pretrained transformer models such as BERT serve as the foundation for most modern NLU systems.
