
Machine Translation: What It Is, How It Works, and Where It's Going

Learn what machine translation is, how it works across rule-based, statistical, and neural approaches, its key use cases in education and business, and the challenges that still limit accuracy.

What Is Machine Translation?

Machine translation is the use of software to automatically convert text or speech from one language to another without human intervention. It is a subfield of computational linguistics and natural language processing that applies algorithmic models to produce translations at scale and speed that human translators cannot match.

The core task is deceptively simple in description but enormously complex in execution. Languages differ not only in vocabulary but in grammar, syntax, idiom, cultural context, and levels of formality. A machine translation system must navigate all of these dimensions simultaneously, mapping meaning from a source language into a target language while preserving intent, tone, and accuracy.

Modern machine translation systems are powered by deep learning and neural networks that learn translation patterns from massive parallel corpora, datasets containing sentences aligned across two or more languages.

These systems have improved dramatically over the past decade, moving from awkward, often unusable output to translations that are fluent and contextually appropriate for many language pairs and content types.

Machine translation is not a replacement for professional human translation in all contexts. It is a tool that serves a specific set of needs: rapid comprehension of foreign-language content, first-draft translation for subsequent human editing, real-time communication across language barriers, and large-scale translation of content where perfect quality is not required.

Understanding what machine translation does well, where it struggles, and how it fits into broader language workflows is essential for anyone working with multilingual content.

How Machine Translation Works

Machine translation has evolved through several distinct technical paradigms. Each generation addressed limitations of the previous one, and understanding these stages clarifies how current systems operate and why certain limitations persist.

The Input Pipeline

Before translation begins, the input text undergoes preprocessing. This includes tokenization (splitting text into individual words or subword units), sentence boundary detection, and in some systems, lemmatization or stemming to reduce words to their base forms. These steps normalize the input so the translation model can process it consistently.
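Subword units are often produced with byte-pair encoding (BPE), although the article does not say which method any particular system uses. The sketch below is a toy version that assumes BPE. It starts from single characters, repeatedly merges the most frequent adjacent pair, and then applies the learned merges to segment a new word. Production tokenizers add end-of-word markers, byte-level fallbacks, and much larger merge lists, all of which are omitted here.

```python
from collections import Counter

def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    merged, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)

def learn_bpe(words, num_merges):
    """Learn BPE merge rules from a list of training words (toy: no end-of-word marker)."""
    vocab = Counter(tuple(word) for word in words)  # each word starts as a tuple of characters
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by how often each word occurs.
        pairs = Counter()
        for symbols, freq in vocab.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # on a tie, the first pair counted wins
        merges.append(best)
        new_vocab = Counter()
        for symbols, freq in vocab.items():
            new_vocab[merge_pair(symbols, best)] += freq
        vocab = new_vocab
    return merges

def apply_bpe(word, merges):
    """Segment a (possibly unseen) word by applying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)

merges = learn_bpe(["low", "low", "lower", "lowest"], num_merges=2)
print(merges)                     # [('l', 'o'), ('lo', 'w')]
print(apply_bpe("slow", merges))  # ['s', 'low']
```

The unseen word "slow" is split into a known subword plus a leftover character. This is why subword vocabularies let a model handle words it never saw as a whole.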

For neural systems, the preprocessed tokens are converted into vector embeddings, numerical representations that capture semantic and syntactic properties of each word or subword. These embeddings serve as the actual input to the translation model, encoding meaning in a format the neural network can manipulate mathematically.
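In its simplest form, an embedding layer is a lookup table: each token maps to an integer id, and each id selects one row of a matrix. The minimal sketch below uses a hypothetical three-word vocabulary and hand-picked 3-dimensional vectors. Real systems learn these values during training and use hundreds of dimensions. Cosine similarity shows that related words end up with nearby vectors.

```python
import math

# Hypothetical toy vocabulary and embedding table (values chosen by hand for illustration).
vocab = {"cat": 0, "dog": 1, "car": 2}
embedding_table = [
    [0.9, 0.8, 0.1],  # cat
    [0.8, 0.9, 0.2],  # dog
    [0.1, 0.2, 0.9],  # car
]

def embed(tokens):
    """Map each token to its id, then look up that id's row in the embedding table."""
    return [embedding_table[vocab[t]] for t in tokens]

def cosine(u, v):
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated (orthogonal)."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

cat, dog, car = embed(["cat", "dog", "car"])
print(cosine(cat, dog) > cosine(cat, car))  # True: "cat" sits closer to "dog" than to "car"
```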

Encoding and Decoding

Modern neural machine translation follows an encoder-decoder architecture. The encoder reads the source sentence and converts it into a dense internal representation: a sequence of vectors, one per source token, that together capture the meaning of the input. The decoder then generates the target sentence one token at a time, using the encoded representation to inform each word choice.
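The "one token at a time" loop can be sketched with greedy decoding. At each step the decoder is asked for a probability distribution over the next token, it keeps the most probable one, and it stops at an end-of-sequence marker. In the sketch, `TOY_MODEL` and `next_token_probs` are hypothetical stand-ins for a trained decoder, and the source is assumed to be "the cat sleeps". Real systems often use beam search, which keeps several candidate prefixes instead of one.

```python
# Hypothetical stand-in for a trained decoder: target prefix -> next-token probabilities.
TOY_MODEL = {
    (): {"le": 0.7, "un": 0.3},
    ("le",): {"chat": 0.6, "chien": 0.4},
    ("le", "chat"): {"dort": 0.8, "<eos>": 0.2},
    ("le", "chat", "dort"): {"<eos>": 0.9, ".": 0.1},
}

def next_token_probs(encoded_source, prefix):
    """A real decoder would condition on encoded_source; this toy ignores it."""
    return TOY_MODEL.get(tuple(prefix), {"<eos>": 1.0})

def greedy_decode(encoded_source, max_len=20):
    """Generate target tokens one at a time, always picking the most probable next token."""
    output = []
    for _ in range(max_len):
        probs = next_token_probs(encoded_source, output)
        token = max(probs, key=probs.get)
        if token == "<eos>":  # end-of-sequence marker stops generation
            break
        output.append(token)
    return output

print(greedy_decode(encoded_source=None))  # ['le', 'chat', 'dort']
```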

The critical innovation that powers current systems is the transformer model, introduced in 2017. Transformers are built on attention. Self-attention lets each position weigh the relevance of every other position in the same sentence. Encoder-decoder (cross-) attention lets the decoder weigh the relevance of every word in the source sentence when generating each word in the target sentence.

This addresses a fundamental problem that plagued earlier architectures: handling long-range dependencies, where a word at the end of a sentence depends on context established at the beginning.
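The core operation behind both kinds of attention is scaled dot-product attention, softmax(QKᵀ / √d)·V. In cross-attention, the queries come from the decoder, and the keys and values come from the encoder's outputs. In self-attention, all three come from the same sequence. The pure-Python sketch below omits the learned projection matrices and multiple heads that real transformers use, and the vectors are made up.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V, computed one query at a time."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)  # one weight per key position; the weights sum to 1
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# One decoder query attending over three hypothetical encoder states.
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
outputs, weights = attention([[4.0, 0.0]], keys, values)
print([round(w, 3) for w in weights[0]])  # most weight lands on the first source position
```

Every query scores every key directly, so a dependency between the first and last words of a sentence is one step away rather than many.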

Earlier recurrent neural network approaches processed sentences sequentially, one word at a time. This created a bottleneck where information from early words degraded as the sequence grew longer. Transformers instead connect every position to every other position directly, and during training they process all positions in parallel. Information from early words therefore no longer has to survive a long chain of sequential steps. This architectural shift produced substantial improvements in translation quality and training efficiency.

Training on Parallel Data

Neural machine translation models learn from parallel corpora: large datasets of sentences in the source language paired with their translations in the target language. During training, the model adjusts its internal parameters to minimize the difference between its predictions and the reference translations in the training data. In practice this is typically done by minimizing cross-entropy loss, which rewards the model for assigning high probability to each token of the reference translation.
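Token-level cross-entropy can be sketched as the average negative log-probability that the model assigns to each reference token. The sketch assumes the common "teacher forcing" setup, where the model sees the reference prefix at each step. The two sets of predicted distributions below are hypothetical. Training pushes the loss down, which is the same as pushing probability toward the reference tokens.

```python
import math

def cross_entropy(predicted_dists, reference_tokens):
    """Average negative log-probability of the reference tokens.

    predicted_dists[i] is the model's distribution at step i (dict: token -> probability);
    reference_tokens[i] is the token the reference translation has at that step.
    """
    if len(predicted_dists) != len(reference_tokens):
        raise ValueError("need one predicted distribution per reference token")
    eps = 1e-12  # avoid log(0) if the model gives a reference token zero probability
    losses = [-math.log(dist.get(tok, 0.0) + eps)
              for dist, tok in zip(predicted_dists, reference_tokens)]
    return sum(losses) / len(losses)

reference = ["le", "chat", "dort"]
# Hypothetical outputs of two models at each of the three decoding steps.
confident = [{"le": 0.9, "un": 0.1}, {"chat": 0.8, "chien": 0.2}, {"dort": 0.7, "mange": 0.3}]
unsure = [{"le": 0.4, "un": 0.6}, {"chat": 0.3, "chien": 0.7}, {"dort": 0.2, "mange": 0.8}]
print(cross_entropy(confident, reference) < cross_entropy(unsure, reference))  # True
```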

The quality and size of the training data directly determine the quality of the resulting system. High-resource language pairs like English-French or English-Chinese benefit from millions of aligned sentence pairs drawn from government proceedings, news articles, patents, and web content. Low-resource language pairs may have only thousands of examples, leading to significantly weaker translation quality.

Language modeling techniques have expanded the data available for training. By pretraining on large volumes of monolingual text in both languages, models develop a richer understanding of each language's structure before they encounter parallel translation data.

This approach, which draws on advances in generative AI and large language models, has proven especially valuable for low-resource language pairs.

Types of Machine Translation

Machine translation systems fall into several categories based on their underlying methodology. While neural machine translation dominates current applications, understanding all types provides context for how the field arrived at its current state.

Rule-Based Machine Translation (RBMT)

Rule-based systems translate by applying handcrafted linguistic rules. Linguists define grammar rules, morphological rules, and bilingual dictionaries that the system uses to parse source sentences, transfer meaning, and generate target sentences.
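The analysis, transfer, and generation steps can be illustrated with a toy English-to-Spanish translator. It uses a hypothetical six-word bilingual dictionary and one handwritten rule that moves adjectives after nouns. Real RBMT systems also handle morphology, agreement, and thousands of rules. This toy sidesteps agreement by using only masculine nouns.

```python
# Hypothetical toy lexicon: English word -> (Spanish word, part of speech).
LEXICON = {
    "the": ("el", "DET"),
    "red": ("rojo", "ADJ"),
    "black": ("negro", "ADJ"),
    "car": ("coche", "NOUN"),
    "dog": ("perro", "NOUN"),
    "sees": ("ve", "VERB"),
}

def translate_rbmt(sentence):
    # 1. Analysis: look up each word's translation and part of speech (KeyError if unknown).
    analyzed = [LEXICON[w] for w in sentence.lower().split()]
    # 2. Transfer: apply a handwritten reordering rule, ADJ NOUN -> NOUN ADJ.
    out, i = [], 0
    while i < len(analyzed):
        if (i + 1 < len(analyzed)
                and analyzed[i][1] == "ADJ" and analyzed[i + 1][1] == "NOUN"):
            out += [analyzed[i + 1], analyzed[i]]
            i += 2
        else:
            out.append(analyzed[i])
            i += 1
    # 3. Generation: emit the target words in their new order.
    return " ".join(word for word, _ in out)

print(translate_rbmt("The black dog sees the red car"))  # el perro negro ve el coche rojo
```

The output is fully deterministic and traceable to one rule. Any word missing from the lexicon makes the toy fail outright, which mirrors the brittleness described below.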

RBMT systems offer predictability and consistency. They produce the same output for the same input every time, and their behavior can be traced to specific rules. However, they are expensive to build, requiring years of expert effort for each language pair, and they struggle with ambiguity, idiomatic expressions, and any construction not anticipated by the rule writers.

Few new RBMT systems are being developed, but legacy systems remain in use in specialized domains like aviation and legal translation where predictability is valued.

Statistical Machine Translation (SMT)

Statistical machine translation, dominant from the early 2000s through the mid-2010s, replaced handcrafted rules with probabilistic models learned from data. SMT systems analyze parallel corpora to learn statistical associations between words and phrases across languages, then use these associations to generate the most probable translation of a given input.

The most successful SMT approach, phrase-based SMT, translates sequences of words (phrases) rather than individual words. This improved fluency because common multi-word expressions could be translated as units. However, SMT systems still struggled with long-distance dependencies, word order differences between languages, and generating truly fluent output.
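Translating phrases as units can be illustrated with a toy phrase table and a dynamic-programming search. The search picks the left-to-right segmentation whose phrase probabilities multiply to the highest score. The phrase table and its probabilities are hypothetical. Real phrase-based systems also score reordering and use a target-language model, and both are left out here.

```python
import math

# Hypothetical phrase table: source phrase -> list of (target phrase, probability).
PHRASE_TABLE = {
    ("kick",): [("patear", 0.6)],
    ("the",): [("el", 0.7), ("la", 0.3)],
    ("bucket",): [("cubo", 0.8)],
    ("kick", "the", "bucket"): [("estirar la pata", 0.5)],
}

def decode_monotone(source, table=PHRASE_TABLE, max_phrase_len=3):
    """Best monotone segmentation by summed log phrase probabilities (no reordering, no LM)."""
    words = source.lower().split()
    n = len(words)
    best = [(-math.inf, [])] * (n + 1)  # best[i] = (log score, output) for words[:i]
    best[0] = (0.0, [])
    for end in range(1, n + 1):
        for start in range(max(0, end - max_phrase_len), end):
            phrase = tuple(words[start:end])
            prev_score, prev_out = best[start]
            if phrase not in table or prev_score == -math.inf:
                continue
            target, prob = max(table[phrase], key=lambda tp: tp[1])
            score = prev_score + math.log(prob)
            if score > best[end][0]:
                best[end] = (score, prev_out + [target])
    score, output = best[n]
    return " ".join(output) if score > -math.inf else None

print(decode_monotone("kick the bucket"))  # estirar la pata
print(decode_monotone("the bucket"))       # el cubo
```

Word by word, "kick the bucket" scores 0.6 × 0.7 × 0.8 = 0.336, which is lower than the idiom phrase at 0.5. The multi-word unit therefore wins.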

SMT represented a paradigm shift: instead of encoding human knowledge about language explicitly, systems learned translation patterns from examples. This made it possible to build translation systems for new language pairs quickly, provided sufficient parallel data existed.

Neural Machine Translation (NMT)

Neural machine translation uses deep learning to build end-to-end translation models that learn to map entire sentences from source to target language. NMT systems do not decompose translation into separate steps like parsing, transfer, and generation. Instead, a single neural network handles the full translation process.

NMT produces significantly more fluent output than SMT. Because the model generates translations word by word while attending to the full source context, it can handle long-range dependencies, produce natural word order, and keep agreement and phrasing consistent within a sentence. The introduction of transformer-based models and large-scale pretraining further improved quality, particularly for complex sentence structures and morphologically rich languages.

The trade-off is interpretability. While a rule-based system can explain why it chose a particular translation, neural models operate as black boxes. Their internal representations are high-dimensional and not directly interpretable, making it difficult to diagnose specific errors or predict behavior on novel inputs.

Hybrid and Adaptive Approaches

Some systems combine multiple approaches. Hybrid systems may use neural models for core translation while applying rule-based post-processing for terminology consistency. Adaptive machine translation systems learn from translator corrections in real time, improving their output for specific domains or clients as they receive feedback.
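One simple form of rule-based post-processing for terminology consistency is glossary enforcement. It runs on the machine-translated output and replaces variants a client has not approved with the approved term. The glossary entries below are hypothetical, and the example assumes English as the target language. This naive replacement also does not preserve capitalization.

```python
import re

# Hypothetical client glossary: variant the MT engine might produce -> approved term.
GLOSSARY = {
    "log in": "sign in",
    "login": "sign in",
    "e-mail": "email",
}

def enforce_glossary(text, glossary=GLOSSARY):
    """Replace non-approved variants with approved terms (whole words, case-insensitive)."""
    # Apply longer variants first so multi-word entries match before shorter overlapping ones.
    for variant in sorted(glossary, key=len, reverse=True):
        pattern = r"\b" + re.escape(variant) + r"\b"
        text = re.sub(pattern, glossary[variant], text, flags=re.IGNORECASE)
    return text

print(enforce_glossary("Please login and check your E-mail."))
# Please sign in and check your email.
```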

Fine-tuning is a widely used adaptive technique. Organizations take a general-purpose NMT model and train it further on domain-specific parallel data, such as medical, legal, or technical content. This specialization produces significant quality improvements for the target domain without requiring a model to be built from scratch.

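| Type | Description | Best For |
|---|---|---|
| Rule-Based Machine Translation (RBMT) | Applies handcrafted grammar rules, morphological rules, and bilingual dictionaries. | Specialized domains where predictability is valued, such as aviation and legal translation |
| Statistical Machine Translation (SMT) | Learns probabilistic word and phrase associations from parallel corpora; dominant from the early 2000s through the mid-2010s. | Largely superseded by NMT; historically, building systems quickly for new language pairs with sufficient parallel data |
| Neural Machine Translation (NMT) | Uses deep learning to build end-to-end models that map entire sentences from source to target language. | Most current applications, especially fluent general-purpose translation |
| Hybrid and Adaptive Approaches | Combine neural translation with rule-based post-processing, or adapt models through translator feedback and fine-tuning. | Domain-specific content such as medical, legal, or technical material |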

Machine Translation Use Cases

Machine translation serves a broad range of practical needs across industries and contexts. Its value depends on matching the right approach to the right use case.

Cross-Border Education and E-Learning

Educational institutions and e-learning platforms increasingly operate across language boundaries. Machine translation enables course content, assessments, discussion forums, and instructor communications to reach learners in their native languages. For platforms serving global audiences, it reduces the cost and time required to localize content that would otherwise remain available only in the original language.

The quality bar matters here. Translated instructional content must be accurate enough to convey learning objectives without introducing confusion. For high-stakes content like certification materials, machine translation typically serves as a first draft that human reviewers refine. For lower-stakes content like forum posts or informal announcements, raw machine translation may be sufficient.

For a deeper look at AI-powered translation in educational contexts, see our guide to AI translation tools.

Enterprise Content Localization

Global businesses generate enormous volumes of content that need to reach audiences in multiple languages: product documentation, support articles, marketing materials, internal communications, and legal documents. Machine translation, particularly when combined with human post-editing, dramatically reduces the cost and turnaround time for localization workflows.

The post-editing model has become a standard industry practice. Machine translation generates a first draft, and professional translators review and correct it. This approach is typically faster and less expensive than translating from scratch, while maintaining quality standards appropriate for published content.
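One way to quantify how much post-editors change a draft is word-level edit distance between the machine output and the final text, normalized by the final length. This is similar in spirit to human-targeted translation edit rate (HTER), but without TER's block shifts. It is a minimal sketch, and the sentences are invented.

```python
def word_edit_distance(a, b):
    """Minimum number of word insertions, deletions, and substitutions turning a into b."""
    x, y = a.split(), b.split()
    prev = list(range(len(y) + 1))  # distances from the empty prefix of x
    for i, xw in enumerate(x, start=1):
        curr = [i]
        for j, yw in enumerate(y, start=1):
            curr.append(min(prev[j] + 1,                # delete xw
                            curr[j - 1] + 1,            # insert yw
                            prev[j - 1] + (xw != yw)))  # substitute (free if equal)
        prev = curr
    return prev[-1]

def post_edit_rate(mt_draft, post_edited):
    """Edits per word of the final text; lower means less post-editing effort."""
    return word_edit_distance(mt_draft, post_edited) / max(len(post_edited.split()), 1)

draft = "The meeting is postponed to Monday"
final = "The meeting has been postponed until Monday"
print(word_edit_distance(draft, final))  # 3
```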

Real-Time Communication

Machine translation powers real-time communication tools that bridge language gaps in conversations, meetings, customer support interactions, and collaborative work. Messaging platforms, video conferencing tools, and customer service chatbots use machine translation to let participants who speak different languages communicate without waiting for a human interpreter.

The tolerance for imperfection is higher in real-time contexts. Participants can ask clarifying questions and adjust for awkward phrasing. The value lies in enabling communication that would otherwise not happen at all, not in producing publication-quality text.

Information Access and Intelligence

Researchers, analysts, journalists, and intelligence professionals use machine translation to access information published in languages they do not read. The goal is comprehension, not publication. Machine translation allows a user to quickly scan foreign-language news, academic papers, government documents, or social media to extract relevant information and assess whether a full professional translation is warranted.

This gisting use case is one of the oldest and most reliable applications of machine translation. Even imperfect translations convey enough meaning for a knowledgeable reader to understand the main points and identify content worth investigating further.

Accessibility and Inclusion

Machine translation contributes to language accessibility for individuals and communities that would otherwise be excluded from information, services, and opportunities available only in dominant languages. Government agencies, healthcare organizations, and nonprofits use machine translation to provide critical information in languages they cannot afford to translate manually for every document.

The ethical dimension is important. When machine translation is used for high-consequence content like medical instructions, legal notices, or emergency communications, errors can cause real harm. Organizations must balance the accessibility benefit of providing some translation against the risk of providing an inaccurate one.

Challenges and Limitations

Machine translation has improved substantially, but significant challenges remain. Understanding these limitations is necessary for setting realistic expectations and designing workflows that account for them.

Quality Variability Across Languages

Translation quality varies dramatically depending on the language pair. High-resource pairs (English-Spanish, English-French, English-German, English-Chinese) benefit from large training datasets and sustained research investment. Translations between these languages are often remarkably fluent and accurate.

Low-resource language pairs tell a different story. Languages with limited digital text, fewer parallel corpora, and less research attention produce translations that are noticeably weaker. Many of the world's 7,000+ languages have essentially no machine translation coverage at all. This creates a structural inequality where speakers of well-resourced languages benefit disproportionately from translation technology.

Context and Ambiguity

Language is inherently ambiguous, and machine translation systems still struggle with many forms of ambiguity that humans resolve effortlessly. A word with multiple meanings (bank as a financial institution versus a river bank) requires contextual understanding that often extends beyond the sentence level. Pronoun resolution, implied meaning, sarcasm, and cultural references all present challenges that current systems handle inconsistently.
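Why the deciding clue may sit outside the sentence can be illustrated with a toy word-sense picker in the style of the simplified Lesk algorithm. It chooses the sense whose signature words overlap most with the context. This is a classic dictionary-overlap heuristic, not how neural systems resolve ambiguity, and the sense inventory is hypothetical.

```python
import re

# Hypothetical sense inventory for "bank": Spanish translation plus a few signature words.
SENSES = {
    "bank": [
        {"gloss": "financial institution", "es": "banco",
         "signature": {"money", "loan", "account", "deposit", "cash"}},
        {"gloss": "edge of a river", "es": "orilla",
         "signature": {"river", "water", "fishing", "shore", "mud"}},
    ],
}

def disambiguate(word, context):
    """Simplified Lesk: pick the sense sharing the most words with the context."""
    context_words = set(re.findall(r"[a-z]+", context.lower()))
    # On a tie (including zero overlap), max() keeps the first sense listed.
    return max(SENSES[word], key=lambda s: len(s["signature"] & context_words))

print(disambiguate("bank", "She sat on the bank.")["es"])                    # banco (a guess)
print(disambiguate("bank", "They went fishing. She sat on the bank.")["es"])  # orilla
```

With only the current sentence there is no clue, and the toy falls back to a default sense. The preceding sentence supplies the word that resolves it.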

Natural language understanding remains an active area of research precisely because these problems are not solved. Machine translation systems can produce fluent text that is confidently wrong, translating a sentence smoothly while completely misinterpreting its meaning. This failure mode is particularly dangerous because fluency masks the error.

Domain Specificity

General-purpose translation models perform unevenly across specialized domains. Medical, legal, financial, and technical texts use terminology, conventions, and sentence structures that differ significantly from the general-language data on which most models are trained. A model that translates news articles well may produce unreliable translations of patent claims or clinical trial protocols.

Domain adaptation through fine-tuning mitigates this problem but does not eliminate it. Fine-tuned models require domain-specific parallel data that may be expensive or difficult to obtain. They also risk overfitting to the training domain, degrading performance on general text.

Cultural and Stylistic Nuance

Translation is not just a linguistic task. It is a cultural one. Machine translation systems generally produce translations that are linguistically correct but culturally flat. They do not adapt tone, register, politeness levels, or cultural references for the target audience. A marketing message that resonates in English may produce a technically accurate but culturally awkward translation in Japanese.

Natural language generation research is exploring ways to give models greater control over style and tone, but current machine translation systems offer limited options for adjusting these parameters. For content where cultural adaptation matters, human involvement remains essential.

Bias and Fairness

Machine translation models learn from data that reflects existing societal biases. This manifests in several ways: defaulting to masculine pronouns when the source language is gender-neutral, reinforcing stereotypical associations between genders and professions, and producing translations that reflect the cultural perspective dominant in the training data.

These biases are not cosmetic. In contexts like hiring, legal proceedings, or healthcare, biased translations can produce material harm. Addressing translation bias is an ongoing challenge that intersects with broader efforts in artificial intelligence fairness and machine learning ethics.

How to Get Started

Adopting machine translation effectively requires matching tools and workflows to specific needs. A deliberate approach produces better results than defaulting to whatever free tool is most convenient.

Identify your use case clearly. The quality requirements for translating internal meeting notes differ fundamentally from those for publishing customer-facing documentation. Define whether you need gisting (understanding the gist of foreign-language content), publishable quality (output ready for external audiences), or something in between. This determines whether raw machine translation is sufficient or whether human post-editing is required.

Choose the right tool for the job. Consumer tools like Google Translate and DeepL serve well for individual gisting and informal communication. Enterprise translation platforms such as Google Cloud Translation, Amazon Translate, Microsoft Translator, and DeepL Pro offer APIs, custom glossaries, and domain adaptation features suited to organizational workflows. Evaluate tools based on supported language pairs, customization options, data privacy policies, and integration capabilities.

Invest in domain adaptation. If your content involves specialized terminology, general-purpose translation will likely underperform. Use fine-tuning, custom glossaries, or translation memory systems to tailor machine translation output to your domain. Even modest customization, like uploading a glossary of key terms and their approved translations, can meaningfully improve quality.
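A translation memory stores previously approved segment pairs and proposes the stored translation when a new source segment is close enough. The sketch below uses Python's `difflib.SequenceMatcher` ratio as the similarity score. Commercial translation memory tools compute fuzzy-match percentages in their own ways, and the memory entries and the 0.75 threshold here are hypothetical.

```python
from difflib import SequenceMatcher

# Hypothetical translation memory: approved source segment -> approved translation.
TM = {
    "Click Save to store your changes.": "Haga clic en Guardar para almacenar los cambios.",
    "Your password has expired.": "Su contraseña ha caducado.",
}

def tm_lookup(segment, memory=TM, threshold=0.75):
    """Return (score, stored source, stored translation) for the closest entry,
    or None if no entry reaches the threshold."""
    best = None
    for source, target in memory.items():
        score = SequenceMatcher(None, segment.lower(), source.lower()).ratio()
        if best is None or score > best[0]:
            best = (score, source, target)
    return best if best is not None and best[0] >= threshold else None

match = tm_lookup("Click Save to store all your changes.")
if match:
    score, source, target = match
    print(f"{score:.0%} match: {target}")  # a post-editor then adjusts the stored translation
```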

Design a post-editing workflow. For any content that will be published, shared externally, or used for consequential decisions, implement a human post-editing step. Train post-editors to work efficiently with machine translation output, focusing on meaning accuracy, terminology consistency, and cultural appropriateness rather than retranslating from scratch.

Measure and iterate. Establish quality metrics appropriate to your use case. Automated metrics like BLEU scores provide rough comparisons between systems but do not capture the full picture. Human evaluation, even on a sampled basis, gives the most reliable assessment of whether translations meet your standards. Track quality over time and feed corrections back into your customization pipeline.
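To show what BLEU actually measures, here is a minimal corpus-level version with one reference per sentence. It combines clipped ("modified") n-gram precision for n = 1 to 4 with a brevity penalty for short output. It uses plain whitespace tokenization and no smoothing. For real comparisons, an established implementation such as sacreBLEU, which standardizes tokenization, is the safer choice.

```python
import math
from collections import Counter

def ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def corpus_bleu(hypotheses, references, max_n=4):
    """Corpus-level BLEU (0-100), one reference per sentence, whitespace tokens, no smoothing."""
    matches, totals = [0] * max_n, [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        h, r = hyp.split(), ref.split()
        hyp_len += len(h)
        ref_len += len(r)
        for n in range(1, max_n + 1):
            h_counts, r_counts = ngrams(h, n), ngrams(r, n)
            # Clip each n-gram's count to how often it appears in the reference.
            matches[n - 1] += sum(min(c, r_counts[g]) for g, c in h_counts.items())
            totals[n - 1] += max(len(h) - n + 1, 0)
    if min(matches) == 0:
        return 0.0  # geometric mean is zero if any n-gram order has no matches
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    brevity_penalty = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * brevity_penalty * math.exp(log_precision)

refs = ["the cat is on the mat"]
print(corpus_bleu(["the cat is on the mat"], refs))  # 100.0
print(corpus_bleu(["the cat sat on the mat"], refs))  # 0.0
```

The second hypothesis is a reasonable translation, yet it scores 0.0 because no 4-gram matches the reference. This is one concrete reason automated metrics need human evaluation alongside them.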

Stay informed about advances. Machine translation is evolving rapidly, driven by advances in transformer models, BERT-based architectures, and large-scale language modeling. Capabilities that are unreliable today may become standard within a few years. Periodically reassess your tools and workflows to take advantage of improvements.

FAQ

How accurate is machine translation today?

For high-resource language pairs like English-Spanish, English-French, and English-Chinese, modern neural machine translation produces output that is fluent and largely accurate for general content. Professional translators evaluating machine translation output often find that 60 to 80 percent of segments require only minor or no corrections. For low-resource language pairs, specialized domains, or content with complex cultural nuance, accuracy drops significantly.

Machine translation should always be evaluated relative to the specific language pair, content type, and quality standard required.

What is the difference between machine translation and AI translation?

There is no strict technical distinction. "AI translation" is a general term that describes any translation performed by artificial intelligence, which includes machine translation. In marketing and product contexts, "AI translation" sometimes implies a newer, more capable system powered by large language models or generative AI, while "machine translation" may refer more broadly to all automated translation approaches including older statistical and rule-based systems. In practice, the terms overlap substantially.

Can machine translation replace human translators?

Not for all use cases. Machine translation excels at high-volume, lower-stakes translation tasks: gisting foreign-language content, producing first drafts for post-editing, enabling real-time communication, and translating content where perfect quality is not essential. Human translators remain necessary for literary translation, legally binding documents, culturally sensitive content, creative marketing copy, and any context where a subtle error in meaning carries significant consequences.

The most productive workflow for many organizations combines machine translation for speed and scale with human expertise for quality and nuance.

Is machine translation safe for confidential content?

It depends on the tool and deployment model. Free consumer translation services typically process text on external servers, and their terms of service may allow the provider to use submitted text for model improvement. Enterprise APIs from major cloud providers offer data processing agreements and commitments not to use customer data for training. On-premise and self-hosted translation models provide the highest level of data control.

Organizations handling confidential, proprietary, or regulated content should evaluate the data handling practices of any machine translation tool before use and choose deployment options that align with their security and privacy requirements.

What languages does machine translation support?

Coverage varies by provider. Google Translate supports over 130 languages. DeepL covers approximately 30 languages with a focus on quality for European language pairs. Enterprise services like Amazon Translate and Microsoft Translator support 75 to 100+ languages. However, support does not imply equal quality. Translation quality is highest for language pairs with abundant training data and active research communities.

Many of the world's languages remain unsupported or poorly served by current machine translation technology.

Further reading

.avif)

25+ Best ChatGPT Prompts for Instructional Designers

Discover over 25 best ChatGPT prompts tailored for instructional designers to enhance learning experiences and creativity.

Dropout in Neural Networks: How Regularization Prevents Overfitting

Learn what dropout is, how it prevents overfitting in neural networks, practical implementation guidelines, and when to use alternative regularization methods.

Autonomous AI: Definition, Capabilities, and Limitations

Autonomous AI refers to self-governing systems that operate without human intervention. Learn its capabilities, real-world applications, limitations, and safety.

What Is an Expert System? Definition, Architecture, and Examples

Learn what an expert system is, how it works, its core architecture, real-world examples across industries, and how it compares to machine learning.

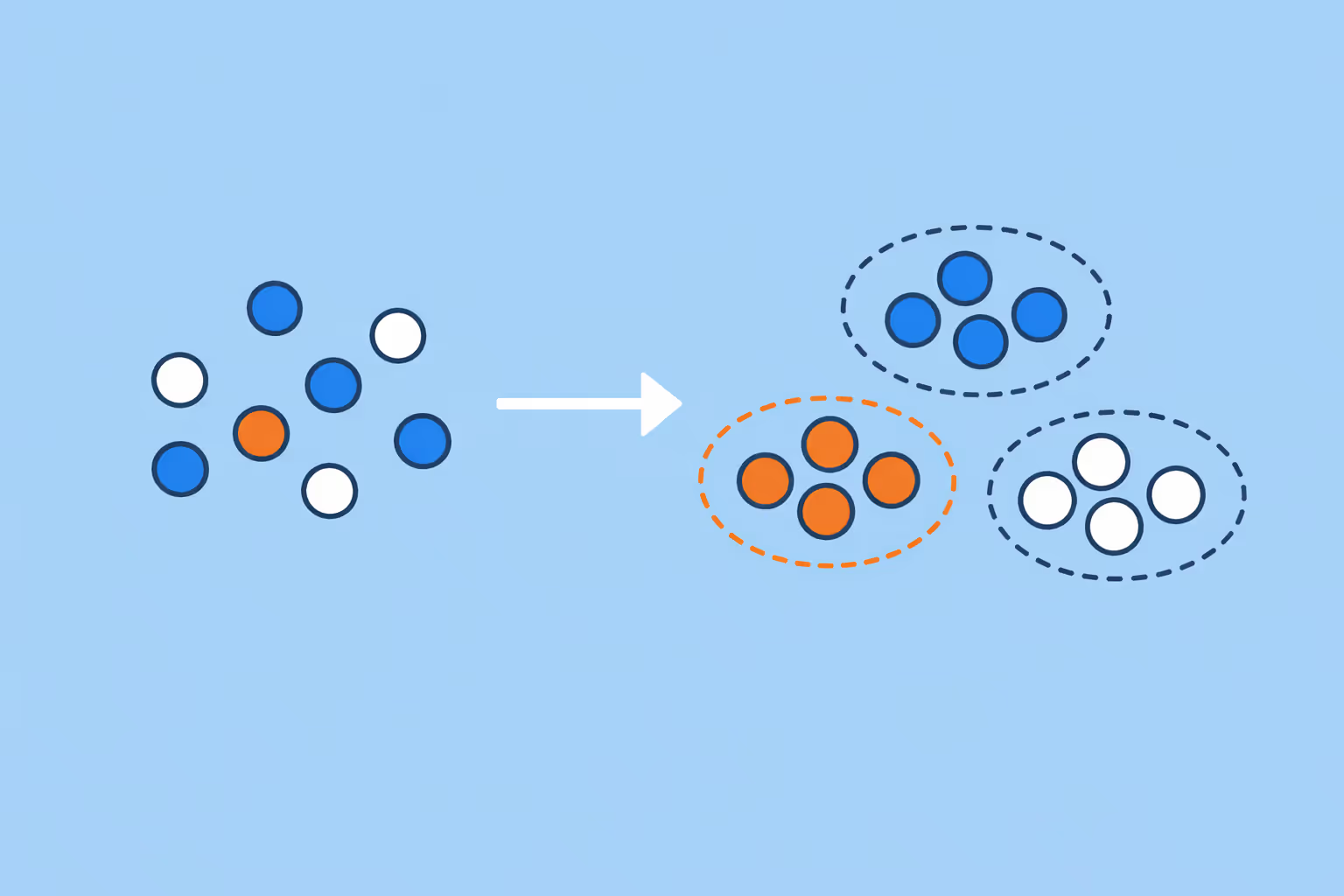

Clustering in Machine Learning: Methods, Use Cases, and Practical Guide

Clustering in machine learning groups unlabeled data by similarity. Learn the key methods, real-world use cases, and how to choose the right approach.

Data Dignity: What It Is and Why It Matters

Data dignity is the principle that people should have agency, transparency, and fair compensation for the personal data they generate. Learn how it works and why it matters.