Machine Learning Engineer: What They Do, Skills, and Career Path

Learn what a machine learning engineer does, the key skills and tools required, common career paths, and how to enter this high-demand field.

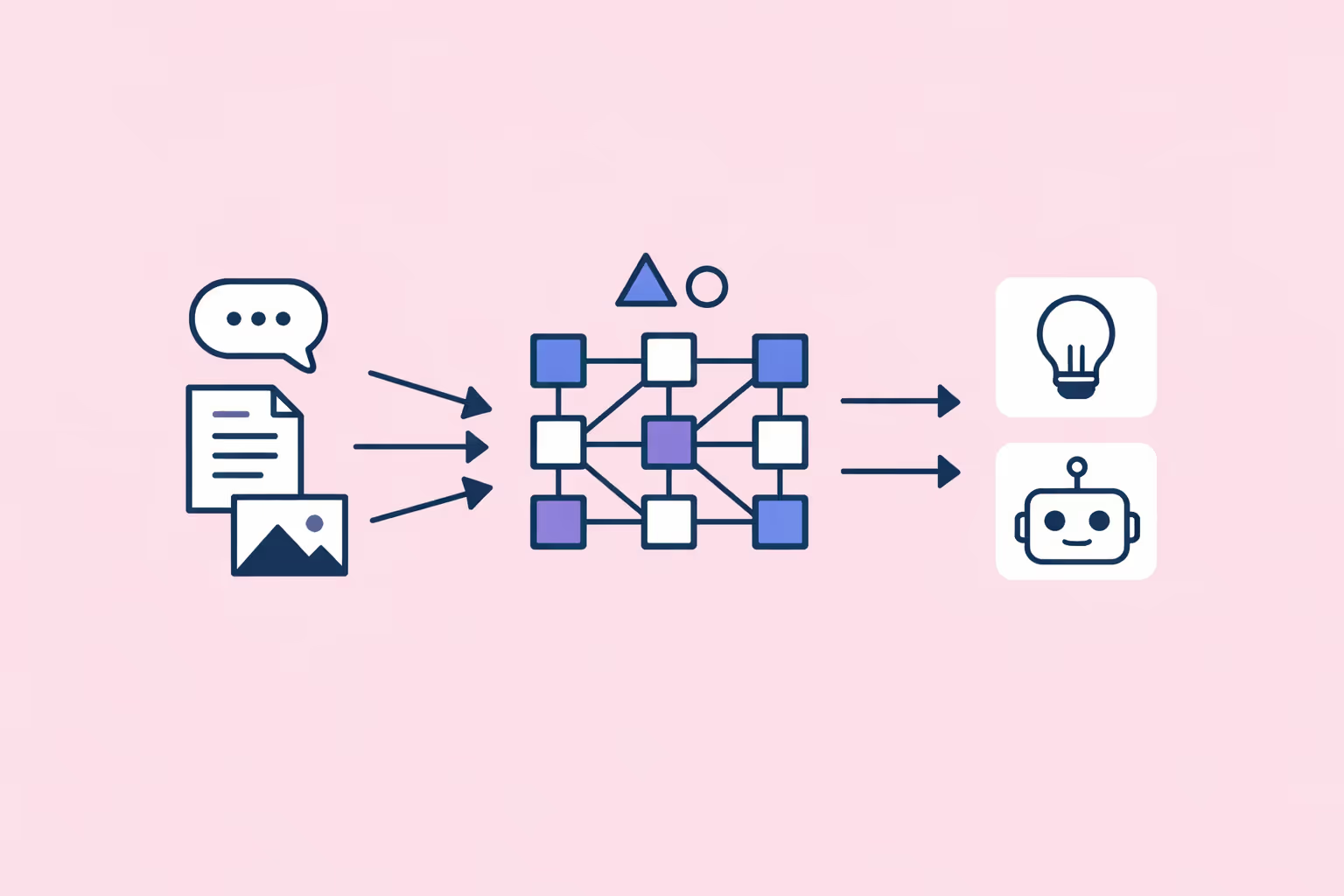

What Is a Machine Learning Engineer?

A machine learning engineer is a software professional who designs, builds, and deploys machine learning systems that operate at production scale. The role combines software engineering discipline with applied knowledge of artificial intelligence, statistics, and data infrastructure.

Where a data scientist focuses on analysis and experimentation, a machine learning engineer focuses on turning research prototypes into reliable, scalable software. The distinction is important. A model that performs well in a notebook but fails under real traffic, produces stale predictions, or cannot be retrained efficiently has limited business value. Machine learning engineers solve exactly those problems.

The role emerged as organizations moved from experimenting with AI to embedding it into their core products and operations. Recommendation engines, fraud detection pipelines, autonomous systems, and conversational interfaces all require engineers who understand both the algorithmic principles behind deep learning and the infrastructure demands of serving predictions at scale.

Machine learning engineers typically sit between research teams and platform engineering teams. They translate model architectures into production code, build training pipelines, optimize inference performance, and monitor deployed systems for drift or degradation. In many organizations, the machine learning engineer is the person who makes AI actually work.

What Machine Learning Engineers Do

The day-to-day work of a machine learning engineer spans model development, systems engineering, and operational monitoring. The balance shifts depending on the organization, but several responsibilities appear consistently across roles.

Designing and Training Models

Machine learning engineers select appropriate model architectures for a given problem. A recommendation system might use collaborative filtering or a neural network. A text classification task might call for a transformer model. The engineer evaluates tradeoffs between accuracy, latency, and computational cost, then builds the training pipeline.

Training involves preparing data, configuring hyperparameters, and running experiments across potentially thousands of configurations.

Engineers use techniques like gradient descent and backpropagation to optimize model weights, and they rely on frameworks such as PyTorch and TensorFlow to implement these workflows efficiently.
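
As a rough illustration of what gradient descent does, the sketch below fits a one-variable linear model by repeatedly stepping the weights against the gradient of mean squared error. The function name `fit_linear`, the learning rate, and the toy data are assumptions made for this example. In a multi-layer network, backpropagation is the chain-rule procedure that computes these gradients automatically; here they are derived by hand, and frameworks such as PyTorch or TensorFlow would normally do this work.

```python
def fit_linear(xs, ys, lr=0.05, epochs=2000):
    """Fit y ~ w*x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) with respect to w and b.
        grad_w = (2.0 / n) * sum((w * x + b - y) * x for x, y in zip(xs, ys))
        grad_b = (2.0 / n) * sum((w * x + b - y) for x, y in zip(xs, ys))
        # Step against the gradient; lr controls the step size.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (illustrative only).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_linear(xs, ys)
print(w, b)  # should land close to 2 and 1
```

The learning rate is the main hyperparameter here: too large and the updates diverge, too small and training needs many more epochs.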

Building Data and Feature Pipelines

Models depend on clean, correctly transformed data. Machine learning engineers build the pipelines that extract raw data from storage, apply feature engineering transformations, and deliver training-ready datasets to model training jobs. These pipelines must handle missing values, encode categorical variables, normalize numerical features, and maintain consistency between training and serving environments.

Feature stores have become a standard component in mature ML systems. They centralize feature definitions so that the same transformations used during training are applied at inference time, preventing a common source of production bugs known as training-serving skew.
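
One way to picture how shared feature definitions prevent training-serving skew is a transformation whose parameters are learned once from training data and then reused unchanged at inference time. The sketch below is a minimal stand-in for that idea, not a feature store implementation. The function names `fit_feature_params` and `transform`, and the `age` and `plan` fields, are hypothetical.

```python
from statistics import mean, pstdev


def fit_feature_params(rows, numeric_key, categorical_key):
    """Learn imputation, scaling, and encoding parameters from training rows only."""
    observed = [r[numeric_key] for r in rows if r.get(numeric_key) is not None]
    mu = mean(observed)
    sigma = pstdev(observed) or 1.0  # fall back to 1.0 for constant features
    categories = sorted(
        {r[categorical_key] for r in rows if r.get(categorical_key) is not None}
    )
    return {
        "numeric_key": numeric_key,
        "categorical_key": categorical_key,
        "mean": mu,
        "std": sigma,
        "categories": categories,
    }


def transform(row, params):
    """Apply the same transformation at training time and at serving time."""
    value = row.get(params["numeric_key"])
    if value is None:
        value = params["mean"]  # impute missing values with the training mean
    scaled = (value - params["mean"]) / params["std"]  # normalize
    category = row.get(params["categorical_key"])
    # One-hot encode; categories unseen in training map to all zeros.
    one_hot = [1.0 if category == c else 0.0 for c in params["categories"]]
    return [scaled] + one_hot


# Hypothetical training rows and a serving-time request.
train_rows = [
    {"age": 20, "plan": "free"},
    {"age": 40, "plan": "pro"},
    {"age": None, "plan": "free"},
]
params = fit_feature_params(train_rows, "age", "plan")
training_matrix = [transform(r, params) for r in train_rows]
serving_vector = transform({"age": 40, "plan": "pro"}, params)
```

Because both code paths call the same `transform` with the same stored `params`, a change to the feature logic cannot silently apply to training but not to serving.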

Deploying and Serving Models

Deployment is where much of the engineering complexity lives. A trained model needs to be packaged, versioned, and served through an API or embedded into an application. Machine learning engineers choose between real-time inference, batch prediction, and streaming architectures depending on the use case.

They containerize models using Docker, deploy them on cloud platforms like Amazon SageMaker or Google Vertex AI, and implement load balancing, autoscaling, and failover strategies. The goal is to deliver predictions reliably, with consistent latency, even under variable traffic loads.

Monitoring and Maintaining Production Systems

A model's accuracy degrades over time as the real world changes. Customer behavior shifts, product catalogs evolve, and new patterns emerge that the original training data did not capture. Machine learning engineers build monitoring systems that track prediction quality, detect data drift, and trigger retraining when performance drops below acceptable thresholds.
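
A drift check can be as simple as comparing the distribution of a feature in recent traffic against the distribution seen at training time. The sketch below uses the population stability index (PSI), one of several possible drift statistics. The function name, the bin count, and the 0.2 retraining threshold are assumptions for illustration; 0.2 is a commonly cited rule of thumb, not a universal standard.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one feature; larger values mean more drift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # Floor each proportion so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Illustrative samples: training-time values versus shifted production values.
training_values = [i / 100 for i in range(100)]
production_values = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(training_values, production_values)
RETRAIN_THRESHOLD = 0.2  # assumed threshold; tune per feature and use case
needs_retraining = psi > RETRAIN_THRESHOLD
```

In practice a check like this would run on a schedule per feature, with the result feeding an alert or a retraining job.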

This operational dimension is often grouped under the term MLOps, or LLMOps when the models being managed are large language models. It includes logging predictions, running shadow models for comparison, and maintaining rollback mechanisms to revert to a previous model version if a new deployment underperforms.
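
The shadow-model and rollback ideas can be sketched with a small in-memory registry. This is a toy illustration under stated assumptions: the `ModelRegistry` class, its method names, and the version labels are hypothetical, and a real system would persist this state and serve models over a network.

```python
class ModelRegistry:
    """Hypothetical in-memory registry; real systems persist this state."""

    def __init__(self):
        self._models = {}    # version name -> prediction callable
        self._promoted = []  # promotion history; the last entry is live

    def register(self, version, predict_fn):
        self._models[version] = predict_fn

    def promote(self, version):
        if version not in self._models:
            raise KeyError(f"unknown model version: {version}")
        self._promoted.append(version)

    def rollback(self):
        """Revert to the previously promoted version and return its name."""
        if len(self._promoted) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._promoted.pop()
        return self._promoted[-1]

    def predict(self, features, shadow=None, log=None):
        """Serve the live model; optionally log a shadow model's output."""
        live = self._promoted[-1]
        result = self._models[live](features)
        if shadow is not None and log is not None:
            # The shadow prediction is recorded but never returned to callers.
            log.append({"live": result, "shadow": self._models[shadow](features)})
        return result


registry = ModelRegistry()
registry.register("v1", lambda x: 2 * x)  # stand-ins for trained models
registry.register("v2", lambda x: 3 * x)
registry.promote("v1")

comparison_log = []
registry.predict(10, shadow="v2", log=comparison_log)  # v1 serves, v2 shadows
registry.promote("v2")
restored = registry.rollback()  # suppose v2 underperforms: revert to v1
```

Keeping the promotion history as a stack makes rollback a constant-time operation that does not require redeploying the earlier model.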

Key Skills and Tools

The machine learning engineer skill set is broad, spanning software development, applied mathematics, and infrastructure management. The role demands both depth in data science fundamentals and strong engineering execution.

Programming and Software Engineering

- Python. The dominant language for machine learning development. Engineers use it for data processing, model training, API development, and scripting automation workflows.

- C++ and Java. Used in performance-critical inference systems where Python's overhead is too high. Some production serving frameworks are written in C++ with Python bindings.

- Software engineering practices. Version control with Git, code review, testing, continuous integration, and documentation. Machine learning engineers write production code, not just research scripts.

Machine Learning Frameworks and Libraries

- PyTorch. Widely adopted for research and increasingly for production workloads. Its dynamic computation graph makes it flexible for experimentation.

- TensorFlow. Mature framework with strong production tooling, including TensorFlow Serving for model deployment and TensorFlow Lite (since renamed LiteRT) for edge devices.

- scikit-learn. Standard library for classical machine learning algorithms. Useful for baseline models and feature preprocessing.

- Hugging Face Transformers. The default starting point for natural language processing tasks built on transformer model architectures.

ML Paradigms and Theory

- Supervised learning. Classification and regression tasks where models learn from labeled examples. This covers the majority of production machine learning systems.

- Unsupervised learning. Clustering, anomaly detection, and dimensionality reduction. Useful when labeled data is unavailable or expensive to obtain.

- Reinforcement learning. Training agents to make sequential decisions through reward signals. Applied in robotics, game playing, and recommendation optimization.

- Deep learning. Neural networks with multiple layers that learn hierarchical representations. Essential for computer vision, natural language processing, and speech recognition.

Infrastructure and DevOps

- Docker and Kubernetes. Containerization and orchestration for deploying models as microservices.

- Cloud platforms. AWS, Google Cloud, and Azure each offer managed services for training, serving, and monitoring ML models.

- Data processing. Apache Spark, Apache Kafka, and Apache Airflow for batch and streaming data pipelines.

- Experiment tracking. MLflow, Weights & Biases, and Neptune for logging experiments, comparing runs, and reproducing results.

Specialized Tools

- AutoML. Automated machine learning platforms that handle model selection and hyperparameter tuning, allowing engineers to focus on data quality and system architecture.

- LangChain. A framework for building applications powered by large language models, increasingly relevant as organizations integrate generative AI into products.

- Fine-tuning toolkits. Libraries and platforms for adapting pretrained models to domain-specific tasks, reducing the data and compute required for high-quality results.

Career Path and Opportunities

The demand for machine learning engineers has grown steadily as organizations move AI projects from experimentation to production. The role offers strong compensation, diverse industry options, and clear progression pathways.

Entry Points

Most machine learning engineers enter the field through one of two routes. The first is a software engineering background with growing specialization in ML. The second is a data science or research background with increasing focus on engineering and deployment. Both paths converge on the same set of production-oriented skills.

Common entry-level titles include junior ML engineer, ML platform engineer, or data engineer with an ML focus. Some professionals begin as software engineers on teams that happen to build ML features, then transition into dedicated ML engineering roles as their expertise deepens.

Mid-Level and Senior Roles

With three to five years of experience, machine learning engineers often specialize. Some focus on a specific domain such as natural language processing, computer vision, or recommendation systems. Others move toward platform engineering, building the internal tools and infrastructure that other ML teams depend on.

Senior machine learning engineers lead the technical design of ML systems, mentor junior engineers, and make architectural decisions that affect reliability, cost, and performance. They are expected to evaluate tradeoffs rigorously and communicate technical constraints to product and business stakeholders.

Leadership and Staff-Level Positions

At the staff or principal level, machine learning engineers influence technical strategy across an organization. They define standards for model development, champion best practices in MLOps, and evaluate whether new ML capabilities are feasible and worthwhile.

Some transition into engineering management, leading teams of ML engineers and data scientists. Others move into applied research roles, bridging the gap between academic advances and practical deployment. A smaller number become ML architects, designing the end-to-end systems that support an organization's entire AI portfolio.

Industry Landscape

Machine learning engineers work across virtually every sector. Technology companies remain the largest employers, but finance, healthcare, autonomous vehicles, e-commerce, manufacturing, and defense all employ ML engineers in growing numbers. The diversity of applications means that engineers can specialize in problems that match their interests, from medical image analysis to real-time bidding systems.

Challenges in the Role

Machine learning engineering presents a distinct set of challenges that go beyond typical software development problems. Understanding these difficulties helps set realistic expectations for anyone entering the field.

Data Quality and Availability

Production ML systems depend on large volumes of high-quality data. In practice, data is often incomplete, mislabeled, inconsistently formatted, or biased. Engineers spend considerable time building validation checks, cleaning pipelines, and working with domain experts to resolve ambiguities. Poor data quality is among the most common reasons ML projects fail, and it is not a problem that better algorithms can fix.

Reproducibility and Debugging

Machine learning systems are inherently harder to debug than traditional software. A model's behavior depends on the data it was trained on, the random seeds used during initialization, the order of training examples, and dozens of hyperparameter choices. Reproducing a specific result requires careful tracking of every variable.
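
The effect of seeds on reproducibility can be shown with the standard library alone. In the sketch below, `train_run` is a hypothetical stand-in for a training job: the random draws play the role of weight initialization and the shuffle plays the role of example ordering. Real frameworks have their own seeding calls (for example `torch.manual_seed` in PyTorch), and full determinism on GPUs may need additional settings.

```python
import random


def train_run(seed, data):
    """Simulate the random parts of a training run with an isolated generator."""
    rng = random.Random(seed)  # local generator, so no hidden global state
    weights = [rng.gauss(0, 1) for _ in range(3)]  # stands in for weight init
    order = list(data)
    rng.shuffle(order)  # stands in for the order of training examples
    # Recording the seed next to the result is what makes the run repeatable.
    return {"seed": seed, "weights": weights, "order": order}


run_a = train_run(42, range(10))
run_b = train_run(42, range(10))
run_c = train_run(7, range(10))

same_seed_matches = run_a == run_b
different_seed_matches = run_a["weights"] == run_c["weights"]
```

Logging the seed with every experiment, alongside the data version and hyperparameters, is what allows a specific result to be regenerated later.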

When a model underperforms in production, isolating the root cause often involves investigating data pipeline changes, feature engineering bugs, and infrastructure issues simultaneously.

Machine Learning Bias

Models trained on historical data can encode and amplify existing biases. A hiring model trained on past decisions may penalize candidates from underrepresented groups. A credit scoring model may disadvantage applicants from certain geographic areas. Machine learning engineers must evaluate fairness metrics, implement bias mitigation techniques, and advocate for responsible development practices within their teams.
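
As one example of a fairness metric, the sketch below computes the demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. It is only one of several fairness definitions, and which one is appropriate depends on the application. The function names and the toy predictions are assumptions for illustration.

```python
def selection_rates(predictions, groups):
    """Return the share of positive predictions for each group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest selection rate; 0 means parity."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Toy binary predictions (1 = positive outcome) for two hypothetical groups.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(predictions, groups)
```

A large gap does not prove the model is unfair on its own, but it is a signal that the predictions deserve closer review before deployment.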

Bridging Research and Production

Academic papers often present results under idealized conditions: clean datasets, unlimited compute, and no latency requirements. Translating those results into production systems requires significant adaptation. A state-of-the-art model that takes minutes to generate a single prediction is useless for a real-time application. Machine learning engineers must optimize models through techniques like quantization, pruning, and distillation to meet the practical constraints of deployed systems.
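
To make quantization concrete, the sketch below applies symmetric 8-bit quantization to a short list of weights: each float is mapped to an integer in the range -127 to 127 using a single scale factor, then mapped back to measure the rounding error. This is a simplified illustration with hypothetical function names. Production toolchains typically quantize per tensor or per channel and may also calibrate activations.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]  # illustrative values only
quantized, scale = quantize_int8(weights)
restored_weights = dequantize(quantized, scale)

# The rounding error per weight is bounded by half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored_weights))
```

Storing one byte per weight instead of four cuts model size by roughly a factor of four, at the cost of the small reconstruction error measured above.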

Keeping Pace with Rapid Change

The field evolves at an extraordinary pace. New model architectures, training techniques, frameworks, and deployment patterns emerge regularly. Engineers must allocate time for continuous learning while also delivering on project commitments. Falling behind on foundational advances can make it harder to evaluate new tools and approaches objectively.

How to Become a Machine Learning Engineer

There is no single prescribed path, but successful machine learning engineers typically build their skills through a combination of formal education, deliberate practice, and progressive job experience.

Build a Strong Foundation

A bachelor's degree in computer science, mathematics, statistics, or a related quantitative field provides the necessary theoretical grounding. Coursework in linear algebra, calculus, probability, algorithms, and data structures forms the mathematical backbone that machine learning theory rests on.

Graduate degrees are common in this field but not universally required. A master's degree in machine learning, computer science, or data science strengthens both theoretical depth and research skills. A PhD is advantageous for roles that involve developing novel algorithms, but many production-focused positions prioritize engineering experience over academic credentials.

Develop Core Technical Skills

Start with Python and a modern ML framework. Build small projects that cover the full lifecycle: data collection, preprocessing, model training, evaluation, and deployment. Work through the fundamentals of supervised learning, unsupervised learning, and deep learning before specializing.

Learn software engineering practices alongside ML techniques. Version control, testing, code review, and continuous integration are just as important for a machine learning engineer as understanding loss functions and optimization algorithms. The ability to write clean, maintainable, production-quality code separates ML engineers from ML researchers.

Gain Hands-On Experience

Entry-level positions as a software engineer, data analyst, or data engineer provide exposure to production systems and real-world data problems. These roles build the operational instincts that are essential for machine learning engineering.

Personal projects and open-source contributions demonstrate practical capability. Building an end-to-end ML application, from data ingestion through model serving, provides more useful experience than completing isolated tutorials. Participating in ML competitions on platforms like Kaggle develops modeling intuition, though production engineering skills require separate practice.

Specialize and Grow

Once the foundational skills are solid, choose a specialization based on interest and market demand. Natural language processing, computer vision, recommendation systems, and MLOps are all high-demand areas with distinct skill requirements.

Stay current by reading research papers, following industry practitioners, and experimenting with new tools. The machine learning engineer who understands both the latest transformer model architectures and the infrastructure required to serve them at scale is likely to remain in demand.

| Step | What to do | Key detail |
|---|---|---|
| Build a Strong Foundation | Earn a bachelor's degree in computer science, mathematics, statistics, or a related quantitative field. | A master's degree in machine learning, computer science, or data science adds theoretical depth. |
| Develop Core Technical Skills | Start with Python and a modern ML framework. | Build small projects that cover the full lifecycle: data collection, preprocessing, model training, evaluation, and deployment. |
| Gain Hands-On Experience | Take an entry-level position as a software engineer, data analyst, or data engineer. | Build end-to-end personal projects and contribute to open source. |
| Specialize and Grow | Once the foundational skills are solid, choose a specialization. | Natural language processing, computer vision, recommendation systems, and MLOps. |

FAQ

What is the difference between a machine learning engineer and a data scientist?

A machine learning engineer focuses on building, deploying, and maintaining ML systems in production. A data scientist focuses on analysis, experimentation, and generating insights from data. Data scientists typically work in notebooks and communicate findings through reports and visualizations. Machine learning engineers write production code, build pipelines, and optimize systems for reliability and scale. In smaller organizations, one person may handle both sets of responsibilities.

What programming languages do machine learning engineers use?

Python is the primary language for nearly all machine learning work. It has the richest ecosystem of ML libraries and frameworks, including PyTorch, TensorFlow, and scikit-learn. SQL is essential for data access and manipulation. C++ and Java appear in performance-sensitive applications where inference speed is critical.

Familiarity with shell scripting and infrastructure-as-code tools like Terraform supports deployment and operations workflows.

Do I need a master's degree to become a machine learning engineer?

A master's degree is helpful but not strictly required. Many machine learning engineers hold bachelor's degrees in computer science or related fields and built their ML expertise through work experience, online courses, and self-directed projects. A graduate degree provides deeper theoretical knowledge and can accelerate career progression, particularly for roles at research-oriented organizations.

Practical engineering ability and demonstrated project work carry significant weight in hiring decisions.

What is the typical salary range for a machine learning engineer?

Compensation varies by geography, experience, and industry. In the United States, entry-level machine learning engineers typically earn between $100,000 and $140,000 annually. Mid-level engineers with three to five years of experience often fall in the $140,000 to $200,000 range. Senior and staff-level engineers at major technology companies can earn total compensation exceeding $300,000, including base salary, bonuses, and equity. The role consistently ranks among the highest-paid positions in software engineering.

How is machine learning engineering different from software engineering?

Machine learning engineering is a specialization within software engineering. Both roles require strong programming skills, system design knowledge, and software development best practices. The key difference is that machine learning engineers work with probabilistic systems whose behavior is shaped by data rather than purely by code.

This introduces unique challenges around data quality, model evaluation, experiment tracking, and production monitoring that traditional software engineering does not address. Machine learning engineers also need a working knowledge of statistics and machine learning theory that goes beyond what most software engineering roles require.

Further reading

Transformer Model: What It Is, How It Works, and Why It Matters

Learn what a transformer model is, how the self-attention mechanism works, explore key architectures like BERT and GPT, and discover practical use cases across AI.

AI Prompt Engineer: Role, Skills, and Salary

AI prompt engineer role explained: daily responsibilities, core skills, salary ranges, career paths, and how organizations hire for this emerging position.

AutoML (Automated Machine Learning): What It Is and How It Works

AutoML automates the end-to-end machine learning pipeline. Learn how automated machine learning works, its benefits, limitations, and real-world use cases.

Generative Model: How It Works, Types, and Use Cases

Learn what a generative model is, how it learns to produce new data, and where it is applied. Explore types like GANs, VAEs, diffusion models, and transformers.

AgentGPT: What It Is, How It Works, and Practical Use Cases

Understand what AgentGPT is, how its autonomous agent loop works, what it can and cannot do, how it compares to other platforms, and practical tips for getting value from it.

What Is an Expert System? Definition, Architecture, and Examples

Learn what an expert system is, how it works, its core architecture, real-world examples across industries, and how it compares to machine learning.