What Is Machine Learning? How It Works, Types, and Use Cases

Machine learning enables systems to learn from data and improve without explicit programming. Explore how it works, key types, real-world applications, and how to get started.

What Is Machine Learning?

Machine learning is a branch of artificial intelligence that enables computer systems to learn from data and improve their performance on a given task without being explicitly programmed for every scenario. Instead of following rigid, hand-coded rules, a machine learning model identifies patterns in training data and uses those patterns to make predictions or decisions on new, unseen inputs.

The core idea is straightforward. A model receives a dataset, adjusts its internal parameters to minimize prediction errors, and gradually becomes more accurate as it processes more examples. This learning process is driven by mathematical optimization, where an algorithm iteratively refines the model's parameters until its outputs closely match the expected results.

Machine learning differs from traditional software engineering in a fundamental way. In conventional programming, a developer writes explicit instructions for every possible input. In machine learning, the developer provides data and an algorithm, and the system derives its own rules. This makes machine learning especially valuable for problems where the underlying rules are too complex to articulate manually, such as recognizing speech, detecting fraud, or recommending content.

The field has matured from an academic curiosity into a foundational technology that powers search engines, medical diagnostics, autonomous vehicles, and financial systems. Its growth has been accelerated by three converging factors: the availability of massive datasets, advances in computing hardware, and improvements in algorithmic techniques developed within data science research.

How Machine Learning Works

Data Collection and Preparation

Every machine learning project begins with data. The quality and quantity of training data determine the upper bound of model performance. Raw data rarely arrives in a form that algorithms can consume directly, so preparation is a critical step.

Data preparation involves cleaning (removing duplicates, handling missing values, correcting errors), transforming features into numerical representations, and normalizing values so that no single feature dominates the learning process. Data splitting is standard practice: the dataset is divided into training, validation, and test subsets to evaluate how well the model generalizes beyond the examples it was trained on.
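
The preparation steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production pipeline: the records, the 60/20/20 split fractions, and the helper names `min_max_normalize` and `split_dataset` are all assumptions made for the example.

```python
import random

def min_max_normalize(column):
    """Rescale a list of numbers to the range [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:  # constant feature: avoid division by zero
        return [0.0 for _ in column]
    return [(x - lo) / (hi - lo) for x in column]

def split_dataset(rows, train_frac=0.6, val_frac=0.2, seed=0):
    """Shuffle rows and split them into train, validation, and test lists."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    n_train = int(len(rows) * train_frac)
    n_val = int(len(rows) * val_frac)
    train = rows[:n_train]
    val = rows[n_train:n_train + n_val]
    test = rows[n_train + n_val:]  # whatever remains
    return train, val, test

# Hypothetical raw records: (square_feet, price). None marks a missing value.
raw = [(1400, 240000), (1600, 280000), (1400, 240000), (None, 310000),
       (2000, 350000), (1100, 199000), (1850, 330000), (2400, 405000)]

# Cleaning: drop rows with missing values, then drop exact duplicates.
clean = [r for r in raw if None not in r]
clean = list(dict.fromkeys(clean))  # keeps the first occurrence of each row

# Normalizing: rescale one feature so its values lie between 0 and 1.
sqft = min_max_normalize([r[0] for r in clean])

# Splitting: hold out validation and test rows.
train, val, test = split_dataset(clean)
```

In practice the scaling range is usually computed on the training subset only and then reused for the validation and test subsets, so that no information from held-out data leaks into training.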

Model Training

During training, the algorithm processes the training data and adjusts the model's internal parameters to minimize a loss function, which quantifies the difference between predicted and actual outcomes. For a linear regression model, this might mean finding the line that best fits a scatter plot of data points. For a neural network, it involves tuning millions of weights across interconnected layers.

The optimization process typically relies on gradient descent, an algorithm that calculates how much each parameter contributes to the overall error and adjusts each one in the direction that reduces the loss. The backpropagation algorithm makes this process efficient for neural networks by propagating error signals backward through the layers.

Training proceeds over multiple passes through the data called epochs. In each epoch, the model sees the full training dataset, typically in smaller batches, and refines its parameters. The learning rate controls how large each adjustment step is. Too high a learning rate causes the model to overshoot good solutions or fail to converge. Too low, and training becomes impractically slow.
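
As a rough sketch of the training loop described above, the following fits a one-variable linear regression with batch gradient descent on mean squared error. The data, the function name `train_linear_regression`, and the default `learning_rate` and `epochs` values are assumptions chosen for illustration.

```python
def train_linear_regression(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction errors for the current parameters.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum(error^2) with respect to w and b.
        grad_w = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Step against the gradient, scaled by the learning rate.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Toy data generated from y = 2x + 1, so the fit should recover w = 2, b = 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = train_linear_regression(xs, ys)
```

On this particular data, a much larger learning rate (for example 0.2) makes each update overshoot and the parameters diverge, while a much smaller one needs far more epochs to get close to the same answer.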

Evaluation and Deployment

Once a model is trained, it is evaluated against the held-out test set to measure its performance on data it has never seen. Common metrics include accuracy, precision, recall, F1 score, and mean squared error, depending on whether the task is classification or regression.
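
The metrics named above follow directly from counts of correct and incorrect predictions. The sketch below computes them by hand for a binary classifier and a small regression example. The labels and predictions are made up, and `classification_metrics` is a hypothetical helper rather than a library function.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

def mean_squared_error(y_true, y_pred):
    """Average squared difference, used for regression tasks."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

# Made-up test-set labels and model predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
mse = mean_squared_error([3.0, 5.0], [2.0, 7.0])
```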

A model that performs well on training data but poorly on test data is overfitting, meaning it has memorized the training examples rather than learning generalizable patterns. Techniques like regularization and early stopping help mitigate overfitting, while cross-validation helps detect it. After evaluation confirms acceptable performance, the model is deployed into a production environment where it processes real-world inputs and returns predictions.
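
Early stopping, one of the techniques mentioned above, can be sketched as a rule applied to the validation loss recorded after each epoch. The loss values and the `patience` parameter below are illustrative assumptions. A real training loop would also save the model weights from the best epoch.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the epoch with the best validation loss,
    stopping once the loss has not improved for `patience` epochs."""
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break  # validation loss has stopped improving
    return best_epoch

# Hypothetical validation losses: they improve, then rise as overfitting sets in.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61]
best = early_stopping_epoch(val_losses)
```

Here training would stop at epoch 5, two epochs after the lowest validation loss at epoch 3, and the epoch 3 weights would be the ones to keep.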

Types of Machine Learning

Supervised Learning

Supervised learning is the most widely used paradigm. The model trains on a labeled dataset, where each input example is paired with a known correct output. The objective is to learn a mapping function from inputs to outputs that generalizes to new examples.

Classification and regression are the two main supervised learning tasks. Classification assigns inputs to discrete categories, such as identifying whether an email is spam or legitimate. Regression predicts continuous numerical values, such as forecasting house prices based on features like square footage and location.

Common supervised learning algorithms include decision trees, support vector machines, random forests, and gradient-boosted ensembles. For complex tasks involving images, text, or audio, deep learning models built on neural networks are the standard approach.
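
To make the idea of learning a mapping from labeled examples concrete, here is a minimal sketch of a decision stump, which is a decision tree with a single split. The feature (a count of suspicious words per email) and the labels are invented for illustration. Real decision tree implementations consider many features, both split directions, and multiple levels.

```python
def fit_stump(xs, labels):
    """Find the threshold on one feature that misclassifies the fewest
    training examples, predicting 1 when x > threshold and 0 otherwise."""
    values = sorted(set(xs))
    # Candidate thresholds: midpoints between consecutive distinct values.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]
    best_threshold, best_errors = None, len(xs) + 1
    for t in candidates:
        errors = sum(1 for x, y in zip(xs, labels) if (1 if x > t else 0) != y)
        if errors < best_errors:
            best_threshold, best_errors = t, errors
    return best_threshold

def predict(x, threshold):
    """Classify a new input with the learned threshold."""
    return 1 if x > threshold else 0

# Hypothetical labeled data: suspicious-word count per email, 1 = spam.
word_counts = [0, 1, 1, 2, 5, 6, 7, 9]
is_spam = [0, 0, 0, 0, 1, 1, 1, 1]
threshold = fit_stump(word_counts, is_spam)
```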

Unsupervised Learning

Unsupervised learning works with unlabeled data. The model receives inputs without corresponding outputs and must discover structure or patterns on its own. This approach is useful when labeled data is scarce or when the goal is exploratory analysis rather than prediction.

Clustering is a primary unsupervised technique. Algorithms like k-means, DBSCAN, and hierarchical clustering group similar data points together based on feature similarity. Customer segmentation, anomaly detection, and topic modeling are common applications.
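
A bare-bones version of k-means (Lloyd's algorithm) shows how clustering groups points without labels. The points and the starting centroids below are assumptions for the example. Library implementations typically choose starting centroids randomly or with k-means++ and stop once assignments no longer change.

```python
def kmeans(points, centroids, iterations=10):
    """Cluster 2-D points with Lloyd's algorithm from the given centroids."""
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            distances = [(p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2
                         for c in centroids]
            clusters[distances.index(min(distances))].append(p)
        # Update step: move each centroid to the mean of its cluster.
        new_centroids = []
        for c, cluster in zip(centroids, clusters):
            if cluster:
                new_centroids.append(
                    (sum(p[0] for p in cluster) / len(cluster),
                     sum(p[1] for p in cluster) / len(cluster)))
            else:
                new_centroids.append(c)  # leave an empty cluster's centroid alone
        centroids = new_centroids
    return centroids, clusters

# Two visibly separate groups of hypothetical points.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5),
          (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, clusters = kmeans(points, centroids=[(1.0, 1.0), (8.0, 8.0)])
```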

Dimensionality reduction is another unsupervised method. Techniques like principal component analysis (PCA) and t-SNE compress high-dimensional data into fewer dimensions while preserving meaningful relationships. This is valuable for visualization and for reducing computational costs in downstream tasks.
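
The core of PCA is finding the direction along which the data varies most. The sketch below finds only the first principal component, using power iteration on the covariance matrix, and projects 2-D points onto it. Power iteration is a simplification chosen to keep the example dependency-free (libraries generally use a singular value decomposition), and the data is made up.

```python
def first_principal_component(rows, iterations=100):
    """Return the leading principal direction and the 1-D projection of rows."""
    n, d = len(rows), len(rows[0])
    # Center each feature on its mean.
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix of the centered data.
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration: repeatedly multiply by cov and renormalize, which
    # converges to the eigenvector with the largest eigenvalue.
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    # Project each centered row onto the principal direction.
    projected = [sum(c[j] * v[j] for j in range(d)) for c in centered]
    return v, projected

# Hypothetical 2-D points lying close to the line y = x.
data = [(2.0, 1.9), (3.0, 3.2), (4.0, 3.9), (5.0, 5.1)]
direction, projected = first_principal_component(data)
```

Because the points lie near a diagonal line, the recovered direction has two roughly equal components, and the single projected coordinate preserves most of the spread in the original two dimensions.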

Reinforcement Learning

Reinforcement learning takes a fundamentally different approach. An agent interacts with an environment, takes actions, and receives rewards or penalties based on the outcomes. The goal is to learn a policy that maximizes cumulative reward over time.

Unlike supervised learning, reinforcement learning does not rely on labeled examples. The agent discovers optimal behavior through trial and error. This makes it well suited for sequential decision-making problems such as game playing, robotic control, resource allocation, and autonomous navigation.
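
Trial-and-error learning can be illustrated with tabular Q-learning, one common reinforcement learning algorithm, on a toy environment. Everything about the environment below is an assumption for the example: a corridor of five cells where the agent starts at the left end and receives a reward of 1 for reaching the right end.

```python
import random

def q_learning(n_states=5, episodes=500, alpha=0.5, gamma=0.9,
               epsilon=0.2, seed=0):
    """Learn action values for a corridor; actions are 0 = left, 1 = right."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]
    goal = n_states - 1
    for _ in range(episodes):
        state = 0
        while state != goal:
            # Epsilon-greedy: explore occasionally (and on ties), else exploit.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            # Environment step: moving left from cell 0 stays in place.
            next_state = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if next_state == goal else 0.0
            # The goal is terminal, so it contributes no future value.
            future = 0.0 if next_state == goal else max(q[next_state])
            # Move the estimate toward reward plus discounted future value.
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
            state = next_state
    # Greedy policy for every non-terminal state.
    policy = [0 if q[s][0] > q[s][1] else 1 for s in range(goal)]
    return q, policy

q_table, policy = q_learning()
```

No example ever tells the agent that moving right is correct. The preference emerges because actions leading toward the reward accumulate higher estimated values.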

Reinforcement learning produced some of the field's most notable achievements, including systems that defeated world champions in Go and outplayed the strongest chess engines. It is also applied in practical domains like data center energy optimization, automated trading strategies, and personalized content recommendation.

Semi-Supervised and Self-Supervised Learning

Semi-supervised learning combines a small amount of labeled data with a large pool of unlabeled data. The labeled examples guide the model, while the unlabeled data helps it learn the underlying data distribution. This approach is practical in domains where labeling is expensive, such as medical imaging and natural language annotation.

Self-supervised learning generates its own labels from the data. A language model, for instance, can mask random words in a sentence and train itself to predict the missing tokens, as BERT does, or predict each next token from the text that precedes it, as GPT does. These objectives power the pre-training phase of transformer models, enabling them to learn rich representations from vast amounts of unlabeled text before being fine-tuned for specific tasks.
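
The sketch below shows how training pairs can be derived from raw text with no human labeling, using two common objectives: masking tokens (BERT-style) and predicting the next token (GPT-style). The whitespace tokenization, the `[MASK]` placeholder string, and the 15% masking probability are simplifying assumptions for illustration.

```python
import random

def make_masked_example(tokens, mask_prob=0.15, seed=0, mask_token="[MASK]"):
    """Hide random tokens; the hidden originals become the labels."""
    rng = random.Random(seed)
    inputs, targets = [], {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            inputs.append(mask_token)
            targets[i] = tok  # position -> token the model must recover
        else:
            inputs.append(tok)
    return inputs, targets

def make_next_token_examples(tokens):
    """Pair every prefix of the text with the token that follows it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

tokens = "the model learns patterns from unlabeled text".split()
masked_inputs, masked_targets = make_masked_example(tokens, mask_prob=0.3)
next_token_pairs = make_next_token_examples(tokens)
```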

| Type | Description | Best For |
|---|---|---|
| Supervised Learning | Trains on labeled data, where each input is paired with a known correct output. | Classification and regression, such as spam detection and house price forecasting |
| Unsupervised Learning | Discovers structure in unlabeled data without corresponding outputs. | Clustering, anomaly detection, visualization, and dimensionality reduction |
| Reinforcement Learning | An agent learns a policy through trial and error, guided by rewards and penalties. | Game playing, robotic control, resource allocation, and autonomous navigation |
| Semi-Supervised and Self-Supervised Learning | Combines a small amount of labeled data with a large pool of unlabeled data, or generates labels from the data itself. | Domains where labeling is expensive, such as medical imaging and natural language annotation |

Machine Learning Use Cases

Healthcare and Medical Diagnostics

Machine learning models analyze medical images to detect tumors, fractures, and retinal diseases with accuracy that rivals trained specialists. Beyond imaging, predictive modeling helps hospitals forecast patient deterioration, optimize staffing, and identify individuals at high risk for chronic conditions.

Drug discovery benefits from machine learning's ability to screen molecular compounds at scale. Models predict how candidate molecules will interact with biological targets, dramatically reducing the time and cost of bringing new therapies to clinical trials.

Finance and Fraud Detection

Banks and payment processors use machine learning to flag fraudulent transactions in real time. Models trained on historical transaction data learn the patterns of legitimate behavior and identify deviations that suggest fraud. These systems process thousands of transactions per second while maintaining low false-positive rates.

Credit scoring, algorithmic trading, risk assessment, and insurance underwriting all depend on machine learning models that extract signals from structured financial data. The ability to process large volumes of data and detect subtle correlations gives machine learning a clear advantage over manual analysis.

Natural Language Processing

Natural language processing (NLP) is one of the most visible machine learning application areas. Machine learning powers translation services, chatbots, voice assistants, text summarization, and sentiment analysis. Transformer-based models have elevated NLP capabilities to the point where systems can generate coherent text, answer complex questions, and summarize lengthy documents.

Organizations are increasingly exploring the distinctions between AI and generative AI to determine how these NLP capabilities fit into their products and workflows.

Autonomous Vehicles and Robotics

Self-driving vehicles rely on machine learning for perception (identifying pedestrians, vehicles, lane markings), prediction (anticipating the behavior of other road users), and planning (determining the safest route). These systems fuse data from cameras, lidar, and radar using deep learning architectures.

In manufacturing and logistics, machine learning enables robotic arms to handle variable objects, drones to navigate complex environments, and automated systems to optimize warehouse operations. Reinforcement learning is especially valuable here, allowing robots to learn physical tasks through simulated practice.

Education and Personalized Learning

Machine learning enables adaptive learning platforms that adjust content difficulty based on individual learner performance. Models analyze response patterns, time on task, and error frequency to estimate proficiency and recommend the most effective next activity.

Automated essay scoring, intelligent tutoring systems, and curriculum recommendation engines all use machine learning to personalize education at scale. A data scientist working in educational technology might build models that predict dropout risk or identify learners who need additional support before they fall behind.

Recommendation Systems

Streaming platforms, e-commerce sites, and social media feeds rely on machine learning recommendation engines. These systems analyze user behavior, preferences, and contextual signals to surface relevant content, products, or connections.

Collaborative filtering, content-based filtering, and hybrid approaches each use different signals to generate recommendations. The scale of these systems is immense. A single platform might serve personalized recommendations to hundreds of millions of users daily, with each recommendation produced by a machine learning model processing user and item features in real time.
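
As a small-scale illustration of collaborative filtering, the sketch below recommends unseen items to a user by weighting other users' ratings by how similar their tastes are. The users, items, and ratings are invented, and treating unrated items as zero in the cosine similarity is one simple convention among several.

```python
from math import sqrt

def cosine_similarity(a, b):
    """Cosine similarity between two rating dicts (missing ratings count as 0)."""
    dot = sum(a[item] * b[item] for item in a if item in b)
    norm_a = sqrt(sum(r * r for r in a.values()))
    norm_b = sqrt(sum(r * r for r in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def recommend(target, ratings, top_n=1):
    """Rank items the target has not rated by similarity-weighted ratings."""
    scores, weights = {}, {}
    for other, other_ratings in ratings.items():
        if other == target:
            continue
        sim = cosine_similarity(ratings[target], other_ratings)
        if sim <= 0:
            continue  # ignore users with no overlap in taste
        for item, rating in other_ratings.items():
            if item not in ratings[target]:
                scores[item] = scores.get(item, 0.0) + sim * rating
                weights[item] = weights.get(item, 0.0) + sim
    predicted = {item: scores[item] / weights[item] for item in scores}
    return sorted(predicted, key=predicted.get, reverse=True)[:top_n]

# Hypothetical ratings on a 1-5 scale.
ratings = {
    "ana": {"drama_a": 5, "drama_b": 4, "comedy_a": 1},
    "ben": {"drama_a": 5, "drama_b": 5, "drama_c": 5, "comedy_b": 1},
    "cho": {"comedy_a": 5, "comedy_b": 5, "drama_a": 1, "drama_c": 2},
}
suggestions = recommend("ana", ratings)
```

Ana's ratings resemble Ben's far more than Cho's, so Ben's high rating of `drama_c` dominates and that item is recommended first.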

Challenges and Limitations

Data Quality and Bias

Machine learning models are only as good as the data they learn from. Biased training data produces biased predictions. If a hiring model is trained on historical decisions that favored certain demographics, it will replicate and potentially amplify those patterns. Machine learning bias is a well-documented challenge that requires careful dataset curation, fairness auditing, and ongoing monitoring.
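
One simple check that a fairness audit might include is comparing how often a model selects candidates from each group. The sketch below computes per-group selection rates and their gap, sometimes called the demographic parity difference. The predictions and group labels are fabricated for illustration, and this is only one of several fairness measures. Which one is appropriate depends on the application.

```python
def selection_rates(predictions, groups):
    """Fraction of positive predictions (1 = selected) within each group."""
    totals, selected = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + pred
    return {g: selected[g] / totals[g] for g in totals}

def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical hiring-model outputs for two groups of five applicants each.
predictions = [1, 1, 1, 0, 1, 0, 0, 0, 1, 0]
groups = ["a"] * 5 + ["b"] * 5
rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(predictions, groups)
```

A gap near zero means the groups are selected at similar rates. A large gap, as in this made-up data, is a signal to investigate the training data and features further.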

Data quality issues extend beyond bias. Missing values, inconsistent labeling, and unrepresentative samples all degrade model performance. Organizations often underestimate the effort required to build and maintain high-quality datasets.

Interpretability and Explainability

Many high-performing machine learning models operate as black boxes. A deep neural network with millions of parameters can make accurate predictions without providing human-readable explanations for its reasoning. This lack of transparency is problematic in regulated industries like healthcare, finance, and criminal justice, where stakeholders need to understand why a decision was made.

Research into explainable AI has produced tools like SHAP and LIME that approximate feature importance for individual predictions. Decision trees and linear models are inherently interpretable but often sacrifice accuracy on complex tasks. Choosing the right balance between performance and explainability depends on the application's requirements.

Overfitting and Generalization

A model that fits the training data too closely may fail to generalize to new inputs. This overfitting problem is especially acute when the training dataset is small relative to the model's complexity. Regularization techniques (L1, L2, dropout), cross-validation, and ensemble methods help, but they do not guarantee robust generalization.

The related problem of distribution shift occurs when the data a model encounters in production differs from its training data. A fraud detection model trained on last year's transaction patterns may perform poorly when fraudsters adopt new tactics. Continuous monitoring and periodic retraining are necessary to maintain performance.

Computational Resources

Training large machine learning models requires significant computational investment. Deep learning models in particular demand GPUs or TPUs and can take days or weeks to train. Cloud computing has made these resources more accessible, but costs accumulate quickly for organizations running multiple experiments or serving models at scale.

AutoML platforms partially address this by automating hyperparameter tuning, architecture search, and model selection, reducing the computational overhead of manual experimentation. However, the most demanding applications still require substantial infrastructure.

How to Get Started with Machine Learning

Building machine learning competence requires a combination of theoretical understanding, practical skills, and domain knowledge. The following steps provide a structured path.

- Learn the mathematical foundations. Linear algebra, calculus, probability, and statistics are the theoretical pillars. Understanding how optimization algorithms work, why Bayes' theorem matters for probabilistic models, and how statistical distributions describe data will make every subsequent concept clearer.

- Master a programming language. Python is the standard language for machine learning. Libraries like scikit-learn, pandas, and NumPy cover data manipulation and classical algorithms. For deep learning, frameworks like PyTorch and TensorFlow provide the tools to build and train neural networks.

- Start with supervised learning projects. Begin with well-documented datasets like Iris (classification), California Housing (regression, a common replacement for the Boston Housing dataset that scikit-learn has removed), or MNIST (image classification). These datasets have clear baselines, extensive tutorials, and straightforward evaluation metrics that help build confidence.

- Progress to real-world datasets. Kaggle competitions and open data repositories offer messier, more realistic data. Working with imperfect data teaches essential skills in data cleaning, feature engineering, and model selection that textbook datasets do not require.

- Study model evaluation rigorously. Understand confusion matrices, ROC curves, precision-recall trade-offs, and cross-validation. Knowing how to evaluate a model properly is as important as knowing how to build one.

- Explore career pathways. A machine learning engineer focuses on building and deploying models in production systems. A data scientist applies machine learning within broader analytical workflows. Both roles require strong programming skills and statistical literacy, but they emphasize different aspects of the machine learning lifecycle.

- Stay current with the field. Machine learning evolves rapidly. Reading research papers, following open-source projects, and experimenting with new techniques keeps practitioners informed. Resources on topics like deep learning and transformer architectures are essential for anyone working at the frontier.
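
For the evaluation step in the list above, cross-validation is worth implementing by hand at least once. The following sketch produces the train and test index sets for k-fold cross-validation. The function name `k_fold_indices` is hypothetical, and the example assumes the data has already been shuffled.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k folds."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size

# Ten samples split into three folds of sizes 4, 3, and 3.
folds = list(k_fold_indices(10, 3))
```

Each sample lands in exactly one test fold, so training and scoring a model once per fold and averaging the scores gives a performance estimate that uses every sample for evaluation.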

FAQ

What is the difference between machine learning and artificial intelligence?

Artificial intelligence is the broader field concerned with creating systems that can perform tasks requiring human-like intelligence. Machine learning is a subset of AI that focuses specifically on algorithms that learn from data. All machine learning is AI, but not all AI involves machine learning. Rule-based expert systems and symbolic reasoning are examples of AI approaches that do not use machine learning.

Does machine learning require big data?

Not always. Some algorithms work effectively with modest datasets. Linear regression, decision trees, and support vector machines can produce useful models with hundreds or thousands of examples. Deep learning models typically require larger datasets because of their higher parameter counts. Techniques like transfer learning and data augmentation help when labeled data is limited.

How long does it take to build a machine learning model?

The timeline depends on the problem complexity, data availability, and desired accuracy. A simple classification model on clean, structured data can be built in hours. A production-grade system processing unstructured data might take months, with most of that time spent on data collection, cleaning, feature engineering, and iterative evaluation rather than on the modeling itself.

What programming languages are used for machine learning?

Python dominates the machine learning ecosystem due to its extensive library support (scikit-learn, TensorFlow, PyTorch, pandas, NumPy). R remains popular in statistical modeling and academic research. Julia is gaining traction for performance-critical applications. Java, Scala, and C++ are used in production systems where latency and scalability are primary concerns.

Can machine learning models make mistakes?

Yes. Machine learning models produce statistical estimates, not guaranteed answers. They make predictions based on patterns in training data, and those predictions carry uncertainty. Models can produce incorrect outputs when they encounter data that differs significantly from their training distribution, when training data contains errors or biases, or when the model overfits to noise rather than signal. Robust evaluation, monitoring, and human oversight are essential safeguards.

Further reading

What Is Case-Based Reasoning? Definition, Examples, and Practical Guide

Learn what case-based reasoning (CBR) is, how the retrieve-reuse-revise-retain cycle works, and see real examples across industries.

%201.avif)

9 Best AI Course Curriculum Generators for Educators 2026

Discover the 9 best AI course curriculum generators to simplify lesson planning, personalize courses, and engage students effectively. Explore Teachfloor, ChatGPT, Teachable, and more.

Linear Regression: Definition, How It Works, and Practical Use Cases

Linear regression models the relationship between variables by fitting a straight line to data. Learn how it works, its types, use cases, and implementation steps.

%201.avif)

AI Watermarking: What It Is, Benefits, and Limits

Understand AI watermarking, how it works for text and images, its benefits for content authenticity, and the practical limits that affect real-world deployment.

AgentOps: Tools and Practices for Managing AI Agents in Production

Learn what AgentOps is, why it matters for AI agent deployments, the core components of observability, cost tracking, and governance, and how to implement AgentOps in your organization.

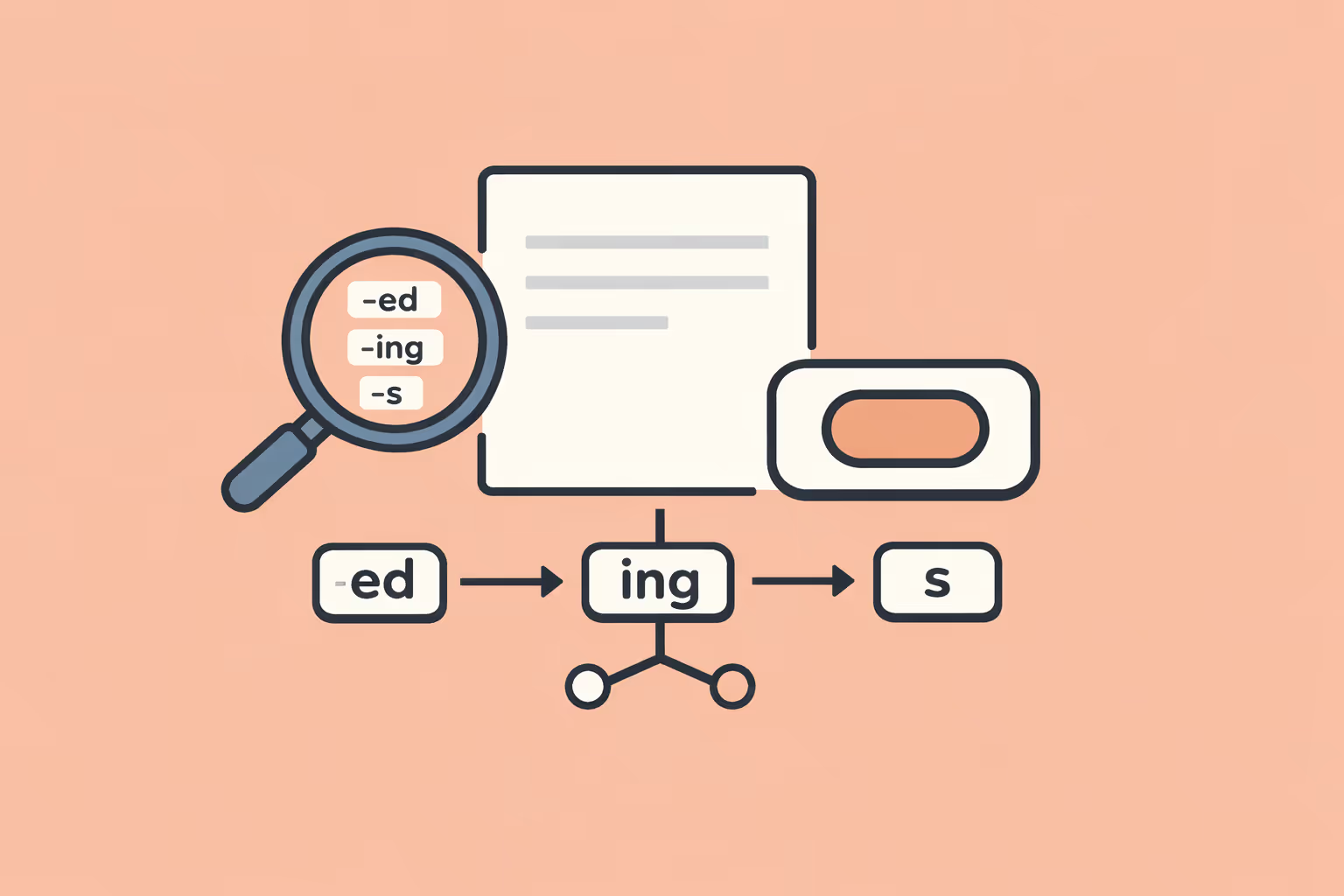

What Is Lemmatization? Definition, Process, and NLP Applications

Learn what lemmatization is, how it reduces words to their dictionary form, how it differs from stemming, and why it matters for NLP, search, and machine learning.