Kolmogorov-Arnold Network (KAN): How It Works and Why It Matters

A Kolmogorov-Arnold Network (KAN) places learnable activation functions on edges instead of nodes. Learn how KANs work, how they compare to MLPs, and where they excel.

What Is a Kolmogorov-Arnold Network?

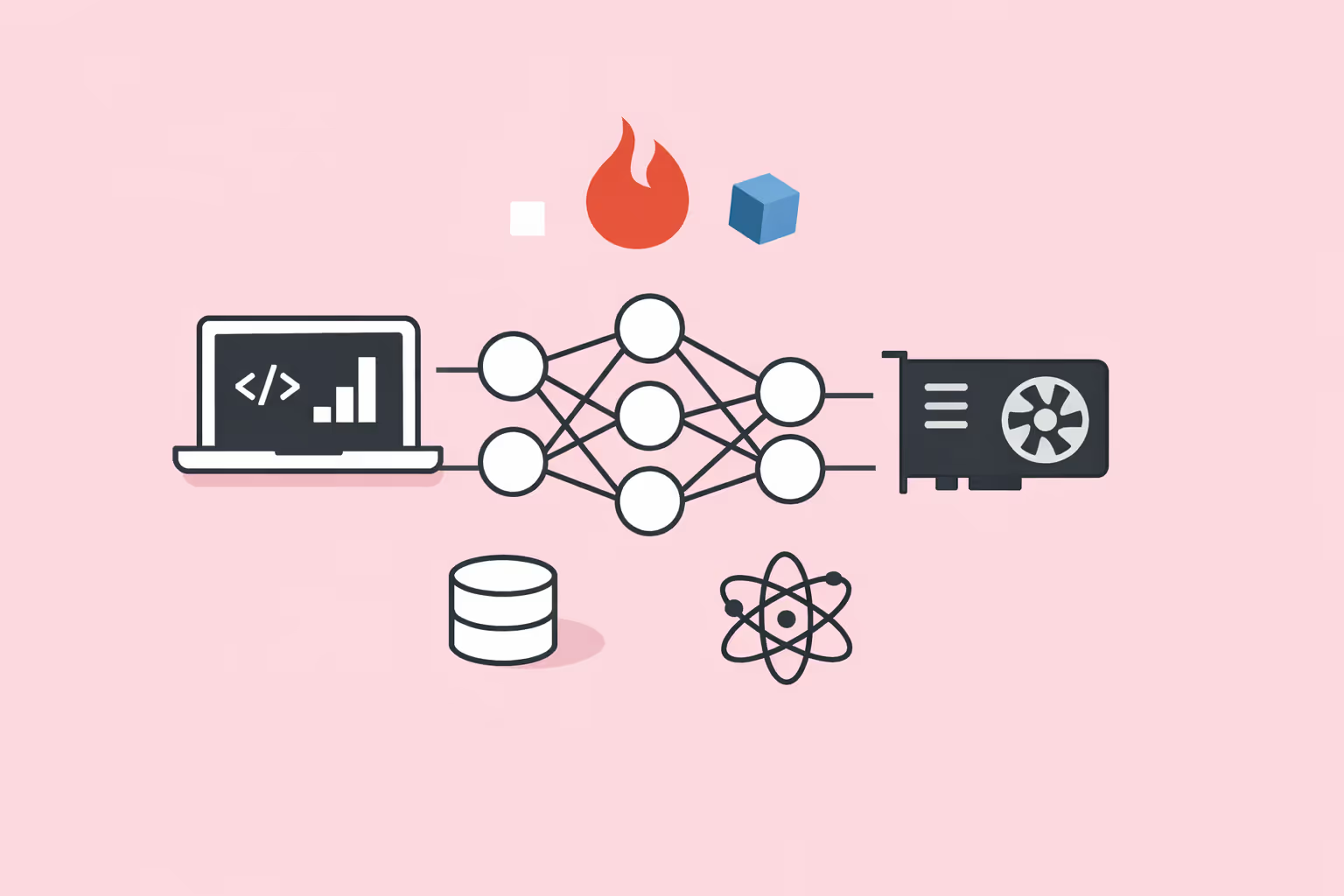

A Kolmogorov-Arnold Network (KAN) is a type of neural network that places learnable activation functions on the connections between neurons rather than on the neurons themselves.

This architectural choice is grounded in the Kolmogorov-Arnold representation theorem, a mathematical result from 1957 proving that any continuous multivariate function can be decomposed into a finite composition of continuous single-variable functions and addition.

In practical terms, a KAN replaces the fixed activation functions used in standard deep learning models with flexible, parameterized functions that live on the edges of the network graph. Each edge learns its own univariate function, typically represented as a B-spline or similar basis expansion. The network then combines these edge-level transformations through summation at each node, producing the final output.

The architecture was introduced in a 2024 research paper by Ziming Liu of MIT and collaborators. It challenges a core assumption that has guided neural network design for decades: that activation functions should be fixed nonlinearities applied at neurons. By moving learnable complexity to the edges, KANs can achieve competitive or superior accuracy with significantly fewer parameters on certain classes of problems, particularly those involving scientific and mathematical data.

How KANs Work

The Kolmogorov-Arnold Representation Theorem

The theoretical foundation of KANs is the Kolmogorov-Arnold representation theorem. This theorem states that any continuous function of multiple variables can be expressed as a superposition of continuous functions of a single variable. Formally, for any continuous function f defined on a bounded domain, there exist continuous single-variable functions such that f can be written as sums of compositions of these univariate functions.
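Written out, the usual statement for a continuous function f on the unit cube looks like the following. The symbols \Phi_q and \phi_{q,p} are the conventional names for the outer and inner univariate functions; they are not notation taken from this article.

```latex
f(x_1, \dots, x_n) \;=\; \sum_{q=0}^{2n} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right),
\qquad \phi_{q,p} : [0,1] \to \mathbb{R}, \quad \Phi_q : \mathbb{R} \to \mathbb{R}
```

Read as a network, this is a two-layer structure: n(2n+1) inner functions feed 2n+1 sums, and 2n+1 outer functions feed a final sum.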

This is a powerful existence result, but for decades it was considered a mathematical curiosity with limited practical value. The original proof was non-constructive, meaning it guaranteed that such a decomposition exists without providing a method to find it. The functions involved could be highly irregular, and classical attempts to use the theorem for computation ran into problems with non-smooth, difficult-to-approximate functions.

KANs overcome this limitation by using smooth, learnable spline functions as the univariate building blocks and by generalizing the theorem's two-layer structure to arbitrary depth. Rather than strictly following the theorem's original formulation, KANs use it as architectural inspiration, stacking multiple layers of learnable edge functions to approximate complex mappings.

Edge-Based Learnable Activation Functions

In a standard multilayer perceptron (MLP), each neuron applies a fixed activation function, such as ReLU or sigmoid, after computing a weighted sum of its inputs. The weights on the connections are the learnable parameters. The activation function itself does not change during training.

A KAN inverts this design. Each edge in the network carries its own learnable activation function, typically parameterized as a B-spline. A B-spline is a piecewise polynomial defined by a set of control points and a knot vector. During training, the control points are adjusted through gradient descent, allowing each edge function to take whatever shape best fits the data.

The nodes in a KAN perform only summation. They receive the outputs of the incoming edge functions and add them together, with no additional nonlinear transformation. This means all the representational power of the network resides in the edge functions rather than in the nodes.
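The edge-and-sum structure described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the pykan implementation: the helper names (`uniform_knots`, `bspline_basis`, `edge_function`, `kan_layer`) are hypothetical, the knot vector is uniform, and the fixed base function that the original paper adds to each edge is left out.

```python
def uniform_knots(lo, hi, intervals, degree):
    """Uniform knot vector on [lo, hi], extended by `degree` extra knots on each side."""
    h = (hi - lo) / intervals
    return [lo + (i - degree) * h for i in range(intervals + 2 * degree + 1)]


def bspline_basis(x, knots, degree):
    """Values of all B-spline basis functions at x (Cox-de Boor recursion)."""
    # Degree 0: indicator of each half-open knot interval.
    basis = [1.0 if knots[i] <= x < knots[i + 1] else 0.0
             for i in range(len(knots) - 1)]
    for d in range(1, degree + 1):
        raised = []
        for i in range(len(knots) - d - 1):
            left = (x - knots[i]) / (knots[i + d] - knots[i]) * basis[i]
            right = ((knots[i + d + 1] - x)
                     / (knots[i + d + 1] - knots[i + 1]) * basis[i + 1])
            raised.append(left + right)
        basis = raised
    return basis


def edge_function(x, coeffs, knots, degree):
    """One learnable edge: a weighted sum of B-spline basis functions."""
    return sum(c * b for c, b in zip(coeffs, bspline_basis(x, knots, degree)))


def kan_layer(inputs, layer_coeffs, knots, degree):
    """layer_coeffs[j][i] holds the coefficients of the edge from input i to node j.

    Each node only adds up the outputs of its incoming edges.
    """
    return [sum(edge_function(x, edge, knots, degree)
                for x, edge in zip(inputs, node_edges))
            for node_edges in layer_coeffs]


knots = uniform_knots(-1.0, 1.0, intervals=5, degree=3)
n_coeffs = len(knots) - 3 - 1  # coefficients (control points) per edge
ones = [1.0] * n_coeffs
# Two inputs, one output node. With all-ones coefficients each edge outputs
# about 1 inside [-1, 1], because the basis functions sum to one there.
out = kan_layer([0.1, -0.5], [[ones, ones]], knots, degree=3)
```

Training would adjust the entries of `layer_coeffs`; the node computation itself has nothing to learn.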

Training and Optimization

KANs are trained using the same backpropagation algorithm that powers standard neural networks. The loss function is computed at the output, and gradients are propagated backward through the network to update the B-spline parameters on each edge. Because B-splines are differentiable, standard automatic differentiation frameworks can compute these gradients without modification.
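To make the gradient step concrete, here is a small sketch that fits a single edge function by plain gradient descent. It assumes a degree-1 B-spline (hat functions) on a uniform grid so the gradient can be written by hand; `hat_basis`, `fit_edge`, the learning rate, and the target function are all illustrative choices. In a full KAN, automatic differentiation would apply the chain rule through every stacked layer instead.

```python
import math


def hat_basis(x, lo, hi, n_knots):
    """Degree-1 B-spline (hat function) values at x on a uniform grid over [lo, hi]."""
    h = (hi - lo) / (n_knots - 1)
    return [max(0.0, 1.0 - abs(x - (lo + i * h)) / h) for i in range(n_knots)]


def fit_edge(xs, ys, n_knots=9, lr=0.5, steps=500, lo=-1.0, hi=1.0):
    """Fit spline coefficients to (xs, ys) by gradient descent on mean squared error."""
    coeffs = [0.0] * n_knots
    bases = [hat_basis(x, lo, hi, n_knots) for x in xs]  # fixed during training
    losses = []
    for _ in range(steps):
        preds = [sum(c * b for c, b in zip(coeffs, row)) for row in bases]
        errs = [p - y for p, y in zip(preds, ys)]
        losses.append(sum(e * e for e in errs) / len(xs))
        # The spline is linear in its coefficients, so
        # d(loss)/d(c_i) = (2/N) * sum_n err_n * B_i(x_n).
        grads = [2.0 / len(xs) * sum(e * row[i] for e, row in zip(errs, bases))
                 for i in range(n_knots)]
        coeffs = [c - lr * g for c, g in zip(coeffs, grads)]
    return coeffs, losses


xs = [-1.0 + 2.0 * n / 80 for n in range(81)]
ys = [math.sin(math.pi * x) for x in xs]
coeffs, losses = fit_edge(xs, ys)
```

The list `losses` records the error before each update, so comparing `losses[0]` with `losses[-1]` shows how far the edge function moved toward the target.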

One distinctive feature of KAN training is grid refinement. As training progresses, the resolution of the B-spline grids can be increased, adding more knot points and control parameters. This allows the network to start with a coarse approximation and progressively refine it, similar to how adaptive mesh methods work in numerical simulation. Grid refinement enables KANs to improve accuracy without changing the overall network topology.
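A simplified view of grid refinement, assuming piecewise-linear splines on nested uniform grids: the fine-grid coefficients are initialized by sampling the coarse function at the new knots, so the refined spline starts out identical to the coarse one and only gains extra freedom for further training. The function names are hypothetical, and real implementations with higher-degree splines generally need a fitting step rather than direct sampling.

```python
def evaluate(x, coeffs, lo, hi):
    """Piecewise-linear spline: interpolate coefficients placed at uniform knots on [lo, hi]."""
    n = len(coeffs)
    h = (hi - lo) / (n - 1)
    j = min(int((x - lo) / h), n - 2)  # index of the knot interval containing x
    t = (x - (lo + j * h)) / h
    return (1 - t) * coeffs[j] + t * coeffs[j + 1]


def refine(coeffs, lo, hi, factor=2):
    """Return coefficients on a grid with `factor` times as many intervals."""
    n_fine = (len(coeffs) - 1) * factor + 1
    h_fine = (hi - lo) / (n_fine - 1)
    return [evaluate(lo + i * h_fine, coeffs, lo, hi) for i in range(n_fine)]


coarse = [0.0, 1.0, 0.0]               # 2 intervals on [-1, 1]
fine = refine(coarse, -1.0, 1.0)       # 4 intervals, same function
```

After this step the network topology is unchanged; only the number of coefficients per edge has grown.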

Regularization in KANs often involves penalizing the complexity of edge functions. Sparsity regularization encourages the network to set unnecessary edge functions to near-zero, effectively pruning connections. Smoothness regularization penalizes high-frequency oscillations in the spline functions. Together, these techniques help produce compact, interpretable models.
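The two penalties can be sketched as follows. This is an illustration in the spirit of the description above, not a specific library's loss: the function names, the edge labels, and the pruning threshold are assumptions, and the smoothness term uses squared second differences of the spline coefficients as one simple way to penalize oscillation.

```python
def edge_l1_norm(outputs):
    """Mean absolute output of one edge function over a batch of inputs."""
    return sum(abs(v) for v in outputs) / len(outputs)


def sparsity_penalty(edge_outputs):
    """Sum of per-edge L1 norms; adding a multiple of this to the loss
    pushes edges the model does not need toward zero."""
    return sum(edge_l1_norm(o) for o in edge_outputs.values())


def smoothness_penalty(coeffs):
    """Sum of squared second differences of the coefficients (zero for a straight line)."""
    return sum((coeffs[i - 1] - 2 * coeffs[i] + coeffs[i + 1]) ** 2
               for i in range(1, len(coeffs) - 1))


def prune(edge_outputs, threshold=1e-2):
    """Keep only the edges whose L1 norm exceeds the (hypothetical) threshold."""
    return {edge for edge, o in edge_outputs.items() if edge_l1_norm(o) > threshold}


# Sampled outputs of two edges feeding the same node (made-up values).
edge_outputs = {
    ("x1", "h1"): [0.5, -0.5, 1.0],
    ("x2", "h1"): [0.001, -0.002, 0.0],
}
kept = prune(edge_outputs)
```

Here the second edge contributes almost nothing to the node, so pruning drops it and the remaining graph is smaller and easier to read.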

Symbolic Regression and Interpretability

One of the most distinctive capabilities of KANs is their potential for symbolic regression. After training, individual edge functions can be inspected and matched to known mathematical functions. If an edge function closely resembles sin(x), x squared, or log(x), it can be replaced with that exact symbolic expression. This process can convert a trained KAN into a closed-form mathematical formula.
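The matching step can be approximated with a short sketch: sample the learned edge function, fit each candidate with an outer scale and offset, and keep the candidate with the highest R squared. The candidate list and function names are hypothetical, and this version does not search over an inner scale or shift of the input, which a fuller implementation would likely need.

```python
import math

# Hypothetical candidate library; a real one would be larger.
CANDIDATES = {
    "sin": math.sin,
    "square": lambda x: x * x,
    "exp": math.exp,
    "identity": lambda x: x,
}


def r_squared(xs, ys, f):
    """R^2 of the best least-squares fit ys ~ c * f(xs) + d."""
    fx = [f(x) for x in xs]
    n = len(xs)
    mean_f, mean_y = sum(fx) / n, sum(ys) / n
    sff = sum((a - mean_f) ** 2 for a in fx)
    syy = sum((b - mean_y) ** 2 for b in ys)
    sfy = sum((a - mean_f) * (b - mean_y) for a, b in zip(fx, ys))
    if sff == 0.0 or syy == 0.0:
        return 0.0
    # For a one-variable linear fit, R^2 equals the squared correlation.
    return (sfy * sfy) / (sff * syy)


def best_symbolic_match(xs, ys):
    """Return the best-scoring candidate name and all scores."""
    scores = {name: r_squared(xs, ys, f) for name, f in CANDIDATES.items()}
    return max(scores, key=scores.get), scores


# Stand-in for a trained edge function sampled on [-3, 3].
xs = [-3.0 + 6.0 * i / 60 for i in range(61)]
ys = [2.0 * math.sin(x) + 1.0 for x in xs]
name, scores = best_symbolic_match(xs, ys)
```

A score very close to 1 suggests the edge can be swapped for the symbolic form; lower scores mean the spline should be kept or the candidate library extended.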

This property is significant for scientific applications where the goal is not just prediction but understanding. A physicist modeling a dynamical system wants an equation, not a black box. KANs offer a path from data to symbolic expressions, bridging the gap between the flexibility of machine learning and the interpretability of classical mathematical modeling.

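| Component | Function | Key Detail |
|---|---|---|
| The Kolmogorov-Arnold Representation Theorem | Provides the theoretical foundation: any continuous multivariate function can be written as sums and compositions of continuous single-variable functions. | The original proof is non-constructive; KANs use the theorem as inspiration and extend its two-layer structure to arbitrary depth. |
| Edge-Based Learnable Activation Functions | Each edge carries its own learnable univariate function, while nodes perform only summation. | Edge functions are typically B-splines whose control points are adjusted by gradient descent. |
| Training and Optimization | Standard backpropagation updates the B-spline parameters on each edge. | Grid refinement increases spline resolution during training; sparsity and smoothness regularization keep models compact. |
| Symbolic Regression and Interpretability | Trained edge functions can be inspected and matched to known functions such as sin(x), x squared, or log(x). | Can convert a trained KAN into a closed-form mathematical formula. |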

KAN vs Traditional Neural Networks

Architectural Differences

The fundamental difference between KANs and traditional MLPs lies in where learnable complexity is placed. In an MLP, the learnable parameters are the weight matrices connecting layers, and activation functions are fixed. In a KAN, the edges carry learnable functions, and the nodes are simple summation operators.

This distinction has practical consequences. An MLP with 100 neurons in a hidden layer applies the same ReLU function 100 times, varying only the linear combination of inputs fed to each neuron. A KAN layer with the same topology learns a distinct function on every incoming edge, so with n inputs it holds 100n learned functions, each shaped independently by the data. This gives KANs greater representational flexibility per connection.

Parameter Efficiency

Research on KANs has shown that they can match or exceed the accuracy of MLPs while using significantly fewer parameters on certain tasks. In experiments involving mathematical function fitting and scientific data, KANs with a few dozen parameters achieved accuracy that required hundreds or thousands of parameters in an equivalent MLP.

This efficiency comes from the fact that B-spline functions can represent smooth curves with a small number of control points, while an MLP must use many neurons and layers to approximate the same curves through combinations of fixed nonlinearities. For problems with underlying smooth structure, KANs are a more natural fit.
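A back-of-the-envelope count helps when comparing the two architectures. The sketch below assumes each KAN edge stores grid + degree spline coefficients (a common uniform-grid parameterization) and ignores any extra per-edge scale terms; the layer widths are illustrative and are not taken from a benchmark.

```python
def mlp_params(widths):
    """Weights plus biases for a fully connected MLP with the given layer widths."""
    return sum(a * b + b for a, b in zip(widths, widths[1:]))


def kan_params(widths, grid=5, degree=3):
    """Spline coefficients for a KAN: one edge function per connection,
    each with (grid + degree) coefficients under the stated assumption."""
    return sum(a * b * (grid + degree) for a, b in zip(widths, widths[1:]))


small_kan = kan_params([2, 5, 1])          # (2*5 + 5*1) edges * 8 coefficients
wide_mlp = mlp_params([2, 100, 100, 1])    # two hidden layers of 100 neurons
same_shape_mlp = mlp_params([2, 5, 1])
```

Note that at identical widths the KAN holds more parameters than the MLP, since every connection carries several coefficients instead of one weight. Any saving therefore has to come from the KAN reaching the target accuracy with a much smaller network.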

However, parameter efficiency does not always translate to computational efficiency. B-spline evaluations involve lookups and piecewise polynomial calculations that are less hardware-optimized than the matrix multiplications that dominate MLP computation. Modern GPUs and PyTorch operations are heavily optimized for dense linear algebra, which gives MLPs a practical speed advantage despite their larger parameter counts.

Interpretability Comparison

Traditional neural networks, including standard MLPs and transformer models, are widely regarded as black boxes. Understanding why a trained network produces a specific output requires post-hoc explanation tools, and these tools provide approximations rather than exact accounts of the model's reasoning.

KANs offer a structural advantage in interpretability. Because each edge function can be visualized as a simple curve, practitioners can inspect the network's learned behavior at the granularity of individual connections. This makes it possible to identify which input features matter, how they are transformed, and how those transformations combine to produce the output.

For supervised learning tasks in domains such as physics, biology, and engineering, this interpretability is not a luxury. It is often a requirement. Regulatory bodies, peer reviewers, and engineering teams need to understand and validate the model's reasoning, not just its predictions.

Scaling Behavior

MLPs and deep learning architectures like transformers have demonstrated remarkable scaling properties. Increasing the number of parameters, training data, and compute leads to predictable improvements in performance. This scaling behavior is well-studied and underlies the success of large language models and vision systems.

KANs are still in the early stages of scaling research. Initial results suggest they scale well for low-dimensional scientific problems, but their behavior on high-dimensional tasks like natural language processing or large-scale image recognition is not yet well characterized. The spline-based parameterization may face challenges in very high-dimensional input spaces where the number of required knot points grows rapidly.

KAN Use Cases

Scientific Discovery and Equation Learning

The most compelling use case for KANs is scientific discovery. Researchers working in physics, chemistry, and applied mathematics frequently need to identify the governing equations behind observed data. KANs can learn these equations directly from data and present them in symbolic form.

In benchmark experiments, KANs have successfully recovered known physical laws, including conservation equations and mathematical identities, from noisy data. This capability extends beyond curve fitting. It represents a form of automated scientific reasoning where the network discovers the functional relationships that traditional artificial intelligence models would only approximate numerically.

Function Approximation and Regression

For regression and nonlinear function approximation tasks, KANs offer a compelling alternative to standard approaches. Their spline-based edge functions naturally handle smooth, continuous mappings and can achieve high precision with compact network architectures.

This makes KANs particularly useful in engineering applications where a mathematical model must be both accurate and computationally lightweight. Surrogate models for expensive simulations, real-time control systems, and sensor calibration are all areas where KANs can deliver precise function approximation with minimal parameters.

Partial Differential Equations

Solving partial differential equations (PDEs) is central to fields ranging from fluid dynamics to financial modeling. Neural network approaches to PDEs, sometimes called physics-informed neural networks, have gained traction as alternatives to classical numerical methods. KANs bring an advantage to this domain because their spline-based architecture is naturally suited to representing the smooth solutions that PDEs typically produce.

Early research shows KANs achieving faster convergence and lower error on PDE benchmarks compared to MLP-based solvers. The ability to increase spline resolution through grid refinement aligns well with the adaptive resolution strategies used in traditional numerical PDE solvers.

Graph-Structured and Relational Data

Researchers have begun exploring KAN-based architectures for graph neural networks, replacing the linear transformations in message-passing frameworks with learnable spline functions. This combination, sometimes called a Graph KAN, allows the network to learn more expressive edge-level transformations in graph-structured data.

Applications include molecular property prediction, where atoms are nodes and bonds are edges, and social network analysis, where the relationships between entities carry meaningful structure. The edge-centric design of KANs maps naturally onto graph problems where the interactions between nodes are as important as the nodes themselves.

Time Series and Sequential Data

KAN architectures have also been adapted for time series forecasting and sequential modeling tasks traditionally handled by recurrent neural networks. By applying learnable spline functions to temporal features, KAN-based time series models can capture nonlinear temporal patterns with fewer parameters than LSTM or GRU networks.

These models are particularly promising for scientific time series data, such as climate measurements, sensor readings, and experimental recordings, where the underlying dynamics follow smooth, physically motivated functions.

Challenges and Limitations

Computational Overhead

Despite their parameter efficiency, KANs are computationally slower to train and evaluate than equivalently sized MLPs on current hardware. B-spline evaluations involve conditional branching and memory lookups that do not map efficiently onto GPU architectures optimized for bulk matrix operations.

This overhead means that while a KAN may need fewer parameters to reach a given accuracy, the wall-clock time for training can be longer. As hardware and software optimizations catch up, this gap may narrow, but it remains a practical barrier for large-scale applications today.

Scaling to High Dimensions

The Kolmogorov-Arnold representation theorem applies to functions of any finite number of variables, but the practical efficiency of KANs decreases as input dimensionality grows. High-dimensional inputs require either very wide networks with many edges or deep architectures where spline parameters accumulate rapidly.

Tasks like image classification, where inputs may have tens of thousands of dimensions, or language modeling, where context windows span thousands of tokens, currently favor architectures specifically designed for those data types. KANs have not yet demonstrated competitive performance on these large-scale, high-dimensional benchmarks.

Limited Ecosystem and Tooling

Standard deep learning architectures benefit from years of ecosystem development. Frameworks like PyTorch and TensorFlow include optimized implementations, extensive documentation, pre-trained model libraries, and hardware-specific acceleration. KANs are still early in this lifecycle.

The primary open-source KAN library, pykan, provides a working implementation but lacks the optimization, documentation depth, and community support of mature frameworks. Researchers working with KANs often need to implement custom training loops, debugging tools, and visualization utilities that come built-in for standard architectures.

Theoretical Gaps

While the Kolmogorov-Arnold representation theorem provides a theoretical foundation, important questions remain open. The approximation theory for multi-layer KANs, the optimal strategies for grid refinement, and the conditions under which KANs provably outperform MLPs are all active research areas.

The relationship between network depth, spline resolution, and generalization is not yet fully understood. Practitioners must rely on empirical experimentation rather than established theoretical guidelines when designing KAN architectures, which increases the trial-and-error cost of model development.

Training Stability

Spline-based parameterizations can introduce training instabilities that do not occur with standard activation functions. B-splines with too many knot points may overfit to noise. Grid refinement, if applied too aggressively, can create sharp transitions in the loss landscape that make optimization difficult.

Careful regularization and conservative grid refinement schedules are necessary to avoid these issues. This adds hyperparameter tuning complexity beyond what standard MLP training requires, and best practices are still being established by the research community.

How to Get Started with KANs

Getting started with Kolmogorov-Arnold Networks requires familiarity with standard deep learning concepts and a willingness to work with a relatively new and evolving codebase.

- Understand the prerequisites. A solid grasp of neural network fundamentals, including backpropagation, gradient descent, and activation functions, is essential. Familiarity with B-splines or piecewise polynomial approximation is helpful but not strictly required, as the framework handles most spline mechanics internally.

- Read the foundational paper. The original 2024 paper, "KAN: Kolmogorov-Arnold Networks" by Ziming Liu et al., provides the theoretical motivation, architectural details, and benchmark results. Understanding this paper gives context for design decisions that the documentation alone may not explain.

- Install pykan. The official Python library, pykan, is available on GitHub and installable via pip. It is built on PyTorch and provides classes for building, training, and visualizing KAN architectures. Start with the provided tutorials, which walk through simple function-fitting tasks.

- Begin with low-dimensional problems. KANs shine on problems with a small number of input variables and smooth underlying structure. Start with fitting known mathematical functions (polynomials, trigonometric functions, or elementary physics equations) before attempting more complex tasks. This builds intuition for how spline resolution, network width, and regularization interact.

- Experiment with symbolic regression. After training a KAN on a known function, use the library's symbolic fitting tools to see if the network can recover the exact formula. This exercise demonstrates the interpretability advantage and helps calibrate expectations for what KANs can and cannot extract from data.

- Compare against MLPs. For any problem you solve with a KAN, also train an equivalent MLP. Comparing accuracy, parameter count, training time, and interpretability will clarify where KANs add value. This practice helps build an informed perspective on when to choose KANs over conventional architectures.

Teams exploring reinforcement learning, unsupervised learning, or other paradigms within machine learning should monitor KAN research closely.

As the architecture matures and tooling improves, KANs may become a standard option in the practitioner's toolkit, particularly for scientific and mathematical applications where interpretability and parameter efficiency are priorities.

FAQ

How is a KAN different from an MLP?

An MLP places fixed activation functions on neurons and learns the weights on connections. A KAN places learnable activation functions on the connections (edges) and uses simple summation at the nodes. This means a KAN learns how to transform each input through flexible spline functions rather than relying on fixed nonlinearities. The result is often greater accuracy per parameter on smooth, low-dimensional problems.

What is the Kolmogorov-Arnold representation theorem?

The Kolmogorov-Arnold representation theorem is a mathematical result stating that any continuous function of several variables can be decomposed into sums and compositions of continuous functions of a single variable. KANs take this theorem as architectural inspiration rather than following it strictly: they implement the univariate functions as learnable B-splines and stack them in a layered network of arbitrary depth.

Can KANs replace transformers or CNNs?

Not currently. KANs excel at low-dimensional scientific and mathematical problems where interpretability and parameter efficiency matter most. For high-dimensional tasks like natural language processing and large-scale image recognition, transformer models and convolutional neural networks remain the established and more practical choice.

KANs and traditional architectures are better viewed as complementary tools suited to different problem types.

Are KANs difficult to train?

KANs use standard backpropagation and gradient-based optimization, so the basic training workflow is familiar to anyone with deep learning experience. However, additional considerations like spline grid resolution, grid refinement schedules, and spline-specific regularization add complexity.

The tooling is also less mature, so debugging and experimentation may require more hands-on effort than working with well-established architectures.

What programming frameworks support KANs?

The primary implementation is pykan, an open-source Python library built on PyTorch. Several community-maintained variants exist, including efficient-kan, which optimizes the original implementation for speed. As the architecture gains adoption, integration into mainstream frameworks is likely to improve, but for now, pykan and its forks are the standard starting points.
