Frechet Inception Distance (FID): What It Is and How It Works

Learn what Frechet Inception Distance (FID) is, how it measures the quality of generated images, how to calculate it, and why it matters for evaluating generative AI models.

What Is Frechet Inception Distance?

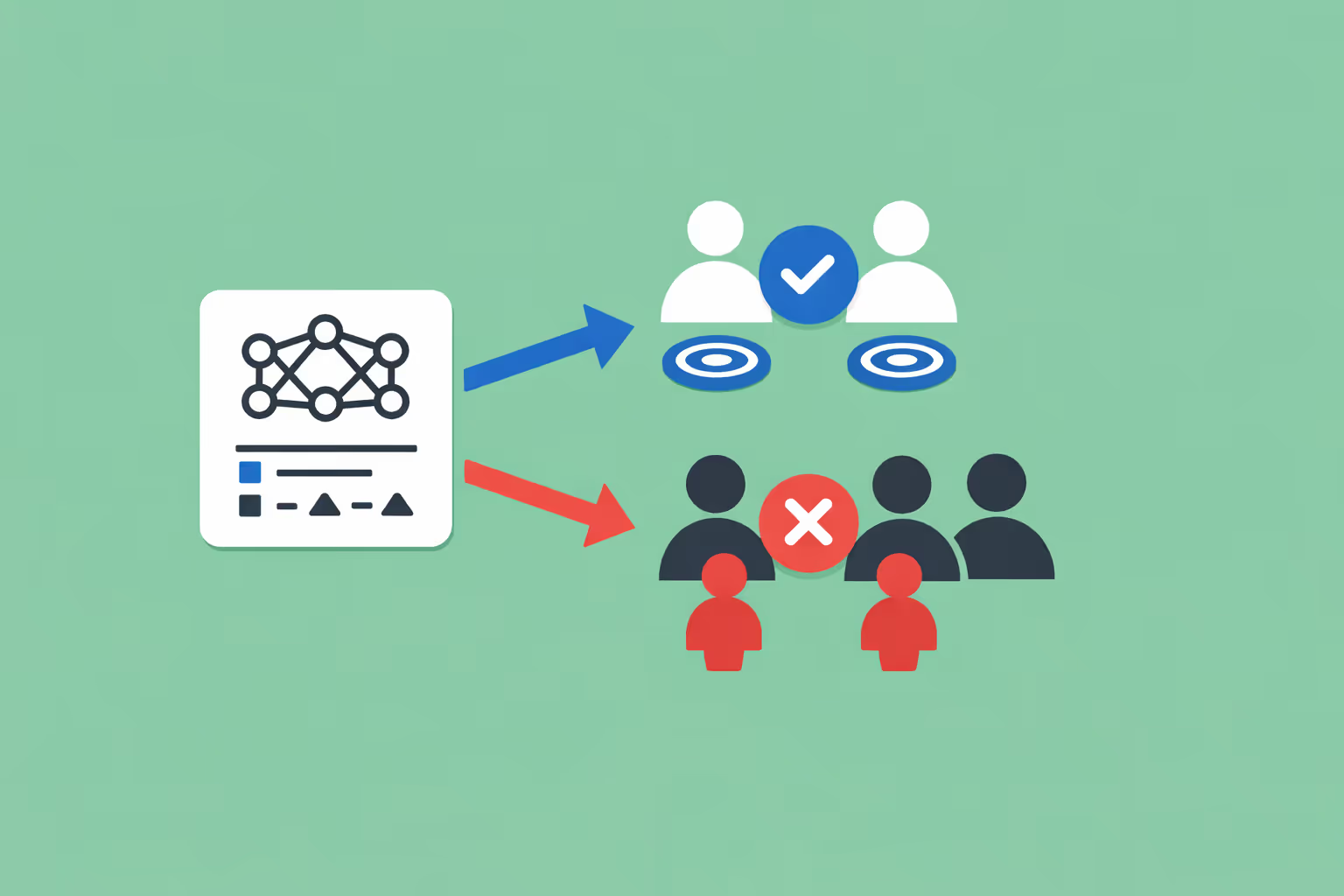

Frechet inception distance (FID) is a metric used to evaluate the quality of images produced by generative models. It measures how similar a set of generated images is to a set of real images by comparing statistical properties extracted from a pretrained convolutional neural network.

A lower FID score indicates that the generated images more closely resemble the real ones in terms of visual quality and diversity, while a higher score signals a larger gap between the two distributions.

The metric was introduced by Martin Heusel and colleagues in their 2017 paper "GANs Trained by a Two Time-Scale Update Rule Converge to a Local Nash Equilibrium." It was designed as an improvement over the Inception Score, which evaluates generated images in isolation without comparing them to a reference dataset.

FID addresses this limitation by explicitly measuring the distance between real and generated image distributions, making it a more reliable indicator of how well a generative adversarial network or other generative system captures the characteristics of its training data.

At a high level, FID works by passing both real and generated images through the Inception v3 network, a deep learning model originally trained for image recognition. Instead of using the network's final classification output, FID extracts feature representations from an intermediate layer.

These features encode high-level visual properties such as texture, shape, and object structure. The metric then models each set of features as a multivariate Gaussian distribution and computes the Frechet distance between the two Gaussians. For Gaussian distributions this distance coincides with the Wasserstein-2 distance, and the FID score as conventionally reported is its square.

FID has become the standard quantitative benchmark for evaluating generative image models. Researchers and practitioners routinely report FID scores when comparing generative AI architectures, tracking improvements across model versions, or validating that a trained model produces outputs of acceptable quality.

Its widespread adoption reflects a practical consensus that, despite known limitations, FID captures meaningful differences in image quality and diversity that align reasonably well with human perception.

How FID Works

Feature Extraction with Inception v3

The foundation of FID is the Inception v3 neural network, a deep convolutional architecture trained on ImageNet for image classification. Rather than classifying images, FID uses Inception v3 as a feature extractor. Each image is passed through the network, and activations are collected from the final pooling layer (the 2048-dimensional output before the classification head). These activations serve as compact representations of the visual content in each image.

The choice of Inception v3 is significant because the network learned a broad hierarchy of visual features during supervised training on millions of labeled images. Low-level features capture edges and textures. Mid-level features capture parts and patterns. High-level features capture object-level semantics.

By extracting activations from a deep layer, FID operates on representations that encode rich visual information rather than raw pixel values.

This reliance on a pretrained network means FID measures perceptual similarity rather than pixel-level similarity. Two images can look very different at the pixel level but produce similar feature vectors if they contain similar objects, textures, and spatial arrangements. This property makes FID more aligned with human judgment than metrics that operate directly on pixel values, such as mean squared error.

Modeling Distributions as Gaussians

Once feature vectors have been extracted for all real images and all generated images, FID summarizes each set using two statistics: the mean vector and the covariance matrix. The mean captures the average position of the features in the 2048-dimensional space. The covariance matrix captures how the features vary and co-vary across the dataset.

Together, the mean and covariance define a multivariate Gaussian distribution for each set of images. This is a simplifying assumption. Real feature distributions are not perfectly Gaussian. However, modeling them as Gaussians makes the distance calculation tractable and has proven effective in practice for distinguishing between good and poor generative models.

The Gaussian assumption also means that FID captures two distinct aspects of generation quality. The mean reflects whether the generated images are centered in the same region of feature space as the real images, which relates to visual fidelity. The covariance reflects whether the generated images exhibit the same spread and correlation structure as the real images, which relates to diversity.

A model that produces high-quality but repetitive images will have a similar mean but a different covariance, and FID will penalize it accordingly.
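To make the fidelity and diversity split concrete, here is a minimal sketch using NumPy. It uses synthetic 8-dimensional vectors as a stand-in for real Inception features. The helper name `feature_statistics`, the toy dimensionality, and the "collapsed" sample are assumptions made purely for illustration and do not come from any FID library.

```python
import numpy as np

def feature_statistics(features):
    """Return the mean vector and covariance matrix of an (n_images, dim) array."""
    mu = features.mean(axis=0)
    sigma = np.cov(features, rowvar=False)  # rows are images, columns are features
    return mu, sigma

rng = np.random.default_rng(0)
dim = 8  # toy stand-in for the 2048 Inception dimensions

# "Real" features spread widely; "collapsed" features share the same center
# but barely vary, mimicking a high-quality but repetitive generator.
real = rng.normal(0.0, 1.0, size=(5000, dim))
collapsed = rng.normal(0.0, 0.1, size=(5000, dim))

mu_r, sigma_r = feature_statistics(real)
mu_c, sigma_c = feature_statistics(collapsed)

mean_gap = float(np.sum((mu_r - mu_c) ** 2))
print("squared mean gap:", mean_gap)
print("trace of real covariance:", float(np.trace(sigma_r)))
print("trace of collapsed covariance:", float(np.trace(sigma_c)))
```

In this toy setup the two means nearly coincide, while the total variance (the trace of the covariance) differs by a large factor. That difference is the part of the statistics the covariance term of FID responds to.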

Computing the Frechet Distance

The Frechet distance between two multivariate Gaussians with means (m_r, m_g) and covariance matrices (C_r, C_g) is calculated as:

FID = ||m_r - m_g||^2 + Tr(C_r + C_g - 2 (C_r C_g)^(1/2))

The first term measures the squared distance between the two mean vectors. If the generated images systematically differ from real images in their average visual properties, this term increases. The second term measures the difference between the covariance structures using the matrix trace and square root. If the generated images have different variance or correlation patterns compared to real images, this term increases.

A perfect score of zero would mean the two Gaussian distributions are identical, implying that the generated images are statistically indistinguishable from real images in the Inception feature space. In practice, FID scores for state-of-the-art models on standard benchmarks (such as CIFAR-10 or ImageNet) typically range from single digits to low double digits, with lower values representing better quality.
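As a sanity check on the formula above, it can help to look at the one-dimensional case, where each covariance matrix reduces to a single variance. The symbols \sigma_r and \sigma_g and the numbers in the example are introduced here only for illustration.

```latex
% One-dimensional case: C_r = \sigma_r^2 and C_g = \sigma_g^2
\begin{aligned}
\mathrm{FID}_{1\mathrm{D}}
  &= (m_r - m_g)^2 + \sigma_r^2 + \sigma_g^2 - 2\sqrt{\sigma_r^2 \sigma_g^2} \\
  &= (m_r - m_g)^2 + (\sigma_r - \sigma_g)^2 .
\end{aligned}

% Example with m_r = 0, \sigma_r = 1, m_g = 1, \sigma_g = 2:
\mathrm{FID}_{1\mathrm{D}} = (0 - 1)^2 + (1 - 2)^2 = 2 .
```

In this simplified setting the two terms are easy to read: one penalizes a shift in the center of the distribution, and the other penalizes a mismatch in spread.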

| Component | Function | Key Detail |
|---|---|---|
| Feature Extraction with Inception v3 | Uses an ImageNet-pretrained Inception v3 network as a feature extractor rather than a classifier. | Activations come from the final pooling layer, giving a 2048-dimensional vector per image. |
| Modeling Distributions as Gaussians | Summarizes each image set by the mean vector and covariance matrix of its features. | The mean relates to fidelity and the covariance relates to diversity. |
| Computing the Frechet Distance | Adds the squared distance between the means to a trace term that compares the covariances. | A score of zero means the two fitted Gaussians are identical; lower is better. |

Why FID Matters

FID matters because evaluating generative models is inherently difficult, and FID provides a standardized, reproducible, and reasonably informative way to do it. Unlike classification or regression tasks where accuracy or error rates offer clear performance signals, generative tasks produce open-ended outputs with no single correct answer. FID fills this evaluation gap by reducing a complex perceptual judgment to a single number that can be compared across models and experiments.

The metric captures two properties that are central to generative quality: fidelity and diversity. Fidelity measures whether individual generated images look realistic and contain plausible visual content. Diversity measures whether the model produces a wide range of different outputs rather than repeating the same image with minor variations. Both properties matter for practical applications.

A model with high fidelity but low diversity has likely experienced mode collapse, a common failure mode in generative adversarial networks where the generator learns to produce only a narrow subset of the target distribution.

FID's role as a standard benchmark has enabled meaningful progress tracking across the artificial intelligence research community. When a new architecture claims to outperform existing models, FID provides a common yardstick for verification.

This standardization has accelerated research by giving teams a shared reference point, reducing ambiguity in model comparisons, and enabling meta-analyses that track improvement trends over time.

For practitioners working with machine learning systems in production, FID serves as a practical quality gate. Teams training or fine-tuning generative models can monitor FID during development to detect regressions, compare hyperparameter configurations, and decide when a model is ready for deployment.

While FID alone is not sufficient for a complete quality assessment, it provides a fast, automated signal that complements more expensive human evaluation.

FID Use Cases

Benchmarking Generative Models

The most common use of FID is comparing generative architectures on standard datasets.

Researchers training generative adversarial networks, variational autoencoders, diffusion models, and other generative systems report FID scores to demonstrate the quality of their models relative to prior work.

This benchmarking practice has established FID as the primary quantitative metric in generative modeling research papers.

Standard benchmark protocols typically involve generating a fixed number of images (commonly 50,000), computing FID against a reference set of real images from the same dataset, and reporting the result. Consistent use of these protocols allows direct comparison across papers and time periods, making FID an essential tool for tracking the state of the art in image generation.

Evaluating Text-to-Image Systems

Text-to-image models such as DALL-E, Stable Diffusion, and Midjourney are often evaluated with FID as one of several metrics. In these systems, the generated images must not only look realistic but also correspond accurately to text prompts. FID measures the visual quality and distributional similarity component, while other metrics (such as CLIP score) measure text-image alignment separately.

Teams building and refining text-to-image pipelines use FID to ensure that architectural changes, training data updates, or inference parameter adjustments do not degrade output quality. A rising FID during training may indicate overfitting, data issues, or hyperparameter problems that need attention.

Image-to-Image Translation

Image-to-image translation tasks, such as converting sketches to photographs, transforming daytime scenes to nighttime, or translating satellite imagery to map views, also rely on FID for evaluation. In these applications, the metric measures whether the translated images match the statistical properties of the target domain.

FID is especially useful here because pixel-level metrics can be misleading. A translated image might differ significantly from any specific ground truth example at the pixel level while still being a high-quality, realistic representation of the target domain. FID's feature-level comparison captures this distinction effectively.

Monitoring Model Training and Fine-Tuning

During the training of generative models, FID can be computed at regular intervals to track progress. A decreasing FID curve indicates that the model is learning to produce more realistic and diverse outputs. Sudden increases may signal training instability, learning rate issues, or data pipeline problems.

This monitoring capability is particularly valuable when fine-tuning pretrained models on new domains. Teams adapting a general-purpose image generator to a specific visual style or subject category can use FID to verify that the fine-tuned model maintains quality while adapting to the new distribution. Frameworks like PyTorch provide the computational infrastructure needed to integrate FID monitoring into training loops.

Comparing Data Augmentation Strategies

FID can evaluate the effectiveness of data augmentation techniques by measuring whether augmented training data leads to better generative models. If a particular augmentation strategy improves the FID of the resulting model, it provides evidence that the augmentation is helping the model learn a more accurate representation of the target distribution.

This application extends to evaluating synthetic data quality more broadly. Organizations using generative models to produce synthetic training data for deep learning systems can use FID to assess whether the synthetic data is sufficiently similar to real data to be useful for downstream tasks.

Challenges and Limitations

Dependence on Inception v3

FID's reliance on the Inception v3 network introduces a specific bias. The metric measures similarity through the lens of features that Inception v3 has learned from ImageNet classification. If the images being evaluated differ substantially from ImageNet content (for example, medical images, satellite imagery, or abstract art), the extracted features may not capture the most relevant visual properties.

This means FID can be less informative or even misleading for domains that fall outside ImageNet's training distribution.

Researchers have explored alternatives, such as computing the Frechet distance using features from different pretrained networks or using vector embeddings from models trained on domain-specific data. These variants can improve evaluation accuracy for specialized domains, but they sacrifice the standardization that makes FID valuable as a universal benchmark.

Sample Size Sensitivity

FID scores are sensitive to the number of images used in the calculation. With small sample sizes, the estimated mean and covariance may not accurately represent the underlying distributions, leading to noisy and upward-biased FID estimates: even two samples drawn from the same distribution produce a score above zero, and that gap widens as the samples shrink. Research has shown that FID computed on fewer than 10,000 images can be unreliable, and the standard recommendation is to use at least 50,000 images for stable results.

This requirement creates a practical barrier. Generating 50,000 images for each evaluation run takes time and compute resources, especially for large models with slow inference. Teams working with limited budgets or tight iteration cycles may be tempted to compute FID on smaller samples, which risks producing unreliable scores that do not accurately reflect model quality.
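The effect of sample size can be illustrated with a small NumPy experiment. This is a sketch under stated assumptions: the features are synthetic 16-dimensional Gaussian vectors rather than Inception activations, the function name `frechet_distance` is hypothetical, and the trace of the matrix square root is obtained from eigenvalues instead of a dedicated matrix square root routine.

```python
import numpy as np

def frechet_distance(x, y):
    """Frechet distance between Gaussians fitted to two (n, dim) feature arrays."""
    mu_x, mu_y = x.mean(axis=0), y.mean(axis=0)
    cov_x = np.cov(x, rowvar=False)
    cov_y = np.cov(y, rowvar=False)
    # Tr((cov_x cov_y)^(1/2)) equals the sum of the square roots of the
    # eigenvalues of cov_x @ cov_y, which are real and non-negative up to
    # rounding error; clipping guards against tiny negative values.
    eigvals = np.linalg.eigvals(cov_x @ cov_y)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    mean_term = np.sum((mu_x - mu_y) ** 2)
    return float(mean_term + np.trace(cov_x) + np.trace(cov_y) - 2.0 * trace_sqrt)

rng = np.random.default_rng(0)
dim = 16  # toy stand-in for the 2048 Inception dimensions
scores = {}
for n in (50, 500, 5000):
    # Two independent samples from the SAME distribution: the ideal score is 0.
    a = rng.normal(size=(n, dim))
    b = rng.normal(size=(n, dim))
    scores[n] = frechet_distance(a, b)
    print(f"n={n:5d}  FID={scores[n]:.4f}")
```

Because both samples come from the same distribution, any nonzero score here is pure estimation error, and it shrinks as the sample grows. With 2048-dimensional features the same effect needs far more images to become negligible.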

Gaussian Assumption

The assumption that feature distributions are multivariate Gaussian is a simplification. Real feature distributions can be multimodal, skewed, or otherwise non-Gaussian. When the true distributions deviate significantly from Gaussians, the Frechet distance between the fitted Gaussians may not accurately capture the true distance between the distributions.

This limitation is most pronounced when comparing models with very different failure modes. A model that produces a small number of distinct image types (multimodal distribution) will be poorly approximated by a single Gaussian, potentially leading to FID scores that do not reflect the actual perceptual quality of the outputs.
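The following sketch shows how two clearly different feature distributions can receive a near-zero score when their means and covariances match. Everything in it is a toy assumption: the two-dimensional synthetic features, the function name `frechet_distance`, and the "near zero" check used to show that the sets really differ.

```python
import numpy as np

def frechet_distance(x, y):
    """Frechet distance between Gaussians fitted to two (n, dim) feature arrays."""
    mu_x, mu_y = x.mean(axis=0), y.mean(axis=0)
    cov_x = np.cov(x, rowvar=False)
    cov_y = np.cov(y, rowvar=False)
    # Trace of the matrix square root via eigenvalues of the covariance product.
    eigvals = np.linalg.eigvals(cov_x @ cov_y)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    mean_term = np.sum((mu_x - mu_y) ** 2)
    return float(mean_term + np.trace(cov_x) + np.trace(cov_y) - 2.0 * trace_sqrt)

rng = np.random.default_rng(0)
n = 20000

# Bimodal set: the first feature clusters around -2 or +2, like a model
# that only ever produces two kinds of image.
centers = rng.choice([-2.0, 2.0], size=n)
bimodal = np.column_stack([centers + rng.normal(0.0, 0.5, size=n),
                           rng.normal(0.0, 1.0, size=n)])

# Single-Gaussian set with matching mean (0) and variances (2^2 + 0.5^2 and 1).
gaussian = np.column_stack([rng.normal(0.0, np.sqrt(4.25), size=n),
                            rng.normal(0.0, 1.0, size=n)])

fid = frechet_distance(bimodal, gaussian)
# Share of samples whose first feature lies near zero.
near_zero_bimodal = float(np.mean(np.abs(bimodal[:, 0]) < 1.0))
near_zero_gaussian = float(np.mean(np.abs(gaussian[:, 0]) < 1.0))

print("FID between the two sets:", fid)
print("share near zero (bimodal):", near_zero_bimodal)
print("share near zero (gaussian):", near_zero_gaussian)
```

The score stays close to zero because only the first two moments enter the calculation, even though one set has almost no samples in the region where the other is densest.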

Insensitivity to Certain Artifacts

FID operates on aggregate statistics (mean and covariance) rather than individual images. This means it can miss certain types of quality problems. For example, if a small percentage of generated images contain severe artifacts while the majority are high quality, the aggregate statistics may still look favorable. FID averages over the entire distribution and does not directly penalize worst-case outputs.

Similarly, FID may not detect subtle spatial distortions, color shifts, or structural inconsistencies that a human viewer would immediately notice. These fine-grained quality issues can be important in applications where individual image quality matters, such as medical imaging or product photography. Supplementary metrics and human evaluation remain necessary for a complete quality assessment.

No Measure of Prompt Alignment

For conditional generation tasks, FID measures only the quality and diversity of generated images relative to a reference set. It does not measure whether the generated images correspond to their conditioning inputs. A text-to-image model could achieve a low FID by generating high-quality, diverse images that have no relationship to the text prompts, and FID would not detect this failure.

Metrics like CLIP score address the alignment dimension, which is why practitioners evaluating generative AI systems typically report multiple metrics rather than relying on FID alone.

How to Calculate FID

Calculating FID involves a series of well-defined steps. The process can be implemented from scratch using standard deep learning frameworks or performed using established open-source libraries.

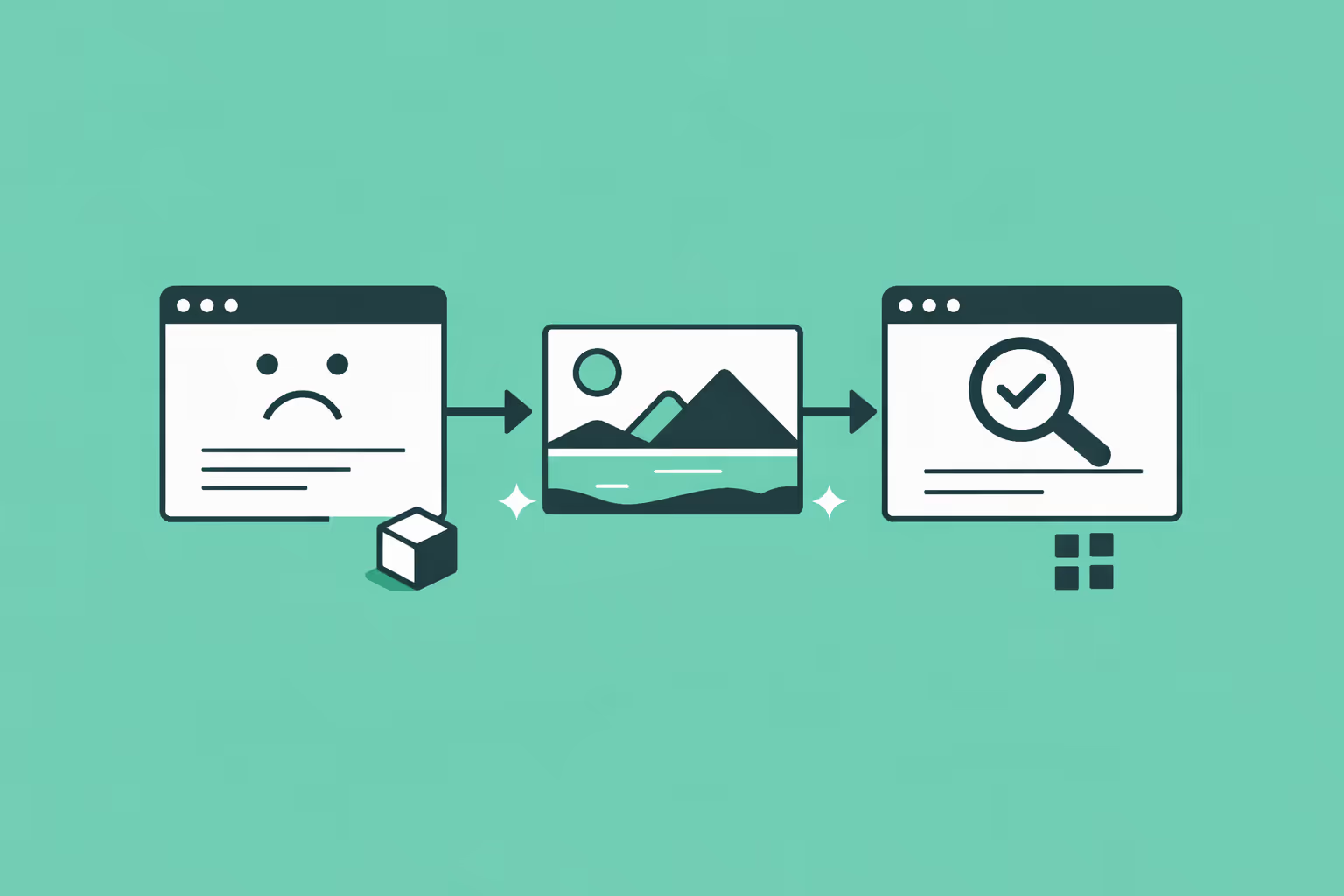

Step 1: Prepare Image Sets

Assemble two sets of images: one set of real images from the target domain and one set of generated images from the model being evaluated. Both sets should be large enough for stable statistics. The standard recommendation is 50,000 images per set. The images should be resized to 299x299 pixels to match Inception v3's expected input dimensions.

Step 2: Extract Features

Pass each image through a pretrained Inception v3 model and collect the activations from the final average pooling layer (producing a 2048-dimensional vector per image). Both sets of images go through the same network with the same weights. No training or fine-tuning occurs during this step. The network is used purely as a feature extractor.

Step 3: Compute Statistics

For each set of feature vectors, calculate the mean vector (a 2048-dimensional vector) and the covariance matrix (a 2048x2048 matrix). These statistics summarize the distribution of each image set in the Inception feature space.

Step 4: Compute the Frechet Distance

Apply the FID formula using the mean vectors and covariance matrices from both sets:

FID = ||m_r - m_g||^2 + Tr(C_r + C_g - 2 (C_r C_g)^(1/2))

Computing the matrix square root is the most computationally intensive part of this step. Standard implementations use the scipy.linalg.sqrtm function in Python and discard the small imaginary components that numerical error can introduce into its result.
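As a rough illustration of Step 4, the sketch below evaluates the formula from precomputed statistics using only NumPy. Note the substitution: instead of calling a matrix square root routine, it uses the fact that the trace of (C_r C_g)^(1/2) equals the sum of the square roots of the eigenvalues of C_r C_g. The function name `fid_from_statistics` and the 4-dimensional toy statistics are assumptions for illustration.

```python
import numpy as np

def fid_from_statistics(mu_r, sigma_r, mu_g, sigma_g):
    """FID = ||mu_r - mu_g||^2 + Tr(sigma_r + sigma_g - 2 (sigma_r sigma_g)^(1/2))."""
    mean_term = float(np.sum((mu_r - mu_g) ** 2))
    # Eigenvalues of a product of two covariance matrices are real and
    # non-negative in exact arithmetic; drop rounding noise before the sqrt.
    eigvals = np.linalg.eigvals(sigma_r @ sigma_g)
    trace_sqrt = float(np.sqrt(np.clip(eigvals.real, 0.0, None)).sum())
    cov_term = float(np.trace(sigma_r) + np.trace(sigma_g)) - 2.0 * trace_sqrt
    return mean_term + cov_term

rng = np.random.default_rng(0)
features = rng.normal(size=(1000, 4))  # toy stand-in for Inception features
mu = features.mean(axis=0)
sigma = np.cov(features, rowvar=False)

# Identical statistics should give a score of (numerically) zero.
same = fid_from_statistics(mu, sigma, mu, sigma)
# Shifting the mean by 0.5 in each of 4 dimensions with the covariance left
# unchanged should contribute only the mean term: 4 * 0.5^2 = 1.
shifted = fid_from_statistics(mu, sigma, mu + 0.5, sigma)

print("identical statistics:", same)
print("shifted mean:", shifted)
```

The two checks at the end mirror the structure of the formula: with equal covariances the trace term vanishes, so the score reduces to the squared distance between the means.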

Step 5: Interpret the Result

A lower FID score indicates that the generated images are more similar to the real images in terms of visual quality and diversity. There is no universal threshold for "good" or "bad" FID values because scores depend on the dataset, resolution, and domain. Comparisons are most meaningful when made against other models evaluated under identical conditions (same real image set, same number of generated images, same preprocessing).

Common Libraries

Several open-source tools make FID calculation straightforward:

- pytorch-fid is a widely used Python package that computes FID from two directories of images using PyTorch. It handles feature extraction, statistics computation, and distance calculation in a single command.

- clean-fid addresses known implementation inconsistencies (such as image resizing methods) that can cause FID scores to vary across implementations. It provides a standardized calculation pipeline designed to produce reproducible results.

- torch-fidelity is another PyTorch-based library that computes FID alongside other generative metrics like Inception Score and Kernel Inception Distance.

For researchers and engineers working with transformer models or other modern generative model architectures, these libraries integrate easily into existing evaluation pipelines and can be called programmatically during automated training and validation workflows.

FAQ

What is a good FID score?

There is no absolute threshold that defines a "good" FID score because the value depends on the dataset, image resolution, and evaluation protocol. On standard benchmarks like CIFAR-10, state-of-the-art models achieve FID scores in the low single digits (typically 2 to 5). On more complex datasets like ImageNet at 256x256 resolution, competitive scores range from roughly 2 to 10.

The most meaningful use of FID is relative comparison: if Model A achieves a lower FID than Model B on the same dataset with the same evaluation protocol, Model A is producing more realistic and diverse images. Comparing FID scores across different datasets or preprocessing pipelines is not reliable.

How is FID different from Inception Score?

Inception Score (IS) evaluates generated images without comparing them to a reference set of real images. It measures whether the generated images are individually recognizable (high classification confidence) and collectively diverse (uniform distribution across classes). FID improves on this by explicitly comparing generated images to real images, capturing differences in both visual quality and distributional similarity.

FID is generally considered more informative because a model could achieve a high Inception Score while still producing images that look nothing like the target dataset. FID detects this kind of distributional mismatch.

Can FID be used for non-image data?

The core idea behind FID, comparing distributions of feature embeddings using the Frechet distance, can be adapted to other data types. Researchers have applied analogous metrics to audio generation (Frechet Audio Distance), video generation (Frechet Video Distance), and text generation. The key requirement is a pretrained feature extractor appropriate for the data type. For images, Inception v3 serves this role. For audio, a pretrained audio classification network fills the same function. The mathematical framework remains the same; only the feature extractor changes.

Why do FID scores sometimes vary across implementations?

FID scores can differ between implementations due to subtle differences in image preprocessing, such as the interpolation method used for resizing images to 299x299 pixels. Bilinear, bicubic, and other interpolation methods produce slightly different resized images, which in turn produce different Inception features and different FID scores.

The clean-fid library was developed specifically to address this problem by standardizing the preprocessing pipeline. When comparing FID scores across papers or codebases, it is important to verify that the same preprocessing and implementation were used.

Does a low FID guarantee good image quality?

A low FID indicates that the generated image distribution is statistically similar to the real image distribution in the Inception feature space. It does not guarantee that every individual generated image is of high quality. FID is an aggregate metric that can mask problems in individual samples. A model could produce mostly excellent images with occasional severe failures and still achieve a low FID.

For applications where individual image quality matters, human evaluation and per-image quality metrics should supplement FID. Additionally, FID does not assess whether conditional outputs match their prompts, so it should be combined with alignment metrics for text-to-image or other conditional generation tasks.

How many images are needed for a reliable FID calculation?

The standard recommendation is to use at least 50,000 images for both the real and generated sets. Research has shown that FID estimates become unstable with fewer than 10,000 images, exhibiting higher variance and potential bias. If computational constraints make generating 50,000 images impractical, the sample size should be reported alongside the FID score so that readers can assess the reliability of the estimate.

Some recent work has proposed bias-corrected FID variants for small sample sizes, but the 50,000-image convention remains the most widely accepted standard for published benchmarks.
