DALL-E: How It Works, What It Can Do, and Practical Guide

Learn how DALL-E generates images from text prompts using diffusion models. Explore its capabilities, use cases, limitations, and how to get started.

What Is DALL-E?

DALL-E is a text-to-image generation model developed by OpenAI that creates original images from natural language descriptions. A user types a prompt describing what they want to see, and the model produces a visual interpretation of that description, ranging from photorealistic photographs to stylized illustrations, abstract compositions, or technical diagrams.

The model sits within the broader category of generative AI, which refers to systems that produce new content rather than classifying or analyzing existing data. Where predictive models identify patterns in information, generative models synthesize entirely new outputs. DALL-E applies this principle specifically to visual content, translating language into pixel-level compositions.

What distinguishes DALL-E from earlier image generation tools is its ability to interpret complex, multi-element prompts with spatial and contextual awareness. A user can describe "a corgi wearing a beret, sitting in a Parisian cafe, painted in watercolor style," and the model will attempt to compose all of those elements into a coherent scene. This compositional reasoning separates modern text-to-image systems from simpler template-based or filter-based approaches.

DALL-E is accessed primarily through OpenAI's ChatGPT interface and the OpenAI API, making it available to both individual users and development teams integrating image generation into products and workflows.

How DALL-E Works

Understanding the technical architecture behind DALL-E helps clarify both its capabilities and its constraints. The system relies on a combination of deep learning techniques that have matured significantly over the last few years.

The Diffusion Model Architecture

DALL-E 2 and DALL-E 3 use a diffusion model as their core architecture, whereas the original 2021 DALL-E used an autoregressive transformer. Diffusion models work by learning to reverse a noise-adding process. During training, the model is shown millions of images that are progressively corrupted with random noise until they become pure static. The model then learns to reverse this process, recovering a clean image from noise, step by step.

When generating a new image, the model starts with random noise and applies its learned denoising process, guided by the text prompt. Each denoising step brings the image closer to a coherent visual that matches the prompt's description. This process typically runs for dozens of iterations before producing the final output.

The diffusion approach gives DALL-E several advantages over older generation techniques. It produces higher-quality images with finer detail, handles a broader range of visual styles, and generates more coherent compositions when given complex prompts.
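The noising and denoising loop described above can be sketched on a toy three-value "image". This is a minimal illustration, not DALL-E's actual implementation: the linear noise schedule, the deterministic (DDIM-style) reverse step, and every function name here are assumptions. In particular, `oracle_predict_noise` is a stand-in for the trained network and cheats by looking at the clean signal, which a real model cannot do.

```python
import math
import random


def make_alpha_bars(steps, beta_start=1e-4, beta_end=0.2):
    """Cumulative signal-retention factors for a linear noise schedule (assumed)."""
    alpha_bars = [1.0]  # index 0 means "clean image, no noise added"
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / max(steps - 1, 1)
        alpha_bars.append(alpha_bars[-1] * (1.0 - beta))
    return alpha_bars


def add_noise(x0, alpha_bar, rng):
    """Forward process: blend the clean signal with Gaussian noise."""
    keep, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [keep * v + noise * rng.gauss(0.0, 1.0) for v in x0]


def oracle_predict_noise(x_t, x0, alpha_bar):
    """Stand-in for the trained network: recovers the noise because it is given x0."""
    keep, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [(xt - keep * v) / noise for xt, v in zip(x_t, x0)]


def denoise(x_t, x0, alpha_bars):
    """Reverse process: walk from the noisiest step back to step 0."""
    for t in range(len(alpha_bars) - 1, 0, -1):
        ab_t, ab_prev = alpha_bars[t], alpha_bars[t - 1]
        eps = oracle_predict_noise(x_t, x0, ab_t)
        # Estimate the clean signal, then re-noise it to the previous (less noisy) level.
        x0_hat = [(xt - math.sqrt(1.0 - ab_t) * e) / math.sqrt(ab_t)
                  for xt, e in zip(x_t, eps)]
        x_t = [math.sqrt(ab_prev) * h + math.sqrt(1.0 - ab_prev) * e
               for h, e in zip(x0_hat, eps)]
    return x_t


rng = random.Random(0)
clean = [0.1, 0.5, 0.9]
schedule = make_alpha_bars(steps=50)
noisy = add_noise(clean, schedule[-1], rng)
restored = denoise(noisy, clean, schedule)
```

In a real system the oracle is replaced by a neural network that predicts the noise from the noisy image, the step index, and the encoded text prompt, so the output is a plausible new image rather than an exact reconstruction.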

Text Encoding and Prompt Interpretation

Before the diffusion process begins, DALL-E must convert the text prompt into a numerical representation the model can use. This happens through a text encoder, typically based on a transformer architecture similar to those used in large language models.

The text encoder maps each word and phrase in the prompt to a high-dimensional vector that captures its semantic meaning. These vectors guide the diffusion process at every step, ensuring the generated image stays aligned with the user's description. The quality of this text encoding determines how accurately the model follows instructions, which is why prompt structure matters significantly.

Training Data and Model Learning

DALL-E is trained on large datasets of image-text pairs sourced from the internet. During training, the model learns statistical relationships between visual patterns and language descriptions. It does not store or retrieve specific images. Instead, it learns general visual concepts: what "sunset" looks like across thousands of examples, how "oil painting" textures differ from "digital illustration," and how spatial relationships like "next to" or "above" translate into pixel arrangements.

This statistical learning approach means DALL-E can combine concepts it has seen separately during training into novel compositions it has never encountered. A user can request something that likely does not exist in any training image, and the model will attempt to construct it from its learned understanding of each individual element.

| Component | Function | Key Detail |
|---|---|---|
| Diffusion model | Generates the image by iteratively denoising random noise, guided by the prompt | Learns to reverse a noise-adding process; generation typically runs for dozens of steps |
| Text encoder | Converts the prompt into numerical vectors that guide every denoising step | Typically transformer-based, similar to the architectures used in large language models |
| Training data | Teaches the model statistical relationships between visual patterns and language | Large datasets of image-text pairs; the model learns general concepts rather than storing images |

What DALL-E Can Do

DALL-E's capabilities extend well beyond simple image generation. The model supports several distinct workflows that serve different creative and operational needs.

Text-to-Image Generation

The primary capability is generating images from text prompts. Users describe what they want, and DALL-E produces an original image. The range of possible outputs is broad: photorealistic portraits, product mockups, architectural visualizations, scientific diagrams, fantasy illustrations, and abstract compositions all fall within the model's capability.

Prompt specificity directly affects output quality. A prompt like "a building" produces generic results. A prompt like "a three-story Art Deco apartment building in Miami, white facade with turquoise accents, golden hour lighting, shot from street level" produces something much closer to a specific creative vision.

Developing effective prompting techniques is an essential skill for getting consistent, high-quality results.

Image Editing and Inpainting

DALL-E supports editing existing images by modifying selected regions while preserving the rest. This feature, called inpainting, allows users to select a portion of an image and describe what should replace it. The model generates new content for the selected area that blends seamlessly with the surrounding context.

Inpainting is practical for tasks like removing unwanted objects from photographs, changing backgrounds, adding elements to existing compositions, or correcting details in generated images. The model maintains lighting, perspective, and style consistency between the edited region and the original image.

Style Variation and Iteration

Users can generate multiple variations of the same concept by adjusting prompt language, specifying different artistic styles, or requesting the model to produce several alternatives. This makes DALL-E useful for exploratory design work where the goal is to evaluate multiple directions before committing to one.

A marketing team exploring visual concepts for a campaign might generate dozens of variations from a single prompt, adjusting style, composition, and tone to find the direction that best serves the project. This iterative approach accelerates the early stages of creative production where brainstorming and exploration consume the most time.
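One way to organize this kind of exploration is to generate the prompt variations programmatically before sending any of them to the model. The sketch below is only an illustration: the function name and the "style" and "mood" axes are hypothetical choices, not part of any DALL-E interface.

```python
from itertools import product


def prompt_variations(subject, styles, moods):
    """Return one prompt per (style, mood) combination for the same subject."""
    return [
        f"{subject}, {style} style, {mood} mood"
        for style, mood in product(styles, moods)
    ]


variations = prompt_variations(
    "a corgi wearing a beret in a Parisian cafe",
    styles=["watercolor", "flat illustration"],
    moods=["playful", "calm"],
)
for prompt in variations:
    print(prompt)
```

Keeping the subject fixed and varying one or two axes at a time makes it easier to attribute differences in the output to a specific change in wording.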

Transparency and Context-Aware Composition

Recent versions of DALL-E demonstrate improved ability to handle transparency, layered compositions, and context-aware placement. The model can generate images with transparent backgrounds for use in design workflows, compose multiple elements with correct spatial relationships, and maintain consistent lighting and perspective across complex scenes.

These technical improvements make the model more practical for production work where outputs need to integrate with existing design systems, presentations, or marketing materials rather than standing alone as isolated images.

Practical Use Cases

DALL-E's capabilities map to specific operational needs across several industries and professional contexts. The following applications represent established patterns where the tool delivers measurable value.

Marketing and Content Production

Content teams use DALL-E to produce visual assets for blog posts, social media, email campaigns, and advertising materials. The model generates custom illustrations, header images, and conceptual visuals faster than traditional design workflows allow. For teams producing high volumes of content, this reduces the bottleneck between editorial planning and visual execution.

The practical advantage is not just speed. DALL-E enables teams to produce custom visuals for every piece of content rather than relying on generic stock photography. Custom images can perform better on engagement metrics when they are tailored to the specific topic and audience rather than serving as visual placeholders.

Product Design and Prototyping

Design teams use DALL-E during early-stage ideation to generate visual concepts quickly. Industrial designers, architects, and product developers describe concepts in natural language and receive visual representations they can evaluate, share with stakeholders, and iterate on before investing time in detailed CAD work or manual illustration.

This workflow compresses the concept exploration phase. Instead of spending hours sketching each variation by hand, a designer can generate and evaluate dozens of directions in minutes, then refine the most promising options through traditional design tools.

Education and Training Materials

Educators and instructional designers use DALL-E to create custom visuals for learning materials. Scientific illustrations, historical scene reconstructions, conceptual diagrams, and scenario-based imagery can be generated to match specific curriculum needs rather than relying on whatever stock images happen to be available.

For organizations building online training programs, DALL-E provides a way to create visually rich course materials without maintaining a dedicated illustration team. Custom visuals can improve learner engagement when they are designed to clarify the specific concept being taught rather than serving a decorative function.

Accessibility and Communication

DALL-E can generate visual representations of abstract concepts that are difficult to communicate through text alone. Technical teams use it to create diagrams, process visualizations, and conceptual illustrations that make complex ideas more accessible to non-technical audiences.

This application is particularly relevant for organizations training diverse teams across multiple skill levels. Visual representations reduce the cognitive load of processing dense written content and provide alternative entry points for understanding complex topics.

Limitations and Challenges

DALL-E is a powerful tool, but understanding its constraints is essential for using it effectively and responsibly.

Text Rendering and Fine Detail

DALL-E struggles with generating accurate text within images. While recent versions have improved significantly, the model can still produce misspelled words, malformed letters, or inconsistent typography. Any workflow requiring precise text in generated images should plan for manual correction or use separate text overlay tools.

Fine details like hands, fingers, and complex mechanical parts also remain challenging. The model sometimes produces anatomical inconsistencies or physically impossible configurations, particularly in complex poses or multi-figure compositions.

Prompt Sensitivity

Small changes in prompt wording can produce dramatically different results. This sensitivity means achieving a specific output often requires multiple iterations and careful prompt refinement. Users accustomed to deterministic tools may find this variability frustrating initially.

The relationship between prompt and output is not linear. Adding more detail to a prompt does not always improve the result. Sometimes simplifying a prompt produces better output because the model has fewer competing instructions to resolve. Learning this balance requires practice and familiarity with how the model interprets language.

Copyright and Intellectual Property

The legal status of AI-generated images remains unsettled in most jurisdictions. Questions about copyright ownership, the use of copyrighted training data, and the extent to which AI outputs can be protected as original works are still being debated by courts and legislators worldwide.

Organizations using DALL-E for commercial purposes should establish clear internal policies regarding AI governance and output attribution. Some industries require disclosure when content is AI-generated, and these requirements are likely to expand as regulatory frameworks mature.

A cautious approach includes documenting the creation process, maintaining human oversight over final outputs, and avoiding generation of content that closely mimics identifiable artistic styles.

Bias and Representation

DALL-E's training data reflects the biases present in the images and text available on the internet. The model can produce outputs that reinforce stereotypes, underrepresent certain demographics, or default to narrow visual conventions unless prompted otherwise.

OpenAI has implemented safety filters and bias mitigation measures, but no system eliminates bias entirely. Users should review generated content critically, particularly for materials intended for diverse audiences or sensitive contexts. Understanding the different types of AI and their inherent limitations helps teams set appropriate expectations and build review processes into their workflows.

Rate Limits and Cost

DALL-E access through OpenAI is metered. Users on free tiers have limited generation capacity, and API usage incurs per-image costs that can accumulate quickly in high-volume workflows. Organizations planning to integrate DALL-E into production systems need to budget for ongoing usage costs and plan for rate limits during peak demand.
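A rough budget can be estimated with simple arithmetic before committing to a high-volume workflow. The sketch below is an assumption-laden illustration: the function is hypothetical, and the per-image price used in the example is a placeholder, not a quoted OpenAI rate. Substitute the current figure from the pricing page.

```python
import math


def estimate_monthly_cost(images_per_day, price_per_image, days=30, retry_rate=0.0):
    """Estimate image count and spend, inflating volume by the share of regenerated images."""
    total_images = math.ceil(images_per_day * days * (1.0 + retry_rate))
    return total_images, round(total_images * price_per_image, 2)


# Placeholder inputs: 200 images per day, 25% regenerated, 0.04 per image (hypothetical price).
image_count, monthly_cost = estimate_monthly_cost(200, 0.04, retry_rate=0.25)
print(image_count, monthly_cost)
```

Including a retry rate matters because prompt iteration means the number of images generated is usually higher than the number of images actually used.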

How to Get Started with DALL-E

Getting started with DALL-E is straightforward, but achieving consistent, high-quality results requires deliberate practice and workflow design.

Access Options

DALL-E is available through several channels, each suited to different use cases:

- ChatGPT. The simplest access point. Users can generate images directly within the chat interface by describing what they want. The free tier offers limited generation, while a ChatGPT Plus or Team subscription provides more capacity. This is ideal for individual use, quick prototyping, and exploratory work.

- OpenAI API. Developers and organizations can access DALL-E programmatically through the API, enabling integration into custom applications, automated workflows, and production systems. The API provides more control over parameters like image size, quality settings, and generation count.

- Microsoft Copilot. DALL-E powers image generation within Microsoft's Copilot products, making it accessible within the Microsoft 365 ecosystem for users who work primarily in those tools.
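For the API route, a request might look like the sketch below. It assumes the official `openai` Python package is installed, that an API key is available in the `OPENAI_API_KEY` environment variable, and that the `dall-e-3` model name and the `size` and `quality` values shown are still accepted. The two helper functions are hypothetical wrappers written for this example, so check the current API reference before relying on any of it.

```python
def build_image_request(prompt, size="1024x1024", quality="standard"):
    """Assemble keyword arguments for an image generation call (assumed parameters)."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return {
        "model": "dall-e-3",  # assumed model name
        "prompt": prompt,
        "size": size,
        "quality": quality,
        "n": 1,  # one image per request
    }


def generate_image_url(prompt, **options):
    """Send the request and return the URL of the generated image."""
    from openai import OpenAI  # imported lazily so build_image_request works without the package

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.images.generate(**build_image_request(prompt, **options))
    return response.data[0].url


request = build_image_request("a corgi wearing a beret, watercolor style")
```

Separating request construction from the network call keeps the parameters easy to log, review, and test without spending generation credits.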

Building Effective Prompts

The single most important skill for getting value from DALL-E is prompt construction. Effective prompts follow a consistent structure:

- Subject. What is the main focus of the image.

- Setting. Where the subject exists, the environment and context.

- Style. The artistic approach, whether photorealistic, watercolor, flat illustration, or another visual language.

- Technical details. Lighting, camera angle, color palette, aspect ratio, and level of detail.

- Exclusions. What should not appear in the image.

Starting with clear, specific prompts and iterating based on results is more effective than writing long, complex prompts on the first attempt. Teams building AI communication skills find that prompt writing improves rapidly with structured practice.
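The five-part structure above can be captured in a small helper so that every prompt a team writes follows the same order. This is a minimal sketch: the `PromptSpec` class, its field names, and the "Do not include" phrasing for exclusions are illustrative assumptions, not a format that DALL-E requires.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    """Hypothetical container for the subject, setting, style, details, and exclusions."""
    subject: str
    setting: str = ""
    style: str = ""
    technical: List[str] = field(default_factory=list)
    exclusions: List[str] = field(default_factory=list)

    def render(self):
        """Join the non-empty parts in a fixed order and append exclusions last."""
        parts = [self.subject, self.setting, self.style, *self.technical]
        text = ", ".join(part for part in parts if part)
        if self.exclusions:
            text += ". Do not include " + ", ".join(self.exclusions)
        return text


spec = PromptSpec(
    subject="a three-story Art Deco apartment building",
    setting="on a street in Miami",
    style="photorealistic",
    technical=["golden hour lighting", "shot from street level"],
    exclusions=["people", "cars"],
)
print(spec.render())
```

Because only the subject is required, the same helper supports starting with a short prompt and adding one component at a time while iterating.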

Workflow Integration

For DALL-E to deliver consistent value, it needs to fit into existing production workflows rather than operating as an isolated tool. Practical integration strategies include:

- Batch generation. Generate multiple variations during dedicated sessions rather than one-off requests scattered throughout the day. This improves efficiency and makes it easier to compare options.

- Template development. Build reusable prompt templates for recurring image types. A team that regularly produces blog header images, for example, benefits from a standardized prompt structure that maintains visual consistency across content.

- Human review checkpoints. Establish review steps where a human evaluates generated outputs before they move into production. This catches quality issues, bias problems, and style inconsistencies before they reach the audience.

- Post-processing pipeline. Plan for light editing after generation. Most professional uses of DALL-E involve at least minor adjustments in tools like Photoshop or Figma, such as adding text overlays, correcting colors, cropping to specific dimensions, or combining generated elements with existing design assets.

Organizations that treat DALL-E as one component in a structured creative workflow, rather than a replacement for the entire workflow, consistently achieve better results and avoid the quality inconsistencies that come from over-reliance on any single tool.

FAQ

Can DALL-E generate images for free?

DALL-E offers limited free generation through ChatGPT's free tier, but consistent use requires a paid subscription. ChatGPT Plus includes a monthly allocation of image generations. API access is pay-per-use, with pricing based on image resolution and quality settings. For production-level usage, budgeting for ongoing costs is necessary.

How does DALL-E differ from Midjourney and Stable Diffusion?

DALL-E, Midjourney, and Stable Diffusion each prioritize different strengths. DALL-E excels at prompt adherence and integration with the OpenAI ecosystem. Midjourney is known for producing highly aesthetic, stylized images with minimal prompting. Stable Diffusion is open-source, offering maximum customization and the ability to run locally. The best choice depends on the specific workflow requirements, budget, and technical capacity of the team.

Comparing AI model approaches helps clarify how different architectures lead to different practical trade-offs.

Is DALL-E output copyrightable?

Copyright law for AI-generated images is still evolving. In most jurisdictions, pure AI output without significant human creative input is not eligible for copyright protection. However, images produced through substantial human direction, editing, and curation may qualify. Organizations should consult legal counsel for jurisdiction-specific guidance and maintain documentation of the human creative process involved in producing final assets.

What are the content restrictions?

OpenAI enforces content policies that restrict generation of violent, sexual, deceptive, or hateful imagery. The model also limits generation of realistic images depicting identifiable public figures. These restrictions are enforced through both prompt filtering and output review systems. Attempts to circumvent these restrictions can result in account suspension.

Can DALL-E be used for commercial projects?

Yes. OpenAI grants users full commercial rights to images generated through DALL-E, provided they comply with OpenAI's usage policies. This includes use in marketing materials, products, publications, and client deliverables. The key constraint is compliance with content policies and applicable intellectual property laws in the relevant jurisdiction.

Further reading

12 Best Free and AI Chrome Extensions for Teachers in 2025

Free AI Chrome extensions tailored for teachers: Explore a curated selection of professional-grade tools designed to enhance classroom efficiency, foster student engagement, and elevate teaching methodologies.

AgentOps: Tools and Practices for Managing AI Agents in Production

Learn what AgentOps is, why it matters for AI agent deployments, the core components of observability, cost tracking, and governance, and how to implement AgentOps in your organization.

.avif)

25+ Best ChatGPT Prompts for Instructional Designers

Discover over 25 best ChatGPT prompts tailored for instructional designers to enhance learning experiences and creativity.

What Is a Neural Net Processor (NPU)? Definition, Architecture, and Use Cases

Learn what a neural net processor is, how NPUs accelerate AI workloads through dedicated hardware, how they compare to GPUs and CPUs, and where they are deployed across industries.

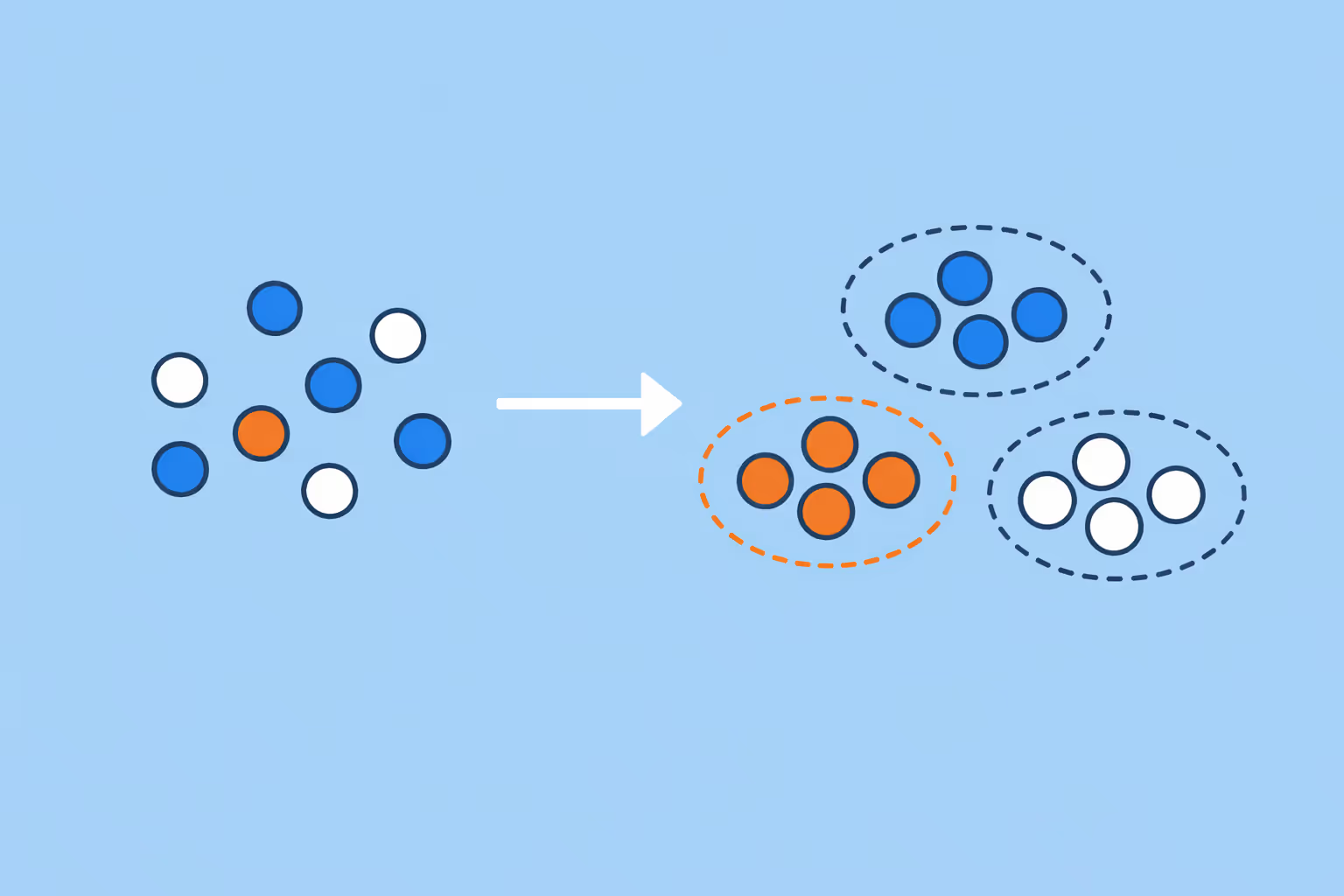

Clustering in Machine Learning: Methods, Use Cases, and Practical Guide

Clustering in machine learning groups unlabeled data by similarity. Learn the key methods, real-world use cases, and how to choose the right approach.

Neural Radiance Field (NeRF): How It Works, Use Cases, and Practical Guide

Learn what a neural radiance field is, how NeRF reconstructs 3D scenes from 2D images, its real-world applications, and the key challenges practitioners face.