Amazon Bedrock: A Complete Guide to AWS's Generative AI Platform

Amazon Bedrock is AWS's fully managed service for building generative AI applications. Learn how it works, key features, use cases, and how it compares to alternatives.

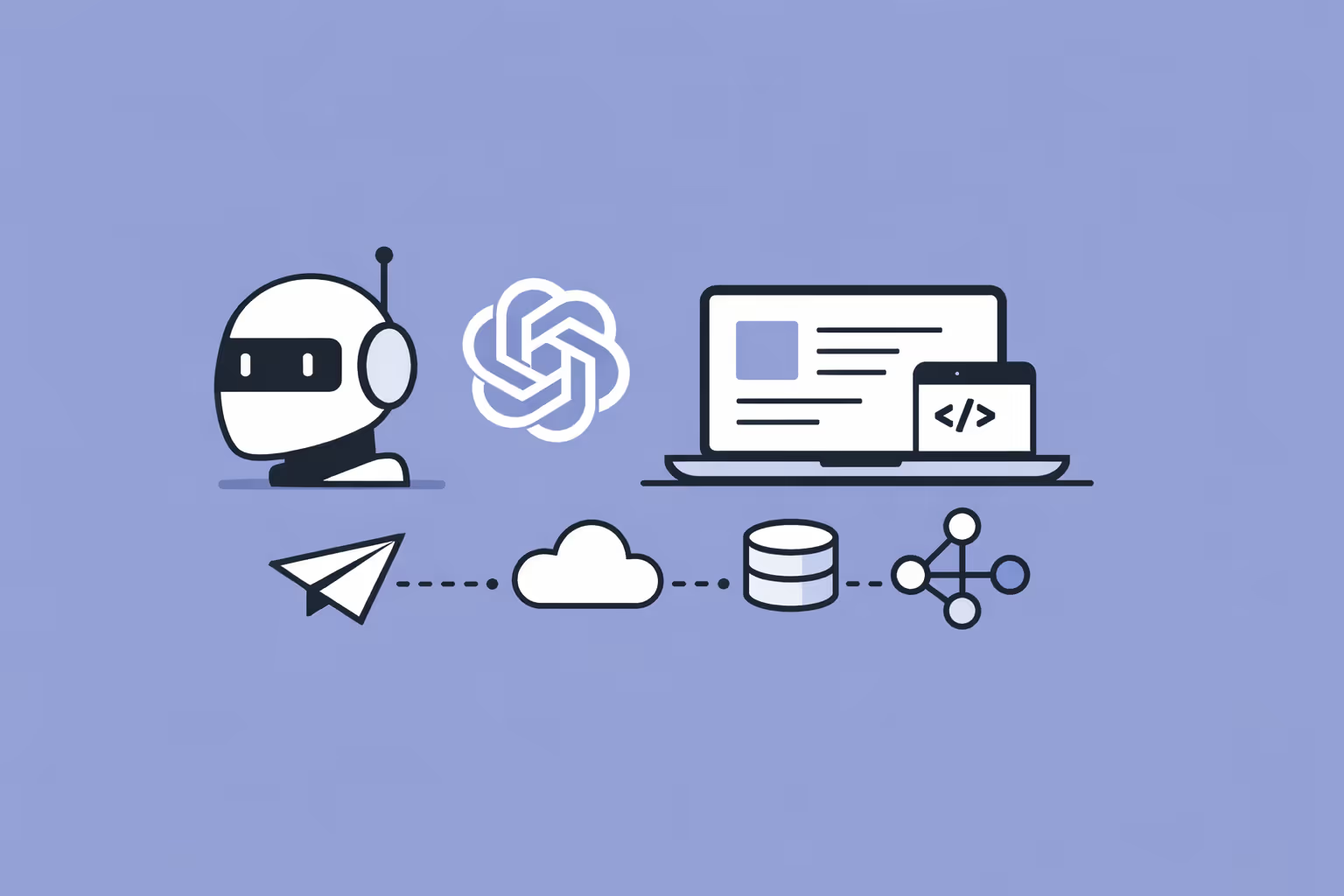

What Is Amazon Bedrock?

Amazon Bedrock is a fully managed service from Amazon Web Services (AWS) that gives organizations access to foundation models from multiple AI providers through a single API. Rather than requiring teams to host, scale, and maintain large language models on their own infrastructure, Bedrock abstracts that complexity away. You select a model, send requests, and get responses, without managing servers, containers, or GPU clusters.

The service sits within the broader AWS ecosystem, which means it integrates natively with services like Amazon S3, AWS Lambda, Amazon CloudWatch, and IAM. For organizations already running workloads on AWS, this eliminates the friction of stitching together a separate AI stack. For those evaluating cloud-based AI platforms, it represents one of the most comprehensive managed offerings available.

What makes Bedrock distinct from general-purpose AI APIs is model choice. The platform hosts foundation models from Anthropic, Meta, Mistral AI, Cohere, Stability AI, and Amazon's own Titan family. This multi-provider approach lets teams evaluate and switch between models with minimal changes to application code, although request formats and supported parameters can differ from model to model. A single integration point gives access to a range of capabilities across text generation, embeddings, image generation, and more.

Bedrock is not a model training platform. It is a model consumption and customization platform. You do not build foundation models from scratch here. Instead, you use pre-trained models as they are, fine-tune them with your own data, or build retrieval-augmented generation (RAG) pipelines that ground model outputs in your proprietary knowledge bases. This distinction matters for organizations navigating the types of AI approaches available to them.

How Amazon Bedrock Works

Understanding Bedrock requires breaking it into three layers: model access, customization, and orchestration. Each layer builds on the previous one, and organizations can adopt them incrementally.

Model Access via a Unified API

At the foundation level, Bedrock provides a standardized API for invoking foundation models. You send a prompt to the InvokeModel endpoint, specify which model you want, and receive a response. The API handles authentication, routing, scaling, and inference execution behind the scenes.
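As a rough illustration of that request and response cycle, the sketch below uses the `invoke_model` call from boto3 (the AWS SDK for Python) with the Anthropic Messages body format. The helper names `build_claude_body` and `invoke_text` are hypothetical, and the body layout applies to Anthropic models only; other providers expect different fields.

```python
import json


def build_claude_body(prompt: str, max_tokens: int = 256) -> str:
    """Serialize a request body in the Anthropic Messages format used on Bedrock."""
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    })


def invoke_text(client, model_id: str, prompt: str) -> str:
    """Call InvokeModel and return the first text block of the reply."""
    response = client.invoke_model(
        modelId=model_id,
        body=build_claude_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    # The response body is a stream of JSON bytes.
    payload = json.loads(response["body"].read())
    return payload["content"][0]["text"]


# Usage (needs AWS credentials and model access enabled in the account;
# the model ID is an example and should be checked against your region):
#   import boto3
#   client = boto3.client("bedrock-runtime", region_name="us-east-1")
#   print(invoke_text(client, "anthropic.claude-3-haiku-20240307-v1:0", "Hello"))
```

Passing the client in as an argument keeps the helper easy to exercise with a stub during development, before any real requests are billed.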

This abstraction is significant. Running a large language model at production scale normally requires provisioned GPU instances, model weight management, load balancing, and latency optimization. Bedrock eliminates all of that. You pay per token processed, the infrastructure scales automatically, and you never interact with the underlying compute.

The API supports both synchronous and streaming responses. Streaming is critical for interactive applications where users expect to see output as it generates rather than waiting for the full response. Bedrock also supports batch inference for high-volume, non-interactive workloads like document processing or data classification.
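For streaming, one option is the `converse_stream` call in boto3, which returns a sequence of events rather than a single body. The sketch below is a minimal example under that assumption; `iter_text_deltas` and `stream_reply` are hypothetical helper names, and only text deltas are handled.

```python
from typing import Iterable, Iterator


def iter_text_deltas(events: Iterable[dict]) -> Iterator[str]:
    """Yield text fragments from ConverseStream events, skipping other event types."""
    for event in events:
        delta = event.get("contentBlockDelta", {}).get("delta", {})
        if "text" in delta:
            yield delta["text"]


def stream_reply(client, model_id: str, prompt: str) -> str:
    """Print fragments as they arrive and return the assembled reply."""
    response = client.converse_stream(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    parts = []
    for fragment in iter_text_deltas(response["stream"]):
        print(fragment, end="", flush=True)  # show output incrementally
        parts.append(fragment)
    return "".join(parts)


# Usage (assumes a boto3 "bedrock-runtime" client and an enabled model):
#   import boto3
#   client = boto3.client("bedrock-runtime")
#   stream_reply(client, "anthropic.claude-3-haiku-20240307-v1:0", "Explain RAG briefly")
```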

Model Customization and Fine-Tuning

Bedrock allows organizations to customize foundation models using their own data. Two mechanisms are available.

Fine-tuning adjusts a model's weights using labeled training data. This is appropriate when you need a model to adopt specific behaviors, terminology, or output formats that the base model does not reliably produce. Fine-tuning creates a private copy of the model that only your account can access.

Continued pre-training exposes a model to large volumes of unlabeled domain-specific text. This is useful when the base model lacks knowledge of your industry's vocabulary, concepts, or patterns. Unlike fine-tuning, continued pre-training does not require labeled examples; it learns from raw text exposure.

Both approaches keep your data private. Training data is not shared with model providers and is not used to improve the base models. This is a critical consideration for organizations handling sensitive information, particularly those with strict compliance and data governance requirements.
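To make the difference between the two mechanisms concrete, the sketch below assembles arguments for the `create_model_customization_job` call on the boto3 `bedrock` client. The function name `customization_job_args` and all example values are hypothetical, and hyperparameter names and allowed values depend on the base model, so treat them as placeholders.

```python
def customization_job_args(
    job_name: str,
    base_model_id: str,
    role_arn: str,
    training_s3_uri: str,
    output_s3_uri: str,
    hyperparameters: dict,
    labeled: bool,
) -> dict:
    """Build keyword arguments for a Bedrock model customization job.

    labeled=True selects fine-tuning (labeled examples);
    labeled=False selects continued pre-training (raw, unlabeled text).
    """
    return {
        "jobName": job_name,
        "customModelName": f"{job_name}-model",
        "roleArn": role_arn,
        "baseModelIdentifier": base_model_id,
        "customizationType": "FINE_TUNING" if labeled else "CONTINUED_PRE_TRAINING",
        "trainingDataConfig": {"s3Uri": training_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        # Values are passed as strings; valid keys vary by base model.
        "hyperParameters": {k: str(v) for k, v in hyperparameters.items()},
    }


# Usage (all identifiers below are placeholders, not real resources):
#   import boto3
#   args = customization_job_args(
#       "support-tone", "<base-model-id>", "<iam-role-arn>",
#       "s3://<bucket>/train.jsonl", "s3://<bucket>/output/",
#       {"epochCount": 2}, labeled=True)
#   boto3.client("bedrock").create_model_customization_job(**args)
```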

Agents and Orchestration

Bedrock Agents allow you to build applications where a foundation model can reason through multi-step tasks and take actions. You define an agent with a set of instructions, connect it to APIs or data sources through action groups, and the agent determines how to fulfill user requests by chaining model calls with tool invocations.

For example, an agent connected to an HR system, a knowledge base, and a scheduling API could handle a request like "find our parental leave policy, summarize the key provisions, and schedule a meeting with HR to discuss my eligibility." The agent decomposes this into subtasks, executes each one, and assembles a coherent response.
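From the application's side, a request like this is a single call to an already configured agent. The sketch below assumes the `invoke_agent` call on the boto3 `bedrock-agent-runtime` client; `ask_agent` is a hypothetical helper, and the agent ID, alias ID, and session ID are placeholders for values created when the agent is set up.

```python
def ask_agent(client, agent_id: str, agent_alias_id: str, session_id: str, text: str) -> str:
    """Send one user request to a Bedrock agent and assemble its streamed reply."""
    response = client.invoke_agent(
        agentId=agent_id,
        agentAliasId=agent_alias_id,
        sessionId=session_id,  # reuse the same ID to continue a conversation
        inputText=text,
    )
    parts = []
    for event in response["completion"]:
        chunk = event.get("chunk")
        if chunk and "bytes" in chunk:
            parts.append(chunk["bytes"].decode("utf-8"))
        # Other event types (for example trace events) are ignored here.
    return "".join(parts)


# Usage (placeholders, not real identifiers):
#   import boto3
#   client = boto3.client("bedrock-agent-runtime")
#   print(ask_agent(client, "<agent-id>", "<alias-id>", "session-001",
#                   "Find our parental leave policy and summarize the key provisions."))
```

The subtask planning and tool calls happen inside the service; the caller only sees the final streamed text unless tracing is enabled.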

This orchestration layer transforms Bedrock from a simple model API into a platform for building sophisticated AI-powered workflows. It is particularly relevant for organizations pursuing digital transformation initiatives where AI needs to interact with existing business systems rather than operate in isolation.

| Component | Function | Key Detail |
|---|---|---|
| Model Access via a Unified API | Provides a standardized API for invoking foundation models. | The API handles authentication, routing, scaling, and inference execution. |
| Model Customization and Fine-Tuning | Lets organizations customize foundation models using their own data. | Two mechanisms are available: fine-tuning and continued pre-training. |
| Agents and Orchestration | Lets a foundation model reason through multi-step tasks and take actions. | You define an agent with a set of instructions and connect it to APIs or data sources through action groups. |

Key Features and Capabilities

Knowledge Bases and RAG

Bedrock Knowledge Bases let you connect foundation models to your proprietary data sources. You point Bedrock at documents stored in Amazon S3, and the service automatically chunks, embeds, and indexes them in a vector database. When a user asks a question, Bedrock retrieves relevant document chunks and includes them as context in the model's prompt.
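The chunk, embed, retrieve, and prompt steps can be sketched in plain Python. This is a toy stand-in for illustration only, not how Bedrock implements them: word counts replace a real embedding model, a list replaces the vector database, and every function name is hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40) -> list[str]:
    """Split a document into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system uses a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, context: list[str]) -> str:
    """Place retrieved chunks ahead of the question in the model prompt."""
    joined = "\n\n".join(context)
    return f"Answer using only the context below.\n\nContext:\n{joined}\n\nQuestion: {question}"
```

In the managed service these steps run for you; the sketch is only meant to show what "retrieves relevant document chunks and includes them as context" amounts to.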

This retrieval-augmented generation approach reduces hallucination on domain-specific questions, though it does not eliminate it. Instead of relying solely on what the model learned during pre-training, it grounds responses in your actual documents, policies, manuals, or datasets. Responses can include citations to the retrieved sources, giving users a way to verify the information.
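A minimal sketch of querying a knowledge base and collecting its source references, assuming the `retrieve_and_generate` call on the boto3 `bedrock-agent-runtime` client and documents stored in S3. The helper name `ask_knowledge_base` is hypothetical, and the knowledge base ID and model ARN are placeholders.

```python
def ask_knowledge_base(client, kb_id: str, model_arn: str, question: str) -> tuple[str, list[str]]:
    """Return the generated answer and the distinct S3 URIs it cites."""
    response = client.retrieve_and_generate(
        input={"text": question},
        retrieveAndGenerateConfiguration={
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": kb_id,
                "modelArn": model_arn,
            },
        },
    )
    sources: list[str] = []
    for citation in response.get("citations", []):
        for ref in citation.get("retrievedReferences", []):
            uri = ref.get("location", {}).get("s3Location", {}).get("uri")
            if uri and uri not in sources:
                sources.append(uri)
    return response["output"]["text"], sources


# Usage (placeholders, not real identifiers):
#   import boto3
#   client = boto3.client("bedrock-agent-runtime")
#   answer, sources = ask_knowledge_base(client, "<kb-id>", "<model-arn>",
#                                        "What is our parental leave policy?")
```

Showing the returned URIs next to the answer is one simple way to let users check a response against the underlying document.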

Knowledge Bases support multiple vector store backends, including Amazon OpenSearch Serverless, Amazon Aurora, Pinecone, and Redis Enterprise Cloud. Organizations with existing vector databases can connect them directly rather than migrating data.

Guardrails

Bedrock Guardrails provide configurable safeguards for AI applications. You define policies that filter harmful content, block denied topics, redact personally identifiable information (PII), and constrain model outputs to appropriate boundaries.

Guardrails operate at the platform level, which means they apply consistently regardless of which foundation model you use. This is important for enterprises deploying AI across multiple use cases where each application may need different safety parameters. A customer-facing chatbot requires stricter content filtering than an internal code generation tool.
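Because guardrails are defined separately from any model, they can also be applied to text on their own. The sketch below assumes the `apply_guardrail` call on the boto3 `bedrock-runtime` client; `check_text` is a hypothetical helper, and the guardrail ID and version are placeholders for a guardrail you have already created.

```python
def check_text(client, guardrail_id: str, version: str, text: str,
               source: str = "INPUT") -> tuple[bool, str]:
    """Run text through a guardrail.

    Returns (intervened, text_to_use). When the guardrail intervenes, the
    service's replacement text (for example a masked or blocked message)
    is returned instead of the original.
    """
    response = client.apply_guardrail(
        guardrailIdentifier=guardrail_id,
        guardrailVersion=version,
        source=source,  # "INPUT" for user text, "OUTPUT" for model text
        content=[{"text": {"text": text}}],
    )
    intervened = response["action"] == "GUARDRAIL_INTERVENED"
    outputs = response.get("outputs", [])
    final_text = outputs[0]["text"] if intervened and outputs else text
    return intervened, final_text


# Usage (placeholders, not real identifiers):
#   import boto3
#   client = boto3.client("bedrock-runtime")
#   intervened, safe_text = check_text(client, "<guardrail-id>", "1", user_message)
```

A stricter guardrail for a customer-facing chatbot and a looser one for an internal tool would then differ only in the identifier passed in.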

For teams responsible for cybersecurity awareness and data protection, Guardrails offer a centralized mechanism to enforce AI safety policies without embedding filtering logic into every application.

Model Evaluation

Bedrock includes built-in tools for evaluating model performance. You can run automatic evaluations that measure accuracy, robustness, and toxicity across standard benchmarks. You can also set up human evaluation workflows where reviewers rate model outputs against custom criteria.

Model evaluation is essential for informed model selection. Rather than choosing a model based on marketing claims or general benchmarks, you can test multiple models against your specific use cases and data. This evidence-based approach to model selection parallels how organizations should approach measuring results in any technology investment.
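The idea of testing several models against your own cases can be sketched with a small harness. This is not Bedrock's evaluation feature; it is a hypothetical, simplified comparison that scores each candidate by exact-match accuracy, where each candidate is any function mapping a prompt to a reply.

```python
from typing import Callable


def exact_match_accuracy(generate: Callable[[str], str],
                         cases: list[tuple[str, str]]) -> float:
    """Fraction of cases where the reply equals the expected answer (case-insensitive)."""
    if not cases:
        return 0.0
    hits = sum(
        1 for prompt, expected in cases
        if generate(prompt).strip().lower() == expected.strip().lower()
    )
    return hits / len(cases)


def compare_models(candidates: dict[str, Callable[[str], str]],
                   cases: list[tuple[str, str]]) -> list[tuple[str, float]]:
    """Score every candidate on the same cases, best first."""
    scores = [(name, exact_match_accuracy(fn, cases)) for name, fn in candidates.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)
```

In practice each candidate function would wrap a call to a different model ID, and exact match would usually be replaced by a metric suited to the task, such as a rubric score from human reviewers.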

Provisioned Throughput

For workloads that require guaranteed performance, Bedrock offers provisioned throughput. You purchase model units that reserve dedicated inference capacity, ensuring consistent latency and throughput regardless of platform demand.

This is relevant for production applications with strict latency requirements or predictable high-volume workloads. On-demand pricing works well for development and variable workloads, but provisioned throughput provides the predictability that production SLAs demand.

Amazon Bedrock Use Cases

Enterprise Knowledge Assistants

The most common Bedrock deployment pattern is an internal knowledge assistant. Organizations connect Bedrock to their document repositories, policy manuals, product documentation, or training materials and build conversational interfaces that employees can query in natural language.

This directly impacts learning and development workflows. Instead of employees searching through folders, wikis, or SharePoint sites, they ask questions and receive precise, sourced answers.

The reduction in time-to-information translates directly into productivity gains, particularly during employee onboarding when new hires need to absorb large volumes of institutional knowledge quickly.

Intelligent Training and Education Platforms

Bedrock powers AI features in training platforms where personalized content delivery matters. A foundation model connected to a course catalog and learner profiles can recommend learning paths, generate practice questions, summarize complex material, and provide adaptive feedback based on learner performance.

This aligns with the broader shift toward adaptive learning, where AI tailors educational experiences to individual learners rather than delivering one-size-fits-all content. The model choice flexibility in Bedrock allows education technology teams to select the model that best balances quality, cost, and latency for each feature.

Organizations building or evaluating training programs should consider how Bedrock's RAG capabilities could ground AI tutors in their proprietary curriculum rather than relying on generic model knowledge.

Document Processing and Analysis

Bedrock handles document-heavy workflows where organizations need to extract, classify, summarize, or transform information at scale. Insurance claims processing, legal contract review, financial report analysis, and compliance document screening all fit this pattern.

The batch inference capability is particularly valuable here. Rather than processing documents one at a time through a chat interface, organizations submit thousands of documents for asynchronous processing. Results feed into downstream systems, dashboards, or human review queues.
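A batch job reads its requests from a JSON Lines file in S3, one record per document. The sketch below builds such a file and the arguments for the `create_model_invocation_job` call on the boto3 `bedrock` client. The helper names are hypothetical, the `modelInput` layout shown is the Anthropic Messages format and differs for other providers, and record ID rules should be checked against the current documentation.

```python
import json


def to_batch_jsonl(documents: dict[str, str], instruction: str, max_tokens: int = 512) -> str:
    """Build one JSON line per document, keyed by a caller-chosen record ID."""
    lines = []
    for record_id, text in documents.items():
        model_input = {
            "anthropic_version": "bedrock-2023-05-31",
            "max_tokens": max_tokens,
            "messages": [{
                "role": "user",
                "content": [{"type": "text", "text": f"{instruction}\n\n{text}"}],
            }],
        }
        lines.append(json.dumps({"recordId": record_id, "modelInput": model_input}))
    return "".join(line + "\n" for line in lines)


def batch_job_args(job_name: str, model_id: str, role_arn: str,
                   input_s3_uri: str, output_s3_uri: str) -> dict:
    """Keyword arguments for starting an asynchronous batch inference job."""
    return {
        "jobName": job_name,
        "modelId": model_id,
        "roleArn": role_arn,
        "inputDataConfig": {"s3InputDataConfig": {"s3Uri": input_s3_uri}},
        "outputDataConfig": {"s3OutputDataConfig": {"s3Uri": output_s3_uri}},
    }


# Usage (placeholders, not real resources): upload the JSONL text to S3, then
#   import boto3
#   boto3.client("bedrock").create_model_invocation_job(**batch_job_args(
#       "claims-batch", "<model-id>", "<iam-role-arn>",
#       "s3://<bucket>/input/records.jsonl", "s3://<bucket>/output/"))
```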

Teams tracking performance metrics can use Bedrock to automate the extraction and synthesis of data from unstructured reports, turning qualitative information into structured, analyzable datasets.

Customer-Facing Conversational AI

Bedrock supports customer service chatbots and virtual assistants that go beyond scripted decision trees. Connected to product databases, order management systems, and knowledge bases, a Bedrock-powered assistant can handle nuanced customer queries, process returns, troubleshoot technical issues, and escalate complex cases to human agents.

Guardrails help these customer-facing applications maintain a brand-appropriate tone and reduce the risk of disclosing sensitive internal information. The combination of flexible model selection, RAG grounding, and safety controls makes Bedrock a strong fit for conversational AI deployments where accuracy and safety are non-negotiable.

Amazon Bedrock vs. Alternatives

Choosing a generative AI platform requires understanding how Bedrock compares to other options in the market.

Amazon Bedrock vs. Azure OpenAI Service. Azure OpenAI provides access primarily to OpenAI's models (GPT-4, GPT-4o, DALL-E) through Microsoft's Azure cloud. If your organization is committed to OpenAI's model family and already operates on Azure, it is a natural fit. Bedrock's advantage is model diversity. You are not locked into a single model provider, and you can switch models as the competitive landscape evolves.

Bedrock also offers native RAG through Knowledge Bases, while Azure OpenAI requires more manual integration with Azure AI Search for similar functionality.

Amazon Bedrock vs. Google Vertex AI. Vertex AI is Google Cloud's machine learning platform, offering access to Gemini models alongside tools for training, deploying, and managing ML models broadly. Vertex AI is more comprehensive as a general ML platform, covering traditional ML and generative AI. Bedrock is more focused. It is purpose-built for generative AI consumption and customization.

Organizations that only need generative AI capabilities, not full ML pipelines, may find Bedrock simpler to adopt.

Amazon Bedrock vs. Direct API Integration. Organizations can bypass managed platforms entirely and integrate directly with model providers like Anthropic, OpenAI, or Mistral through their native APIs. This offers maximum flexibility but requires managing authentication, rate limiting, failover, caching, and compliance independently.

Bedrock bundles these operational concerns into a managed service, which reduces engineering overhead at the cost of some flexibility and the addition of AWS as an intermediary.

For organizations building L&D tools or internal AI applications, the decision often comes down to existing cloud infrastructure. Bedrock is strongest when you are already on AWS and want managed simplicity with model optionality.

Evaluating Amazon Bedrock for Your Organization

Adopting Bedrock is not just a technical decision. It is an organizational one that touches infrastructure, security, cost management, and workforce readiness.

Infrastructure alignment. Bedrock delivers the most value to organizations already invested in AWS. If your data lives in S3, your applications run on Lambda or ECS, and your team manages infrastructure through CloudFormation or Terraform on AWS, Bedrock integrates naturally. If your primary cloud is Azure or GCP, evaluate their native AI services first before introducing cross-cloud complexity.

Data readiness. The power of Bedrock's Knowledge Bases depends on having well-organized, high-quality document repositories. Before deploying RAG, audit your content. Outdated, contradictory, or poorly structured documents produce poor AI responses regardless of model quality. Organizations should invest in data fluency across their teams to ensure the data feeding AI systems is accurate and well-maintained.

Cost modeling. Bedrock's pricing is usage-based, charged per input and output token. Costs scale with volume, model selection, and whether you use on-demand or provisioned throughput. Before committing, run representative workloads through the platform to establish baseline costs. Compare these against alternatives and against the engineering cost of self-managed infrastructure.

Detailed cost analysis should be part of your broader HR analytics and technology investment framework.
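A baseline estimate for on-demand usage is simple arithmetic over token counts. The sketch below is a hypothetical helper; the per-1,000-token rates in the usage example are made-up placeholders, not actual Bedrock prices, and should be replaced with the published rate for the model you are evaluating.

```python
def estimate_monthly_cost(
    requests_per_day: int,
    avg_input_tokens: int,
    avg_output_tokens: int,
    input_price_per_1k: float,
    output_price_per_1k: float,
    days: int = 30,
) -> float:
    """Estimate on-demand spend from average token counts per request."""
    per_request = (
        (avg_input_tokens / 1000) * input_price_per_1k
        + (avg_output_tokens / 1000) * output_price_per_1k
    )
    return round(requests_per_day * days * per_request, 2)


# Usage with placeholder rates (not real prices):
#   estimate_monthly_cost(1000, 500, 200,
#                         input_price_per_1k=0.001, output_price_per_1k=0.002)
```

RAG workloads tend to have much larger input token counts than plain chat because retrieved chunks are added to every prompt, so measure averages from a representative pilot rather than guessing.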

Security and compliance. Bedrock encrypts data in transit and at rest, supports AWS PrivateLink for VPC-level network isolation, and provides CloudTrail logging for audit trails. Data submitted for inference is not stored by AWS or shared with model providers. For regulated industries, these controls are baseline requirements. Verify that Bedrock's compliance certifications (SOC, ISO, HIPAA eligibility) align with your specific regulatory obligations.

Team capability. Deploying Bedrock effectively requires developers who understand API integration, prompt engineering, and RAG architecture. It also requires stakeholders who can evaluate AI outputs critically.

Investing in bias training and competency assessment ensures your team can identify when models produce biased, inaccurate, or inappropriate outputs rather than deploying AI blindly.

The role of AI in online learning continues to expand, and platforms like Bedrock make it possible to build sophisticated AI features without deep machine learning expertise. But responsible deployment still requires organizational preparation, clear use case definition, and ongoing evaluation.

For a deeper technical understanding of the platform's capabilities and API reference, consult the official Amazon Bedrock documentation.

Frequently Asked Questions

What foundation models are available through Amazon Bedrock?

Amazon Bedrock provides access to foundation models from multiple providers, including Anthropic's Claude models, Meta's Llama models, Mistral AI's models, Cohere's Command and Embed models, Stability AI's image generation models, and Amazon's own Titan models for text, embeddings, and image generation. The model roster expands regularly as AWS adds new providers and model versions.

This multi-provider approach allows organizations to select the model that best fits each specific use case based on quality, cost, and latency requirements.

Does Amazon Bedrock store or use my data to train models?

No. Amazon Bedrock does not store prompts or completions, and your data is not used to train or improve the base foundation models. Fine-tuning and continued pre-training create private model copies accessible only to your AWS account. Data transmitted to and from the service is encrypted, and organizations can use AWS PrivateLink to keep all traffic within their virtual private cloud. These privacy controls make Bedrock suitable for workloads involving sensitive, proprietary, or regulated data.

How does Amazon Bedrock pricing work?

Bedrock uses a pay-per-use pricing model based on the number of input and output tokens processed. Each foundation model has its own per-token rate, with more capable models generally costing more. Organizations can choose between on-demand pricing, where you pay only for what you use with no commitments, and provisioned throughput, where you purchase reserved capacity for consistent performance.

Additional costs apply for features like Knowledge Bases (vector storage and embedding generation) and fine-tuning (training compute). Running pilot workloads before scaling is the most reliable way to forecast production costs.
